⚡ Quick Answer

AI training data from surveys often comes from preference questions, ranking tasks, and feedback forms that companies can repurpose to improve models or related decision systems. The ethical problem is that many users don't realize they're performing unpaid data-labeling work, often under vague consent language.

AI training data from surveys rarely announces itself. It shows up as a satisfaction poll, a product quiz, a ranking task, or a breezy request for feedback that feels minor at the time. It isn't. Underneath, the economics matter. When companies ask users to compare outputs, explain preferences, or correct responses, they may be gathering the exact human judgment signals that make models better. And too often, they get that labor for little money or none at all.

What is AI training data from surveys and why are people angry about it?

AI training data from surveys is human feedback pulled from forms, polls, ranking tasks, and response comparisons that can sharpen AI systems. People are upset because the work feels casual while functioning as unpaid annotation or preference labeling. A multiple-choice survey that asks which answer feels more helpful, safer, or more human can feed straight into reward modeling, ranking pipelines, or evaluator tuning. That's not abstract guesswork. Google and OpenAI, for example, have both publicly discussed feedback loops, pairwise comparisons, and user signals that refine their systems. The issue isn't that feedback exists. It's that many interfaces hide the labor value of that feedback and tuck future reuse into broad consent terms. We'd put it bluntly: when firms package productive labeling work as routine engagement, users have a real reason to feel played. And that anger gets sharper when those same firms talk up automating jobs built on human judgment.

Are surveys used to train AI in practice?

Yes, surveys are used to train AI in practice when they collect preferences, corrections, rankings, or task-specific judgments that fit model improvement workflows. That's how much of modern model refinement works. RLHF, DPO-style preference learning, search ranking systems, recommender tuning, and quality evaluation pipelines all benefit from human comparison data, whether teams get it from paid raters or everyday users. Google, Meta, Microsoft, OpenAI, and a swarm of startups have all described products that fold user feedback into iterative improvement, even if the exact plumbing differs. A customer support tool might ask which draft reply worked best. A writing app might ask users to rate usefulness. Qualtrics-style survey tooling could ask respondents why one summary felt clearer than another. Once those judgments are structured, they become valuable training or evaluation signals with very little extra processing. Worth noting.
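To make "very little extra processing" concrete, here is a minimal sketch of how one survey answer ("summary A felt clearer than summary B") can become a training signal. It assumes the Bradley-Terry pairwise loss commonly used for RLHF reward models; the survey answer, the reward scores, and the helper names `sigmoid` and `pairwise_loss` are hypothetical placeholders, not taken from any specific company's pipeline.

```python
import math


def sigmoid(x: float) -> float:
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def pairwise_loss(reward_chosen: float, reward_rejected: float) -> float:
    """Bradley-Terry loss: -log(sigmoid(reward_chosen - reward_rejected)).

    Split by sign so math.exp never receives a large positive argument.
    """
    margin = reward_chosen - reward_rejected
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


# Hypothetical survey answer: the respondent said summary A was clearer than B.
answer = {"chosen": "summary_a", "rejected": "summary_b"}

# Placeholder scores a reward model might currently assign (illustrative only).
scores = {"summary_a": 0.2, "summary_b": 0.9}

p_agree = sigmoid(scores[answer["chosen"]] - scores[answer["rejected"]])
loss = pairwise_loss(scores[answer["chosen"]], scores[answer["rejected"]])
print(f"P(model agrees with respondent) = {p_agree:.3f}, loss = {loss:.3f}")
```

Because the placeholder model scores the respondent's pick lower, the agreement probability falls below 0.5 and the loss rises above log 2. Minimizing that loss across many such answers nudges the model toward the respondents' judgments, which is the sense in which a quick survey click does labeling work.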

How companies collect human data for AI through survey design

Companies collect human data for AI through survey design by turning ordinary feedback flows into structured preference capture. The pattern shows up in prompts like "rank these three options," "pick the most helpful answer," "rewrite the bad reply," "identify the safer response," or "explain your choice" in a text box. Each action creates labeled data that can train reward models, calibrate automated judges, or build benchmark sets. Here's the thing. Some firms do disclose broad reuse rights in their terms. But broad legal permission isn't the same as informed consent at the moment of collection. A concrete example: user research software may ask participants to compare generated outputs under the banner of improving the experience, while those same responses can later feed AI quality pipelines. The business logic isn't mysterious. Paid expert labeling costs money, while product surveys shift part of that cost onto users. That's efficient for companies and irritating for everyone else. We'd argue that's not trivial.
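A single "rank these three options" question yields more labels than it appears to. A minimal sketch, assuming the (prompt, chosen, rejected) record shape used by many preference-learning datasets: a best-first ranking of n options implies n·(n-1)/2 pairwise judgments, and the "explain your choice" text can ride along as a rationale. The survey item, the field names, and `ranking_to_pairs` are hypothetical.

```python
from itertools import combinations
from typing import Dict, List


def ranking_to_pairs(prompt: str, ranked: List[str]) -> List[Dict[str, str]]:
    """Expand one best-first ranking into every pairwise preference it implies."""
    pairs = []
    # combinations() keeps input order, so in each pair the first item
    # was ranked above the second by the respondent.
    for better, worse in combinations(ranked, 2):
        pairs.append({"prompt": prompt, "chosen": better, "rejected": worse})
    return pairs


# Hypothetical survey item: "Rank these three draft replies, best first."
survey_response = {
    "prompt": "Customer asks how to reset a password.",
    "ranking": ["reply_b", "reply_a", "reply_c"],
    "explanation": "B gives the exact steps; C is vague.",
}

records = ranking_to_pairs(survey_response["prompt"], survey_response["ranking"])
for record in records:
    # The free-text "explain your choice" answer is attached as a rationale.
    record["rationale"] = survey_response["explanation"]
    print(record["chosen"], ">", record["rejected"])
```

Three drags in a ranking widget become three labeled comparisons plus a written rationale, roughly the kind of record a paid rater would otherwise be hired to produce.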

What are the ethical concerns with AI surveys and unpaid data labeling?

The ethical concerns with AI surveys center on hidden labor, weak consent, lopsided value capture, and the chance that workers get replaced by systems trained on data they unknowingly helped create. That's why this lands harder than a generic privacy dispute. If a company extracts high-value human preference judgments from surveys without clear disclosure or compensation, it blurs the line between user feedback and labor. Labor researchers have raised parallel concerns for years around clickwork, content moderation, and the invisible data tasks that prop up AI systems. Think of Amazon Mechanical Turk. The same logic applies here, even if the interface looks friendlier. And there's a clear financial incentive behind it, because high-quality human labels are expensive, especially in legal review, medicine, or safety evaluation. When firms cut acquisition costs by disguising labeling as feedback, they don't just gather data cheaply. They also take agency away from the people producing it. Worth watching.

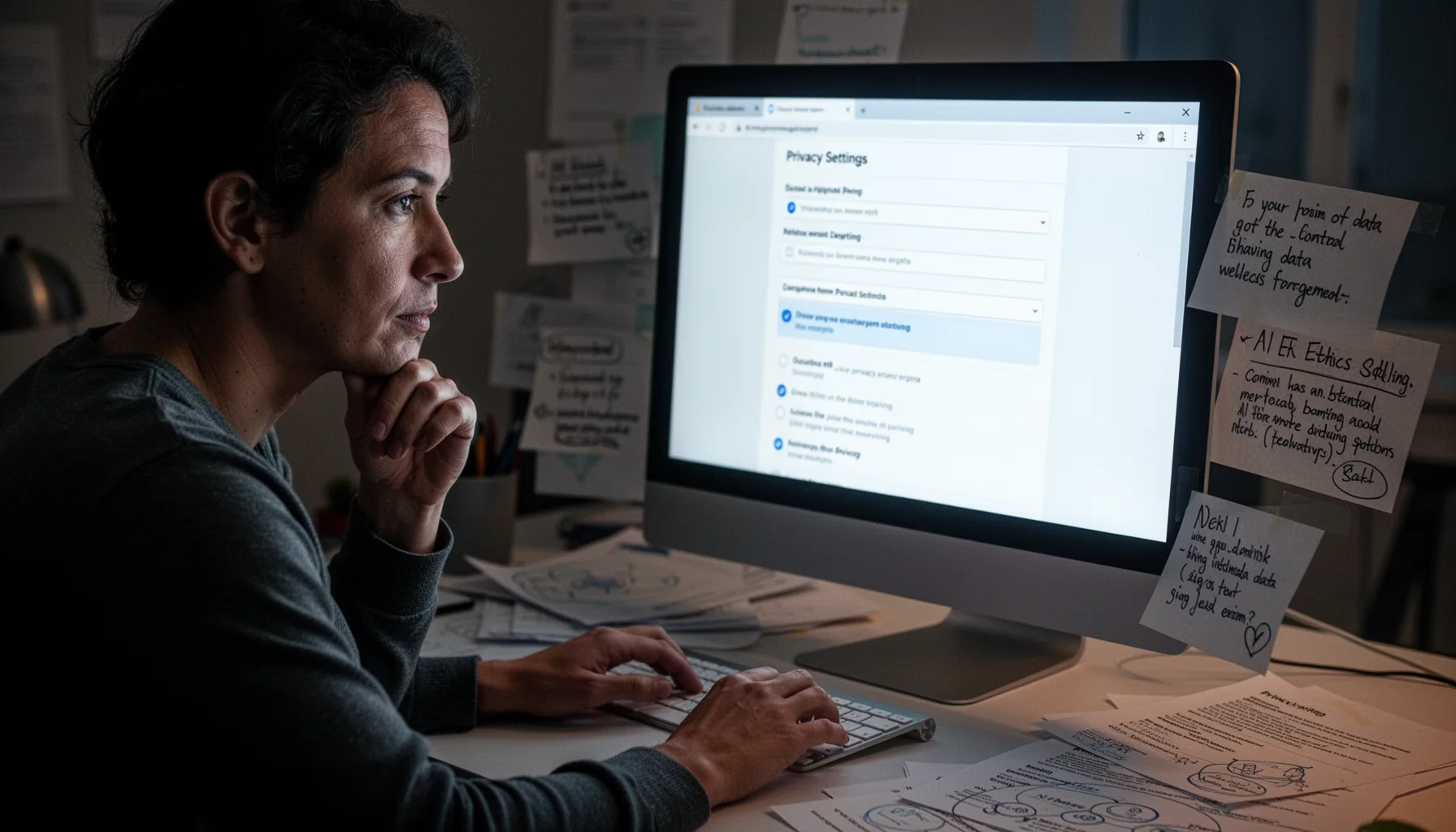

How to opt out of AI training data collection from surveys

You can only partly opt out of AI training data collection from surveys, but a few practical habits can cut how much unpaid signal you hand over. First, inspect consent language for phrases like "improve our services," "train models," "enhance automated systems," "share with partners," or "retain responses for research." Second, skip optional ranking or free-text explanation fields when their purpose isn't plain. Third, use privacy settings, account controls, and region-specific rights requests under laws like GDPR or CCPA where they apply. Adobe, Google, Meta, and Microsoft now expose at least some controls around training or product-improvement data, though the defaults and scope vary a lot. Still, the strongest defense is skepticism. If a survey asks you to compare outputs, rewrite answers, or explain qualitative preferences, treat it as possible labeling work. And if the company won't explain the use in plain language, don't do the work for them. That's our view.
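The first habit, scanning consent text for reuse phrases, is easy to automate as a rough first pass. This is a minimal sketch, assuming plain-text consent language and a literal, case-insensitive substring match against the phrases listed in the paragraph above; `flag_consent_language` and the sample consent text are hypothetical. Literal matching misses paraphrases such as "training our models," so an empty result means "no obvious red flag," not a guarantee.

```python
import re
from typing import List, Sequence

# Watch phrases copied from the checklist in the paragraph above.
WATCH_PHRASES = (
    "improve our services",
    "train models",
    "enhance automated systems",
    "share with partners",
    "retain responses for research",
)


def flag_consent_language(text: str, phrases: Sequence[str] = WATCH_PHRASES) -> List[str]:
    """Return the watch phrases found in `text`, ignoring case and line breaks."""
    # Collapse runs of whitespace so phrases split across lines still match.
    normalized = re.sub(r"\s+", " ", text).lower()
    return [phrase for phrase in phrases if phrase in normalized]


# Hypothetical consent text from a product survey.
consent = """By submitting, you agree we may use your answers to
improve our services and to train   models that power our products."""

flags = flag_consent_language(consent)
print(flags)
```

Extending `WATCH_PHRASES` with variants you encounter is cheap; the harder part is still reading the surrounding sentence to see what the phrase actually permits.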

Key Takeaways

- ✓ AI training data from surveys often looks like harmless feedback, but it can also function as labeling work

- ✓ The core issue is hidden labor, weak consent, and cheap preference data used for model improvement

- ✓ Survey prompts that ask you to rank, compare, or correct outputs deserve extra scrutiny

- ✓ Users can reduce exposure by checking consent language, skipping optional detail fields, and opting out where possible

- ✓ Companies save money when they collect human signals through ordinary product flows instead of paid annotation