⚡ Quick Answer

An AI writing automation system with Claude Code works best as an editorial pipeline, not a one-click content machine. Claude Code adds repeatable structure, file-level control, and review checkpoints that make article production faster without flattening voice.

An AI writing automation system with Claude Code only pays off when it acts like an editorial pipeline. That's the real story. Lots of teams can crank out draft copy now, but far fewer can turn rough ideas into publishable articles with steady quality, clear approvals, and a voice that still feels human. We're watching the focus move from prompt fiddling to production design. And that's when Claude Code stops feeling like a chatbot and starts acting like infrastructure.

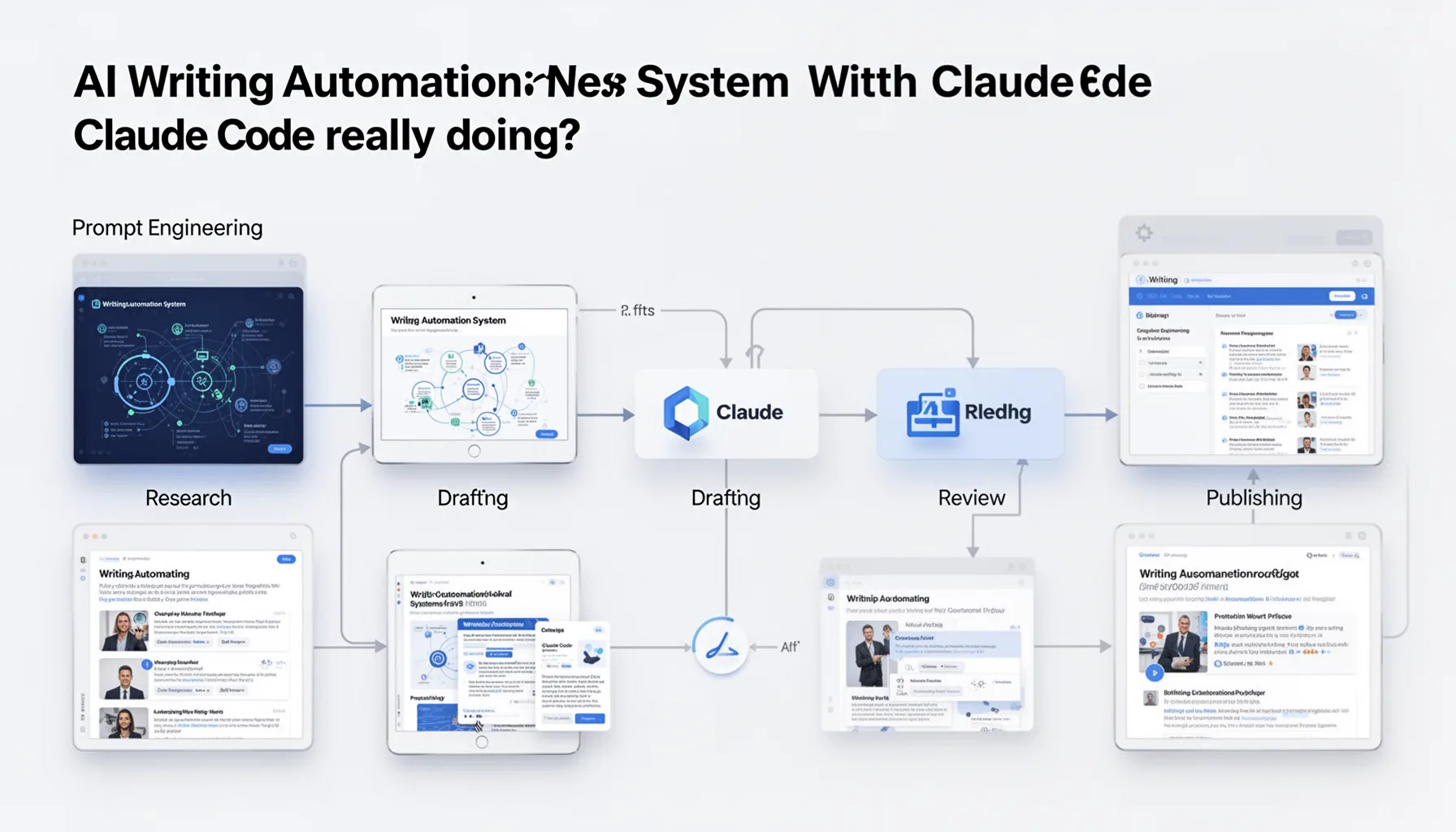

What is an AI writing automation system with Claude Code really doing?

An AI writing automation system with Claude Code coordinates research, drafting, review, and publishing through a structured content pipeline. That's the useful frame. Too many posts sell automation as a way to remove editors, but the better aim is to make each step less brittle and more repeatable. In our view, the strongest setups treat article production like a newsroom workflow, with inputs, assignment logic, style constraints, and explicit handoffs. Claude Code fits that model well because it can work across files, instructions, and project structure instead of leaning on one oversized prompt in a chat window. That's a practical difference. For example, a content team might keep briefs, brand voice rules, source lists, internal linking targets, and publishing templates in a repo that Claude Code can read and update. Anthropic presents Claude Code as an agentic coding tool for work inside real projects, and that file-aware behavior is exactly what makes it useful for content systems too. We'd argue that's what separates a serious Claude Code content automation workflow from a flashy demo.

How to build an AI writing automation system with Claude Code without losing voice

To build an AI writing automation system with Claude Code without flattening voice, you need controlled variability instead of rigid uniformity. That's the editorial trick. A bland article usually comes from over-standardized prompting, weak source grounding, or no human involvement when the angle gets set. So the pipeline should start with idea intake, where humans decide the thesis, audience, and point of view before any drafting begins. Then Claude Code can assemble a brief, pull approved source files, draft against a style guide, and flag uncertain claims for review instead of faking confidence. The New York Times and Axios rely on strong editorial structures even when automation enters parts of the workflow, and the same rule holds in smaller AI-first teams. According to the Content Marketing Institute's 2024 B2B benchmarks, 58% of top-performing teams document content processes, which suggests that repeatability matters more than raw output speed. My take is simple: voice doesn't survive by accident. It survives because someone designs for it.
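One way to make "flag uncertain claims for review" concrete is to agree on an inline marker that the drafting step must use and that a small script can collect for the editor. The sketch below assumes a hypothetical `[UNVERIFIED: reason]` marker; the marker format and the function name are our own illustration, not a Claude Code feature.

```python
import re

# Hypothetical convention: the draft marks shaky claims as [UNVERIFIED: reason].
FLAG_PATTERN = re.compile(r"\[UNVERIFIED:\s*([^\]]+)\]")

def list_flagged_claims(draft_text: str) -> list[tuple[int, str]]:
    """Return (line_number, reason) for every unverified-claim marker in a draft."""
    flagged = []
    for number, line in enumerate(draft_text.splitlines(), start=1):
        for match in FLAG_PATTERN.finditer(line):
            flagged.append((number, match.group(1).strip()))
    return flagged

SAMPLE_DRAFT = (
    "Teams ship faster with a pipeline. [UNVERIFIED: no source for this]\n"
    "Voice survives because someone designs for it."
)
print(list_flagged_claims(SAMPLE_DRAFT))  # [(1, 'no source for this')]
```

An editor can treat a non-empty result as a blocker: the draft does not advance until each marker is either sourced or cut.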

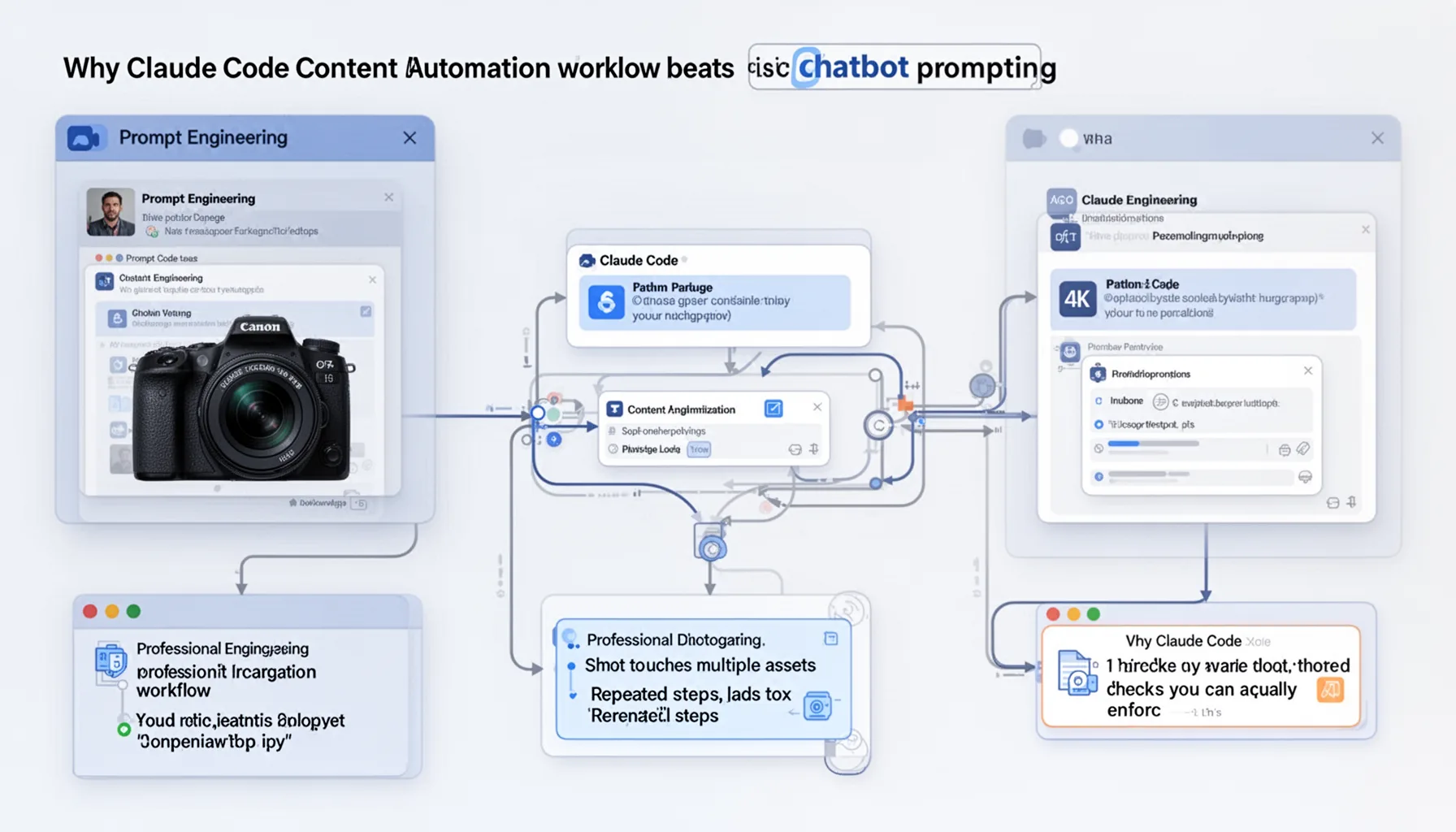

Why Claude Code content automation workflow beats basic chatbot prompting

A Claude Code content automation workflow beats basic chatbot prompting when the job touches multiple assets, repeated steps, and checks you can actually enforce. Here's the thing. Most article production isn't one prompt, one answer, done. It's a chain of operations: title testing, source collection, outline generation, internal link placement, revision history, and publishing controls. Claude Code can work closer to that reality because it can inspect files, update documents, and follow project-specific instructions over time. That gives teams something no-code glue alone often struggles to provide: durable context. Zapier or Make can trigger actions well, but they don't naturally reason through a repository of editorial rules the way a code-native assistant can. For a concrete example, a team could ask Claude Code to generate a draft, compare it against a house style markdown file, then open a fact-check checklist before moving anything into a CMS-ready folder. We'd say that's a more dependable AI content pipeline using Claude Code than juggling disconnected prompts across browser tabs.
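To show what "compare it against a house style markdown file" could look like as an enforceable check, here is a minimal sketch. It assumes a hypothetical layout in which the style guide lists banned phrases as bullets under a `## Banned phrases` heading; that heading, the sample text, and both function names are illustrative assumptions, not a format Claude Code prescribes.

```python
import re

def load_banned_phrases(style_guide_text: str) -> list[str]:
    """Collect bullet items listed under a '## Banned phrases' heading."""
    phrases = []
    in_section = False
    for line in style_guide_text.splitlines():
        stripped = line.strip()
        if stripped.startswith("## "):
            # Entering a new section; track whether it is the banned list.
            in_section = stripped.lower() == "## banned phrases"
        elif in_section and stripped.startswith("- "):
            phrases.append(stripped[2:].strip().lower())
    return phrases

def find_style_violations(draft_text: str, banned: list[str]) -> list[tuple[int, str]]:
    """Return (line_number, phrase) for each banned phrase found in the draft."""
    violations = []
    for number, line in enumerate(draft_text.splitlines(), start=1):
        lowered = line.lower()
        for phrase in banned:
            if re.search(r"\b" + re.escape(phrase) + r"\b", lowered):
                violations.append((number, phrase))
    return violations

STYLE = """# House style

## Banned phrases
- game changer
- in today's world

## Tone
- Direct, opinionated
"""
DRAFT = "Claude Code is a game changer.\nIt reads project files."

banned = load_banned_phrases(STYLE)
print(find_style_violations(DRAFT, banned))  # [(1, 'game changer')]
```

Keeping the rules in a file rather than in a prompt means the same check runs the same way on every draft, which is the durable context a chat window can't offer.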

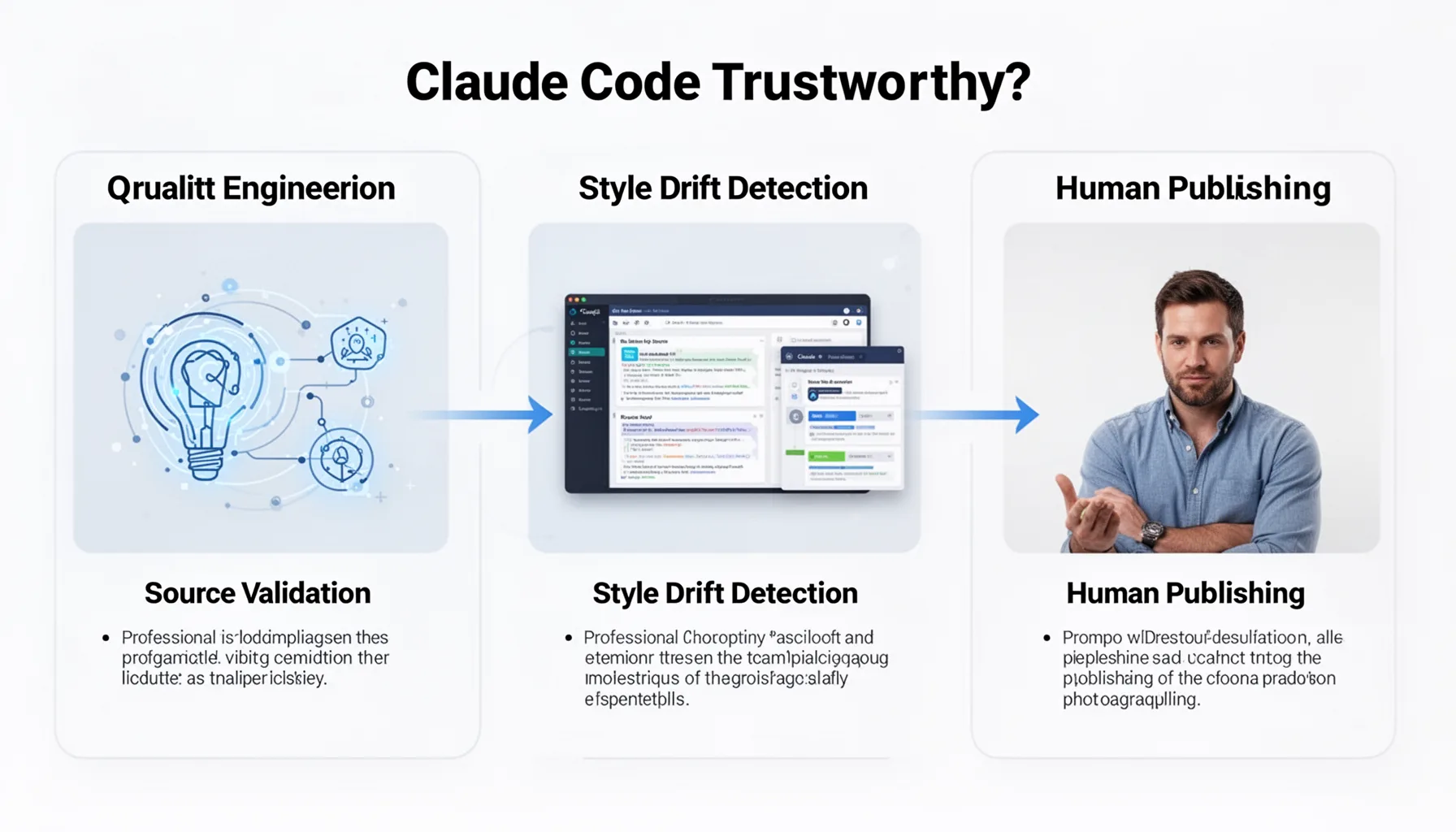

What quality controls make an AI content pipeline using Claude Code trustworthy?

The quality controls that make an AI content pipeline using Claude Code trustworthy come down to source validation, style drift detection, and human publishing gates. None of these is optional. AI writing tends to fail in familiar ways: it sands down strong opinions, invents details, and slowly drifts away from a publication's tone over repeated runs. So the system needs explicit countermeasures, such as approved-source directories, a ban on claims without citation, article-level voice checks, and a required editor signoff before publication. Google Search guidance on helpful content still rewards people-first pages, and thin AI copy usually loses because it lacks original insight and editorial accountability. A practical setup might score drafts against custom rubrics for specificity, evidence density, and sentence variation before an editor even opens the file. Semrush reported in 2024 that content quality and relevance remain among the strongest drivers of search visibility. That lines up with what operators already know from painful experience. The best AI workflow for writing articles doesn't skip review. It makes review faster and sharper.
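A rubric like that can start out very crude and still be useful. The sketch below is one possible scoring pass, with every metric an assumption of ours: sentence variation is the standard deviation of sentence lengths in words, evidence density is markdown links per 100 words, and specificity is the share of words containing a digit. The thresholds are placeholders a team would tune against its own published articles.

```python
import re
import statistics

def split_sentences(text: str) -> list[str]:
    """Naive splitter: break after '.', '!' or '?' followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]

def score_draft(text: str) -> dict[str, float]:
    """Compute three rough rubric scores for a draft (all heuristics)."""
    words = text.split()
    lengths = [len(s.split()) for s in split_sentences(text)]
    # Assumed citation style: inline markdown links such as [label](url).
    links = re.findall(r"\[[^\]]+\]\([^)]+\)", text)
    # Specificity proxy: words that contain at least one digit.
    numeric = [w for w in words if any(c.isdigit() for c in w)]
    word_count = max(len(words), 1)
    return {
        "sentence_variation": statistics.pstdev(lengths) if lengths else 0.0,
        "evidence_density": 100 * len(links) / word_count,
        "specificity": len(numeric) / word_count,
    }

def failed_checks(scores: dict[str, float], minimums: dict[str, float]) -> list[str]:
    """Name every rubric item whose score falls below its minimum."""
    return [name for name, minimum in minimums.items() if scores[name] < minimum]

SAMPLE = "Short one. This sentence is quite a bit longer than the first."
scores = score_draft(SAMPLE)
print(failed_checks(scores, {"sentence_variation": 2.0, "evidence_density": 0.5}))
```

Scores like these don't judge quality on their own. Their job is to route the editor's attention, so a draft with no citations or uniform sentence lengths gets flagged before anyone reads it closely.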

How this AI writing automation system with Claude Code fits a wider content strategy

An AI writing automation system with Claude Code fits a broader content strategy when it supports clusters, internal linking, and editorial planning instead of isolated article output. That's where supporting content earns its keep. For this topic, the smart move is linking back to the broader pillar on Claude Code, Prompting & LLM Builder Workflows, while also connecting to sibling pieces on agentic prompting, repo-aware assistants, and production LLM operations. Claude Code can help enforce that by inserting link opportunities, checking target keywords, and aligning article structure with a topical map stored in project files. HubSpot has spent years making the case that cluster-based publishing improves discoverability, and AI systems now make that architecture easier to maintain at scale. But scale can hide weak thinking. Our read is that the strongest teams use automation to preserve strategy across dozens of articles, not to flood the web with interchangeable posts.
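As a sketch of how a topical map in project files could be enforced, the example below checks a markdown article for a link to its pillar page and a minimum number of sibling links. The JSON shape, the URL slugs, and the function names are hypothetical; a real map would follow whatever structure your site already uses.

```python
import json
import re

def extract_link_targets(markdown_text: str) -> set[str]:
    """Collect the URL part of every inline markdown link."""
    return set(re.findall(r"\[[^\]]*\]\(([^)\s]+)\)", markdown_text))

def missing_cluster_links(markdown_text: str, topical_map: dict, min_siblings: int = 1) -> list[str]:
    """List cluster-linking problems; an empty list means the article passes."""
    linked = extract_link_targets(markdown_text)
    problems = []
    if topical_map["pillar"] not in linked:
        problems.append(f"missing pillar link: {topical_map['pillar']}")
    sibling_hits = [url for url in topical_map["siblings"] if url in linked]
    if len(sibling_hits) < min_siblings:
        problems.append(
            f"needs at least {min_siblings} sibling link(s), found {len(sibling_hits)}"
        )
    return problems

# Hypothetical topical map, as it might be stored in a JSON file in the repo.
TOPICAL_MAP = json.loads(
    '{"pillar": "/claude-code-workflows",'
    ' "siblings": ["/agentic-prompting", "/repo-aware-assistants"]}'
)
ARTICLE = "See our [pillar guide](/claude-code-workflows) for context."
print(missing_cluster_links(ARTICLE, TOPICAL_MAP))
```

Because the map lives next to the articles, the same check can run on every draft, which is how strategy survives across dozens of pieces.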

Step-by-Step Guide

1. Define the editorial intake

Start by deciding how ideas enter the system. Use a brief template that captures audience, thesis, target keyword, source expectations, and the one opinion the piece must express. And make a human approve that brief before Claude Code writes anything, because bad inputs create polished nonsense.

2. Store your rules in files

Put style guides, brand voice notes, citation rules, formatting templates, and internal linking maps in a repository Claude Code can access. This matters because repeatability comes from persistent instructions, not memory games in a chat box. You'll get better outputs when the system reads the same canon every time.

3. Generate structured briefs first

Ask Claude Code to produce an outline, angle statement, FAQ candidates, and source requests before drafting the article. That step catches weak framing early. And it gives editors a cheap place to intervene before the expensive part begins.

4. Draft with source constraints

Require Claude Code to draft only from approved notes, linked research, and internal documentation. Tell it to mark uncertain claims instead of filling gaps with smooth filler. That's slower by a few minutes, but far safer for factual integrity.

5. Run editorial review loops

Add at least three checkpoints: angle review, factual review, and final line edit. Different humans can own each gate if your team is large enough. But even solo operators should separate drafting from approval, because distance improves judgment.

6. Publish with controlled handoff

Move approved content into the CMS only after metadata, links, schema, and formatting pass a final checklist. Claude Code can prepare the package, but a person should still decide go or no-go. That's how you automate blog writing with AI agents without turning your site into an unattended feed.
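The steps above can be sketched as a small set of ordered gates, each of which needs a named human approver. This is a minimal illustration under our own assumptions: the brief field names, the gate names, and the `Article` class are hypothetical and simply mirror the brief template and the review checkpoints described in the guide.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical names mirroring the brief template and review checkpoints above.
REQUIRED_BRIEF_FIELDS = ("audience", "thesis", "target_keyword", "sources", "opinion")
REVIEW_GATES = ("brief", "angle", "facts", "line_edit", "publish")

@dataclass
class Article:
    brief: dict[str, str]
    approvals: dict[str, str] = field(default_factory=dict)  # gate -> reviewer

    def missing_brief_fields(self) -> list[str]:
        """Brief fields that are absent or blank."""
        return [f for f in REQUIRED_BRIEF_FIELDS if not self.brief.get(f, "").strip()]

    def approve(self, gate: str, reviewer: str) -> None:
        """Record a human approval; gates must be cleared in order."""
        if gate not in REVIEW_GATES:
            raise ValueError(f"unknown gate: {gate}")
        if gate == "brief" and self.missing_brief_fields():
            raise ValueError(f"brief is incomplete: {self.missing_brief_fields()}")
        for earlier in REVIEW_GATES[: REVIEW_GATES.index(gate)]:
            if earlier not in self.approvals:
                raise ValueError(f"gate '{earlier}' must be approved before '{gate}'")
        self.approvals[gate] = reviewer

    def next_gate(self) -> Optional[str]:
        """The first gate still waiting for approval, or None when all are cleared."""
        for gate in REVIEW_GATES:
            if gate not in self.approvals:
                return gate
        return None

article = Article(brief={
    "audience": "content leads",
    "thesis": "automation works as an editorial pipeline",
    "target_keyword": "AI writing automation system",
    "sources": "approved notes only",
    "opinion": "voice has to be designed for",
})
article.approve("brief", "Dana")
print(article.next_gate())  # angle
```

The design choice worth copying is that nothing in the code can approve a gate by itself. Claude Code can prepare the package, but `approve` only records a decision a person has made, and it refuses to skip ahead.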

Key Takeaways

- ✓ Treat automation like an editorial desk, not a content vending machine

- ✓ Claude Code stands out when prompts, files, and review rules live in the same system

- ✓ Human review should happen at angle selection, fact checks, and final polish

- ✓ Quality control needs style guards, source checks, and publishing approvals

- ✓ The best AI workflow for writing articles keeps voice variable while the process stays consistent