⚡ Quick Answer

Gemma 3 interpretability research does not literally read a model’s mind, but it does offer a new way to translate internal model activity into human-readable explanations around token prediction. Anthropic and Neuronpedia’s Natural Language Autoencoders are best understood as a practical interpretability tool with real promise and very real limits.

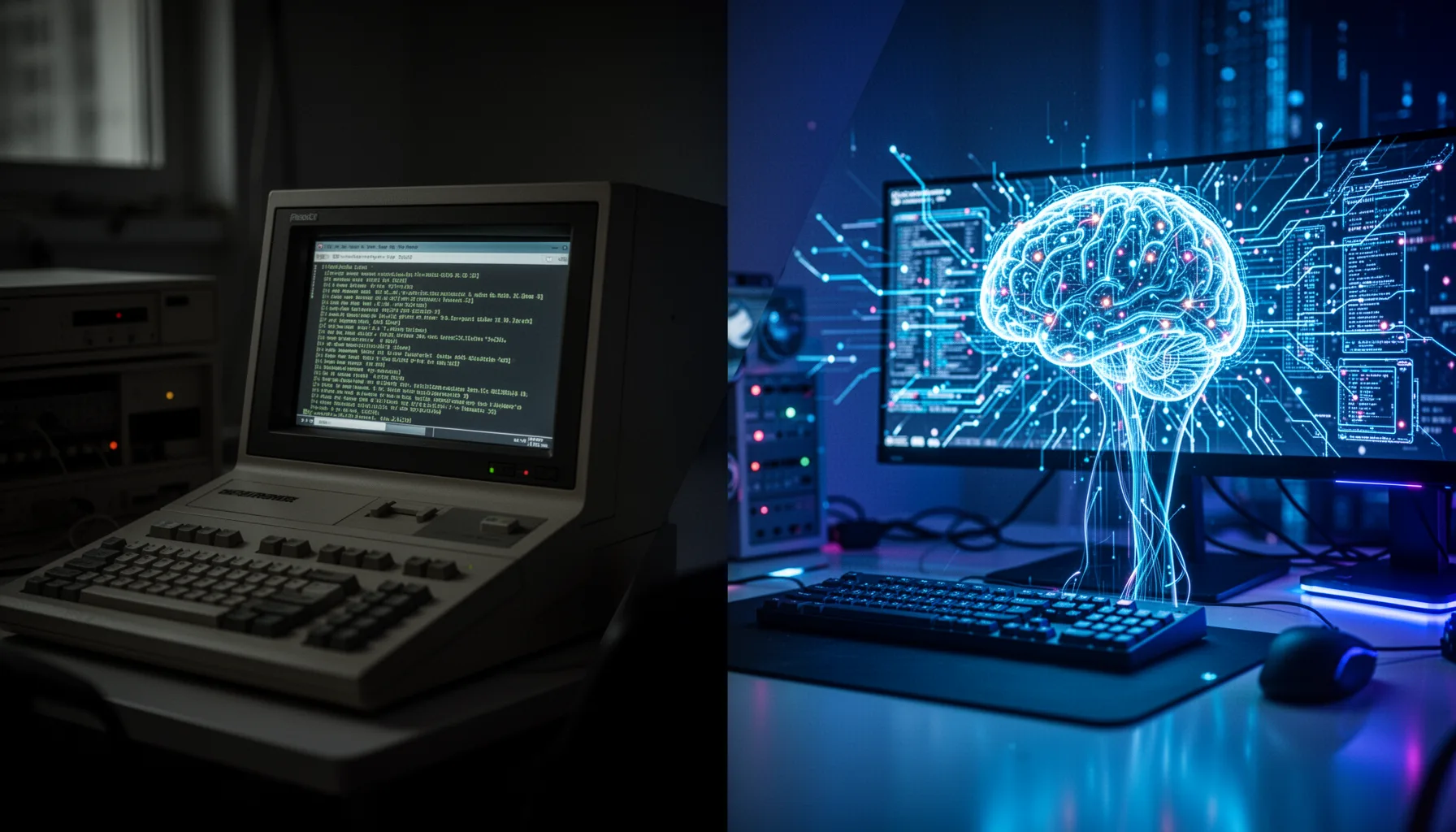

You can now read Gemma 3's mind. That's the headline, anyway. The reality is less magical and a lot more practical. Anthropic's Gemma 3 interpretability work, with Neuronpedia tied in on tooling and access, suggests a new way to turn internal model activity into language people can actually inspect. And if you care about debugging, safety review, or enterprise trust, that's not trivial.

What is Gemma 3 interpretability research actually showing?

Gemma 3 interpretability research suggests researchers can use Natural Language Autoencoders (NLAs) to produce readable descriptions of internal model states tied to next-token prediction. That's a bigger shift than it sounds. But nobody found a tiny narrator hiding inside the model. Anthropic's framing of NLAs points to a translation layer instead: one model or component captures internal representations, and another renders those representations as text people can inspect. In plain English, the method tries to describe the patterns the model appears to rely on at a given moment during generation. Gemma is a sensible target for this work because Google released it as an open model family, so researchers can probe weights and activations more directly than they can with closed systems. We'd argue the real milestone isn't mind-reading. It's making murky intermediate signals somewhat less murky without pretending they're fully legible.

How do Natural Language Autoencoders work?

Natural Language Autoencoders map hidden internal activations into compact representations, then decode those into natural-language descriptions. Anthropic described NLAs as a pair of language-model-based components, which means the interpretability pipeline itself relies on learned translation rather than a plain lookup table. That gives teams a real leg up. It also opens the door to a fresh kind of error: if the decoder produces a tidy explanation that sounds right but only partly matches the true internal state, people may trust it more than they should. Neuronpedia matters here because interpretability tooling succeeds or fails on inspection workflows, shared visualizations, and reproducible examples, not on papers alone. We'd say the strongest use case is comparative debugging: checking, token by token, whether the model tracks topic, syntax, refusal behavior, or stray cues. That's far more grounded than claiming we finally know what an LLM is thinking.
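
To make the encode/decode idea concrete, here is a deliberately tiny sketch. It is not Anthropic's NLA implementation: the concept labels, the 4-dimensional activation, and the functions `encode_to_text` and `decode_from_text` are hypothetical stand-ins, and a real NLA uses language models for both halves rather than a fixed dictionary of directions. What it does show is the round trip, and how a reconstruction gap can flag a description that reads cleanly but leaves part of the signal out.

```python
import math

# Hypothetical concept directions in a toy 4-d "activation space".
# In a real system these relationships would be learned, not hand-picked.
CONCEPTS = {
    "topic: weather": [1.0, 0.0, 0.0, 0.0],
    "syntax: expecting a noun": [0.0, 1.0, 0.0, 0.0],
    "behavior: refusal": [0.0, 0.0, 1.0, 0.0],
    "cue: quoted instruction": [0.0, 0.0, 0.0, 1.0],
}


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def encode_to_text(activation, k=2):
    """Toy 'encoder': describe an activation by its top-k positive concept scores."""
    scored = sorted(((dot(activation, v), name) for name, v in CONCEPTS.items()), reverse=True)
    return [(name, round(score, 2)) for score, name in scored[:k] if score > 0]


def decode_from_text(description, dim=4):
    """Toy 'decoder': rebuild an activation using only what the description says."""
    rebuilt = [0.0] * dim
    for name, weight in description:
        rebuilt = [r + weight * c for r, c in zip(rebuilt, CONCEPTS[name])]
    return rebuilt


def reconstruction_error(original, rebuilt):
    """Euclidean distance between the real activation and the text-only rebuild."""
    return math.sqrt(sum((o - r) ** 2 for o, r in zip(original, rebuilt)))
```

With `activation = [0.9, 0.1, 0.0, 0.4]`, the description keeps the weather and quoted-instruction signals, silently drops the weak syntax signal, and the reconstruction error comes out at about 0.1. The explanation sounds complete; the nonzero gap is the hint that it isn't.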

How does "reading Gemma 3's mind" compare with older interpretability tools?

The "mind-reading" claim makes more sense when you compare NLAs with older interpretability methods such as sparse autoencoders, attribution maps, and feature visualization. Sparse autoencoders try to recover human-meaningful features from dense activations, and Anthropic has already published influential work there for frontier models. Attribution methods do something else: they trace which inputs or components most affected an output. Feature visualization, in turn, tries to characterize what individual units or directions respond to. Each method answers a different question. Here's the thing. NLAs look especially interesting because they aim to produce a usable linguistic summary of internal computation near a specific token decision, which could make analysis quicker for practitioners. But they probably won't replace lower-level methods with tighter mechanistic grounding. Think of NLAs as an interface layer for interpretability, not the whole toolbox. That's the more credible claim, and frankly the more useful one.
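
For contrast, a sparse autoencoder can be sketched in a few lines. This follows a common formulation (encode with a ReLU, decode linearly, train on reconstruction error plus an L1 sparsity penalty), written in plain Python with toy 2-dimensional activations and three hypothetical features. Real SAEs are trained on huge numbers of activations with far wider dictionaries, and the weights below are hand-set for illustration. Note what comes out: a vector of feature activations, not a sentence. Someone still has to work out what each feature means.

```python
def relu(x):
    return x if x > 0 else 0.0


def matvec(m, v):
    """Multiply matrix m (list of rows) by vector v."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def sae_forward(x, w_enc, b_enc, w_dec, b_dec):
    """f = ReLU(W_enc (x - b_dec) + b_enc);  x_hat = W_dec f + b_dec.

    w_enc has one row per feature; w_dec has one row per activation dimension.
    """
    centered = [xi - bi for xi, bi in zip(x, b_dec)]
    pre = [p + b for p, b in zip(matvec(w_enc, centered), b_enc)]
    f = [relu(p) for p in pre]  # sparse, non-negative feature activations
    x_hat = [r + b for r, b in zip(matvec(w_dec, f), b_dec)]
    return f, x_hat


def sae_loss(x, f, x_hat, l1_coeff):
    """Mean squared reconstruction error plus an L1 penalty that encourages sparsity."""
    mse = sum((a - b) ** 2 for a, b in zip(x, x_hat)) / len(x)
    sparsity = sum(abs(v) for v in f)
    return mse + l1_coeff * sparsity
```

With `x = [1.0, 0.5]`, identity-like weights for the first two features, and a third feature that responds to the negative of both dimensions, the third feature stays at zero after the ReLU, the reconstruction is exact, and the loss is just the sparsity term.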

Why Anthropic and Neuronpedia's Gemma 3 work matters for debugging and safety

This work matters because interpretability only changes real practice when researchers and builders can inspect model behavior in specific failure cases. Hallucinations, brittle refusals, prompt injection responses, and policy violations don't usually announce themselves with one obvious cause. Teams need ways to inspect whether a model latched onto bad evidence, over-weighted a misleading phrase, or followed a harmful latent pattern. That's where NLAs could make the difference. In enterprise settings, this kind of tooling may support safety audits, red-team analysis, and eval design by offering a structured view into why the model favored one token path over another. And companies already care: in McKinsey's 2024 State of AI survey, 65% of respondents said their organizations regularly use generative AI, which raises the stakes for governance and debugging. We'll say it plainly: if your company deploys LLMs in customer-facing workflows, interpretability isn't an academic side quest anymore.

What are the limits of NLAs for understanding what an LLM is thinking?

Understanding what an LLM is thinking is still an open problem, and NLAs don't solve it by themselves. That's the catch. Internal representations are distributed, contextual, and often entangled across many dimensions, so any natural-language summary can compress away the very detail that matters most. Researchers still need faithfulness checks, adversarial testing, and comparisons against behavioral evals to see whether an explanation tracks the mechanism or merely sounds convincing. Anthropic's own interpretability work has repeatedly made clear that understanding circuits in large models is painstaking and partial, not a one-shot decode. So when people say we can directly inspect an LLM's internal thoughts during next-token prediction, we'd urge caution. We can inspect a translated approximation of relevant internal signals. Useful, yes. But not the same as a complete account of machine reasoning.
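
One common style of faithfulness check is causal: if an explanation says a particular concept drove the next-token prediction, removing that concept from the activation should move the prediction. The sketch below is a generic projection-ablation test, not a procedure taken from Anthropic's NLA work. It assumes a toy linear `readout` vector standing in for one token's logit, and the "claimed" concept directions are hypothetical.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def unit(v):
    """Scale v to length 1."""
    n = math.sqrt(dot(v, v))
    return [x / n for x in v]


def ablate(activation, direction):
    """Remove the component of `activation` along `direction` (projection ablation)."""
    d = unit(direction)
    coeff = dot(activation, d)
    return [a - coeff * di for a, di in zip(activation, d)]


def logit_drop(activation, readout, claimed_direction):
    """How much the toy next-token logit falls when the claimed concept is ablated."""
    before = dot(activation, readout)
    after = dot(ablate(activation, claimed_direction), readout)
    return before - after
```

With `activation = [2.0, 1.0, 0.0]` and a readout of `[1.0, 0.0, 0.0]`, an explanation pointing at the first direction passes (the logit drops by 2.0), while one pointing at the second direction fails (a drop of 0.0), however convincing its wording. Real checks run across many prompts on nonlinear models, so a single toy ablation proves little on its own.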

Key Takeaways

- ✓ Natural Language Autoencoders translate internal signals, not private conscious thoughts.

- ✓ The work could improve debugging, audits, and enterprise model oversight.

- ✓ NLAs complement sparse autoencoders and attribution methods rather than replace them.

- ✓ The headline is exciting, but the limits matter just as much.

- ✓ For production AI, interpretability is becoming an operations issue, not theory.