⚡ Quick Answer

ItinBench, in plain English: a new evaluation framework that tests how well large language models plan across several cognitive dimensions, rather than on narrow reasoning tasks alone. For builders, that means better signals about which models can support planning-heavy agents in messy real-world settings.

ItinBench is more than another entry on the benchmark list. It points to a larger issue: for two years, we've asked large language models to behave like planners while grading them like quiz takers. That's the mismatch. The new arXiv paper, 2603.19515v1, argues that planning spans several cognitive dimensions, and we'd say that's the frame agent builders have needed. If you're building travel agents, workflow copilots, or operations software, a benchmark matters only when it helps you pick models that can actually plan when conditions get messy.

What is ItinBench, in plain language?

In plain language, ItinBench is a planning evaluation that tests several cognitive dimensions at once instead of squeezing planning into one reasoning score. That's a real shift. Older benchmarks often isolate one task type, like arithmetic reasoning or constrained search, and then imply broader competence than the setup can honestly support. That's been a poor habit in AI evaluation. The ItinBench framing suggests a more grounded view: real planning mixes sequencing, constraint handling, memory, adaptation, and tradeoff management. Think of a travel assistant building a multi-city itinerary for Paris, Rome, and Berlin under a budget cap, with layover rules and a user's preference for museums over nightlife. That's not one skill. We'd argue that benchmarks flattening all of that into a single pass/fail answer tell buyers almost nothing about production readiness. The practical draw of ItinBench is simple: it treats planning as a composite capability, which sits much closer to how agents break in the wild.
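To make the composite idea concrete, here is a minimal Python sketch of the Paris, Rome, and Berlin request. It assumes a hypothetical schema (`Leg`, `TripRequest`) and a hypothetical `check_plan` helper with illustrative costs; none of this is ItinBench's actual task format or scoring code. The point is only that one plan can produce one verdict per dimension rather than a single pass/fail.

```python
from dataclasses import dataclass


@dataclass
class Leg:
    """One stop in a proposed itinerary (hypothetical schema, not ItinBench's)."""
    city: str
    day: int                    # day of the trip on which this leg starts
    cost: float                 # illustrative cost in the user's currency
    category: str               # main activity type, e.g. "museum" or "nightlife"
    layover_hours: float = 0.0  # connection time needed to reach this city


@dataclass
class TripRequest:
    """The user's request: cities, budget cap, layover rule, and preference."""
    cities: list[str]
    budget_cap: float
    max_layover_hours: float
    preferred_category: str


def check_plan(req: TripRequest, plan: list[Leg]) -> dict[str, bool]:
    """Score each planning dimension separately instead of returning one pass/fail."""
    visited = {leg.city for leg in plan}
    preferred = sum(leg.category == req.preferred_category for leg in plan)
    return {
        # every requested city appears somewhere in the plan
        "coverage": set(req.cities) <= visited,
        # legs are in strictly increasing day order
        "ordering": all(a.day < b.day for a, b in zip(plan, plan[1:])),
        # total spend stays under the cap
        "budget": sum(leg.cost for leg in plan) <= req.budget_cap,
        # no connection exceeds the layover rule
        "layovers": all(leg.layover_hours <= req.max_layover_hours for leg in plan),
        # at least half the legs match the user's stated preference
        "preference": 2 * preferred >= len(plan),
    }


req = TripRequest(["Paris", "Rome", "Berlin"], budget_cap=1500.0,
                  max_layover_hours=4.0, preferred_category="museum")
plan = [
    Leg("Paris", day=1, cost=400.0, category="museum"),
    Leg("Rome", day=3, cost=450.0, category="museum", layover_hours=2.5),
    Leg("Berlin", day=5, cost=500.0, category="nightlife", layover_hours=6.0),
]
result = check_plan(req, plan)
print(result)
# {'coverage': True, 'ordering': True, 'budget': True, 'layovers': False, 'preference': True}
```

A single verdict would simply mark this plan as failed; the per-dimension result shows that only the Berlin layover breaks the rules.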

Which cognitive dimensions does ItinBench measure, and why do they matter?

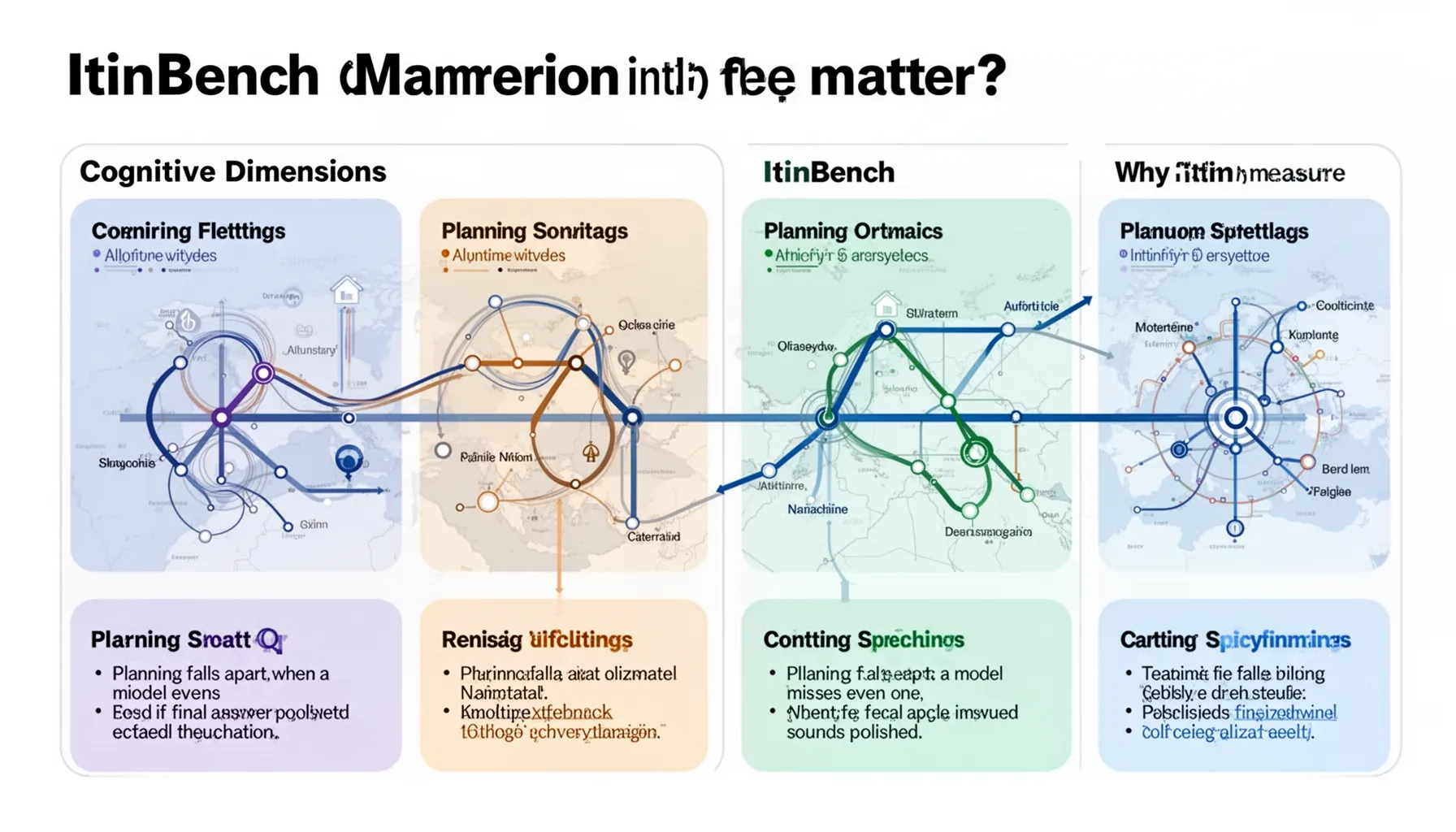

The cognitive dimensions in ItinBench matter because planning falls apart when a model misses even one of them, however polished the final answer sounds. In builder-friendly terms, those dimensions likely include goal decomposition, constraint satisfaction, ordering, state tracking, tradeoff evaluation, and adaptation to changing information. That's the taxonomy teams should care about. A model may write elegant prose and still lose track of state after six itinerary changes, or meet budget constraints while blowing past time windows. We've seen similar gaps in enterprise pilots with GPT-4-class models and open models like Llama, where polished language hides shaky execution logic. For example, a support workflow agent built on LangChain or CrewAI might handle task decomposition well yet collapse when dependencies shift mid-run. If a benchmark doesn't separate these dimensions, it conceals the exact failure modes product teams need to inspect. Once the dimensions are labeled clearly, prompt engineering and tool selection become far more precise.
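State tracking is the dimension that most often slips silently. One way a team might probe it, sketched here under stated assumptions, is to replay the revision log into a ground-truth itinerary and diff it against what the agent reports. The `add`/`remove` revision format and the `apply_revisions` and `state_drift` helpers are hypothetical, and the agent output is invented for illustration.

```python
def apply_revisions(initial: list[str], revisions: list[tuple[str, str]]) -> list[str]:
    """Replay a (hypothetical) revision log to get the ground-truth list of stops."""
    state = list(initial)
    for op, city in revisions:
        if op == "add":
            state.append(city)
        elif op == "remove":
            state.remove(city)  # raises ValueError if the city is absent
        else:
            raise ValueError(f"unknown revision op: {op!r}")
    return state


def state_drift(initial: list[str], revisions: list[tuple[str, str]],
                reported: list[str]) -> dict[str, object]:
    """Compare the stops an agent reports against the replayed ground truth."""
    truth = apply_revisions(initial, revisions)
    shared_reported = [c for c in reported if c in truth]
    shared_truth = [c for c in truth if c in reported]
    return {
        "missing": [c for c in truth if c not in reported],  # additions the agent dropped
        "stale": [c for c in reported if c not in truth],    # removals the agent ignored
        "order_ok": shared_reported == shared_truth,          # relative order of shared stops
    }


revisions = [("add", "Berlin"), ("remove", "Rome"), ("add", "Vienna"),
             ("add", "Prague"), ("remove", "Paris"), ("add", "Rome")]
# Invented agent output after six revisions: it forgot one removal and one addition.
reported = ["Paris", "Berlin", "Vienna", "Rome"]
drift = state_drift(["Paris", "Rome"], revisions, reported)
print(drift)  # {'missing': ['Prague'], 'stale': ['Paris'], 'order_ok': True}
```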

How ItinBench compares with existing LLM benchmarks, and why it changes model evaluation

The comparison with existing LLM benchmarks matters because many older tests fixate on static reasoning or score planning inside toy environments that barely resemble user-facing agents. That's a bigger shift than it sounds. Benchmarks such as GSM8K, MMLU, and even many agent-style tests tell you useful things, but not enough about multi-constraint planning behavior. They weren't built for that. A comparison table would likely place ItinBench closer to operational agent evaluation: broader than math benchmarks, less synthetic than some sandbox planning tasks, and more actionable for deployment choices. Consider HotpotQA or BIG-bench-style tasks. They can reveal retrieval or reasoning patterns, yet they rarely expose how a model handles cascading schedule conflicts across multiple revisions. That's a different muscle. We'd also compare ItinBench with domain-specific planning evaluations in travel or robotics, which often measure success in tighter settings but don't generalize well across enterprise use cases. So if the paper delivers clear dimension-level scoring, it could end up more useful to builders than many better-known leaderboards. Brand recognition in benchmarks is overrated. Diagnostic value is what counts.

How to evaluate AI agent planning with ItinBench scores

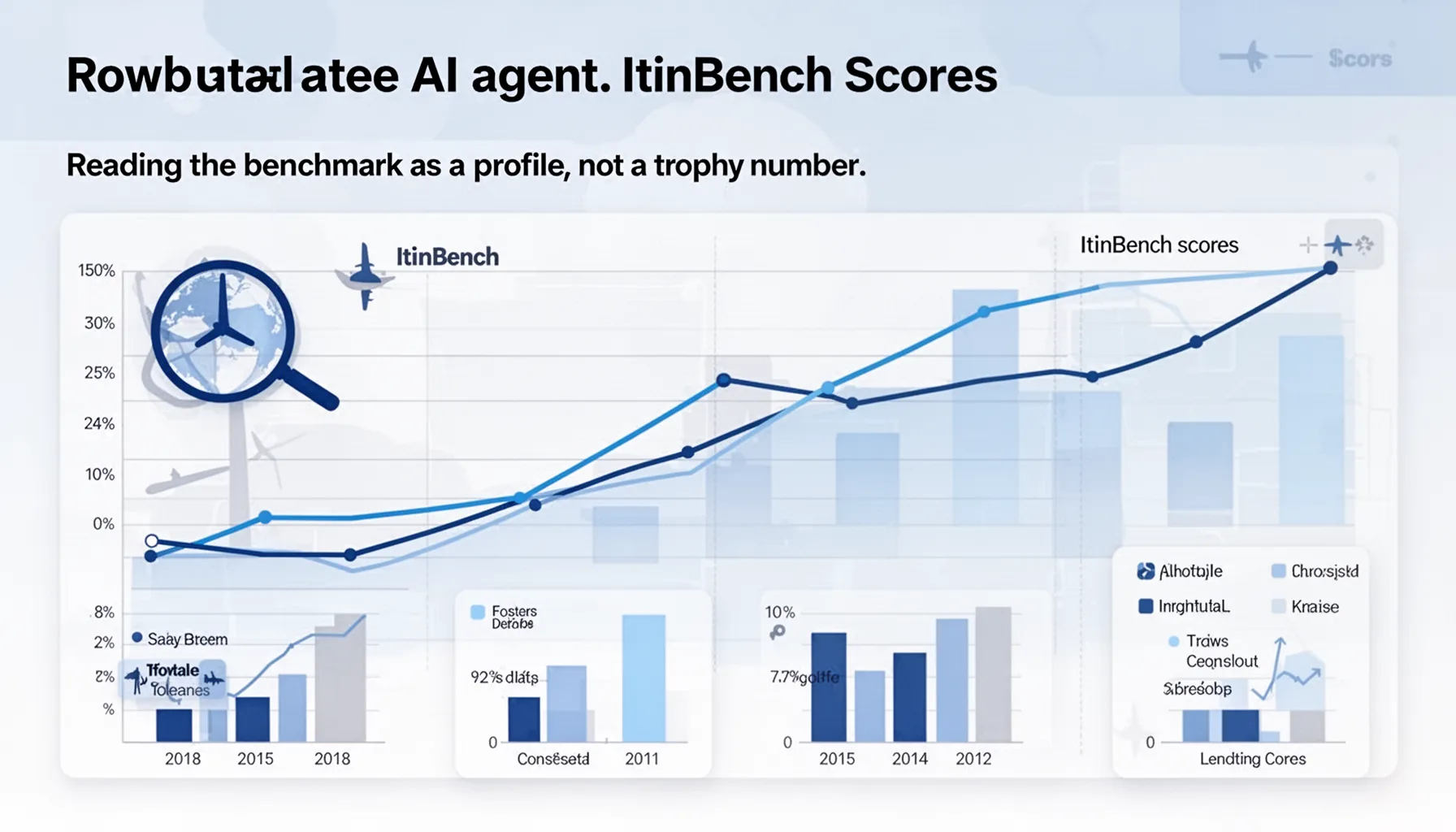

Evaluating agent planning with ItinBench scores starts with reading the benchmark as a profile, not a trophy number. That's the practical lens. One average score can hide whether a model excels at decomposition but fails at revision, or tracks constraints well but makes poor tradeoffs under ambiguity. That's how bad purchases happen. If you're choosing among models from OpenAI, Anthropic, and Google, or open models served through vLLM, look first at which cognitive dimensions match your workflow's failure cost. A scheduling copilot in healthcare operations, say at Mayo Clinic, needs tighter constraint adherence than creative flexibility. A consumer trip planner might tolerate an odd route now and then, but not repeated date errors or missed visa constraints. We'd argue builders should pair benchmark results with prompt variants, tool-use scaffolds, and small domain-specific tests. If ItinBench supports that workflow, it becomes more than a paper. It becomes procurement infrastructure.
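The profile-versus-average point is easy to show with made-up numbers. In this sketch the two models and their per-dimension scores are hypothetical, not ItinBench results, and `profile` is an illustrative helper; both models average 0.78, yet their weakest dimensions differ.

```python
from statistics import mean

# Hypothetical per-dimension scores in [0, 1]; not real ItinBench results.
scores = {
    "model_a": {"decomposition": 0.92, "constraints": 0.88, "revision": 0.52, "tradeoffs": 0.80},
    "model_b": {"decomposition": 0.78, "constraints": 0.80, "revision": 0.80, "tradeoffs": 0.74},
}


def profile(dims: dict[str, float]) -> dict[str, object]:
    """Summarize a score profile instead of collapsing it to one number."""
    weakest = min(dims, key=dims.get)
    values = list(dims.values())
    return {
        "mean": round(mean(values), 2),                     # the "trophy number"
        "weakest": weakest,                                 # where the model is most likely to break
        "weakest_score": dims[weakest],
        "spread": round(max(values) - min(values), 2),      # how uneven the profile is
    }


for name, dims in scores.items():
    print(name, profile(dims))
```

Reading only the mean, the two models look interchangeable; the profile shows that `model_a` is the riskier choice for any workflow where revisions are frequent.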

Step-by-Step Guide

Step 1: Define your planning workload

Write down the actual planning task your agent must handle, including constraints, revisions, and success criteria. Too many teams evaluate models on generic reasoning and then wonder why production fails. A travel planner, claims workflow agent, and procurement assistant each stress different planning dimensions. Be specific before you compare scores.
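One lightweight way to force that specificity is to write the workload down as structured data. The `PlanningWorkload` class below is a hypothetical sketch, not part of ItinBench, and the constraint and revision strings are illustrative; its `gaps` method simply flags which parts of the spec are still empty.

```python
from dataclasses import dataclass, field


@dataclass
class PlanningWorkload:
    """A written-down planning task (hypothetical structure for illustration)."""
    name: str
    goal: str
    constraints: list[str] = field(default_factory=list)
    expected_revisions: list[str] = field(default_factory=list)
    success_criteria: list[str] = field(default_factory=list)

    def gaps(self) -> list[str]:
        """List the parts of the spec that are still empty."""
        missing = []
        if not self.constraints:
            missing.append("constraints")
        if not self.expected_revisions:
            missing.append("expected_revisions")
        if not self.success_criteria:
            missing.append("success_criteria")
        return missing


travel = PlanningWorkload(
    name="travel_planner",
    goal="Multi-city itinerary for Paris, Rome, and Berlin",
    constraints=["total cost under budget cap", "layovers under 4 hours", "valid visa dates"],
    expected_revisions=["user swaps city order", "flight cancelled mid-plan"],
    success_criteria=["all constraints hold after every revision"],
)
claims = PlanningWorkload(name="claims_agent", goal="Route an insurance claim to resolution")
print(travel.gaps())  # []
print(claims.gaps())  # ['constraints', 'expected_revisions', 'success_criteria']
```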

Step 2: Map tasks to cognitive dimensions

Break your workload into dimensions such as decomposition, constraint tracking, sequencing, adaptation, and tradeoff handling. This gives you a clear lens for reading ItinBench results. It also prevents teams from overvaluing a model that shines in only one area. Planning is a bundle of skills, not one trait.
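A rough way to turn that mapping into something you can apply to benchmark results is to count how often each dimension appears across your tasks. The task list, dimension names, and `dimension_weights` helper below are hypothetical, and frequency is only a crude proxy for importance; teams with better data may prefer to weight by failure cost instead.

```python
from collections import Counter

# Hypothetical mapping from workload tasks to the dimensions they stress.
task_dimensions = {
    "build initial itinerary": ["decomposition", "sequencing", "constraint_tracking"],
    "apply user change": ["adaptation", "constraint_tracking"],
    "rebook after cancellation": ["adaptation", "sequencing", "tradeoff_handling"],
    "stay under budget": ["constraint_tracking", "tradeoff_handling"],
}


def dimension_weights(mapping: dict[str, list[str]]) -> dict[str, float]:
    """Weight each dimension by how many tasks stress it, normalized to sum to 1."""
    counts = Counter(dim for dims in mapping.values() for dim in dims)
    total = sum(counts.values())
    # most_common() orders dimensions from most to least frequently stressed
    return {dim: round(n / total, 3) for dim, n in counts.most_common()}


weights = dimension_weights(task_dimensions)
print(weights)
```

Here constraint tracking carries the most weight because three of the four tasks depend on it, which is a signal not to pick a model on decomposition strength alone.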

Step 3: Compare dimension-level benchmark results

Look beyond the headline ranking and inspect where each model wins or loses. A model with the best aggregate score may still be weak on the single dimension your product cares about most. That's common. And it's why dimension-level reporting matters more than leaderboard theater.
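The check described in this step can be sketched in a few lines. The scores are hypothetical placeholders, not published ItinBench numbers, and the helper names are illustrative. The sketch finds the aggregate leader, the winner on each dimension, and how far the leader trails wherever it is not the winner.

```python
# Hypothetical dimension-level scores in [0, 1]; not real ItinBench numbers.
Scores = dict[str, dict[str, float]]
results: Scores = {
    "model_a": {"decomposition": 0.91, "constraint_tracking": 0.70, "sequencing": 0.88, "adaptation": 0.86},
    "model_b": {"decomposition": 0.80, "constraint_tracking": 0.85, "sequencing": 0.82, "adaptation": 0.78},
    "model_c": {"decomposition": 0.75, "constraint_tracking": 0.80, "sequencing": 0.79, "adaptation": 0.84},
}


def aggregate_leader(res: Scores) -> str:
    """Model with the highest unweighted mean, i.e. the headline ranking."""
    return max(res, key=lambda m: sum(res[m].values()) / len(res[m]))


def dimension_winners(res: Scores) -> dict[str, str]:
    """Best model on each individual dimension."""
    dims = next(iter(res.values())).keys()
    return {d: max(res, key=lambda m: res[m][d]) for d in dims}


def leader_gaps(res: Scores) -> dict[str, float]:
    """How far the aggregate leader trails on dimensions it does not win."""
    leader = aggregate_leader(res)
    return {
        d: round(res[winner][d] - res[leader][d], 2)
        for d, winner in dimension_winners(res).items()
        if winner != leader
    }


print(aggregate_leader(results))   # model_a
print(dimension_winners(results))
print(leader_gaps(results))        # {'constraint_tracking': 0.15}
```

With these made-up numbers, `model_a` tops the average but trails `model_b` by 0.15 on constraint tracking, exactly the kind of gap a headline ranking hides.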

Step 4: Test prompts and scaffolds against the benchmark

Run the same model with different prompt structures, tool-calling rules, and memory strategies. Planning performance often shifts a lot based on scaffolding, especially for itinerary-style tasks. If ItinBench is well designed, it should surface those differences. That makes it valuable for engineering, not just research.
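A minimal scaffold-comparison harness might look like the sketch below. It assumes a model is any function from prompt text to answer text; `stub_model` is a deterministic stand-in for a real API call, and the scaffold templates, test cases, and helper names are hypothetical. Swapping in a real client would only change the `model` argument.

```python
from typing import Callable

# Assumption: a "model" is any function that maps prompt text to answer text.
Model = Callable[[str], str]
# Assumption: a test case is a task string plus a checker for the answer.
Case = tuple[str, Callable[[str], bool]]

# Hypothetical scaffold templates; {task} is filled in per case.
SCAFFOLDS = {
    "plain": "{task}",
    "numbered_steps": "Break the task into numbered steps, then answer.\n{task}",
    "constraints_first": "List every constraint before planning.\n{task}",
}


def pass_rate(model: Model, template: str, cases: list[Case]) -> float:
    """Fraction of cases whose answer passes its checker under one scaffold."""
    passed = sum(check(model(template.format(task=task))) for task, check in cases)
    return passed / len(cases)


def compare_scaffolds(model: Model, cases: list[Case]) -> dict[str, float]:
    """Run the same model and cases under every scaffold."""
    return {name: pass_rate(model, tmpl, cases) for name, tmpl in SCAFFOLDS.items()}


def stub_model(prompt: str) -> str:
    # Deterministic stand-in for a real model call: it only mentions the budget
    # when the prompt asks it to list constraints first.
    if "List every constraint" in prompt:
        return "Paris -> Rome, total within budget"
    return "Paris -> Rome"


cases: list[Case] = [
    ("Plan Paris then Rome under a 900 budget.", lambda a: "within budget" in a),
    ("Plan Paris then Rome under a 700 budget.", lambda a: "within budget" in a),
]
print(compare_scaffolds(stub_model, cases))
```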

Step 5: Validate with domain-specific scenarios

Use ItinBench as a filter, then run a smaller set of cases from your own product domain. Benchmark wins don't guarantee deployment wins. A logistics agent and a travel planner may share planning mechanics yet differ sharply in tolerance for error. Your internal tests close that gap.
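Date and visa errors are a good example of domain-specific checks that a general benchmark may not cover. The sketch below assumes a simple stop format of `(city, country, arrive, depart)` and a hypothetical visa window per country; the `date_errors` helper, the dates, and the Turkey window are invented for illustration and do not reflect real visa rules.

```python
from datetime import date

# Assumed stop format: (city, country, arrive, depart).
Stop = tuple[str, str, date, date]


def date_errors(stops: list[Stop], visa_windows: dict[str, tuple[date, date]]) -> list[str]:
    """Return human-readable date and visa problems in an itinerary."""
    errors = []
    for city, country, arrive, depart in stops:
        if depart < arrive:
            errors.append(f"{city}: departs before it arrives")
        window = visa_windows.get(country)
        # A stay must start and end inside the country's (hypothetical) visa window.
        if window and not (window[0] <= arrive and depart <= window[1]):
            errors.append(f"{city}: stay falls outside the {country} visa window")
    # Consecutive stops must not overlap in time.
    for (prev_city, _, _, prev_depart), (city, _, arrive, _) in zip(stops, stops[1:]):
        if arrive < prev_depart:
            errors.append(f"{city}: arrival overlaps the {prev_city} stay")
    return errors


stops = [
    ("Paris", "France", date(2025, 6, 1), date(2025, 6, 4)),
    ("Rome", "Italy", date(2025, 6, 3), date(2025, 6, 6)),
    ("Istanbul", "Turkey", date(2025, 6, 7), date(2025, 6, 12)),
]
# Invented visa window, for illustration only.
visa_windows = {"Turkey": (date(2025, 6, 1), date(2025, 6, 10))}
errors = date_errors(stops, visa_windows)
for e in errors:
    print(e)
```

A consumer travel product might require zero such errors per case, while a lower-stakes internal tool could tolerate a few; that threshold is the error tolerance this step asks you to set per domain.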

Step 6: Choose models by failure cost

Pick the model whose weak spots create the least harm in your workflow, not just the highest average score. In regulated or operations-heavy settings, a cautious model with stronger constraint handling may beat a more eloquent one. This is where benchmark reading becomes product strategy. And it saves teams from expensive surprises later.
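Choosing by failure cost can be sketched as a hard limit on the dimensions you cannot compromise on, followed by a lowest-expected-cost pick among the models that pass. All rates, costs, limits, and model names below are hypothetical, and `expected_cost` and `choose` are illustrative helpers rather than part of ItinBench.

```python
from typing import Optional

# Hypothetical per-dimension failure rates (fraction of benchmark cases failed).
failure_rates = {
    "eloquent_model": {"constraint_tracking": 0.12, "tradeoffs": 0.05, "tone": 0.01},
    "cautious_model": {"constraint_tracking": 0.03, "tradeoffs": 0.10, "tone": 0.08},
}
# Hypothetical cost of one failure in each dimension, in arbitrary units.
failure_cost = {"constraint_tracking": 20.0, "tradeoffs": 40.0, "tone": 5.0}
# Hypothetical hard limit for a regulated workflow: at most 5% constraint-tracking failures.
max_rate = {"constraint_tracking": 0.05}


def expected_cost(rates: dict[str, float], costs: dict[str, float]) -> float:
    """Expected harm per case: failure rate times cost, summed over dimensions."""
    return sum(rates[d] * costs[d] for d in costs)


def choose(models: dict[str, dict[str, float]], costs: dict[str, float],
           limits: dict[str, float]) -> Optional[str]:
    """Drop models that break any hard limit, then pick the lowest expected cost."""
    eligible = {
        name: rates for name, rates in models.items()
        if all(rates[d] <= cap for d, cap in limits.items())
    }
    if not eligible:
        return None
    return min(eligible, key=lambda name: expected_cost(eligible[name], costs))


print(choose(failure_rates, failure_cost, {}))        # eloquent_model
print(choose(failure_rates, failure_cost, max_rate))  # cautious_model
```

Without the limit, the lower expected cost favors `eloquent_model`; with a 5% cap on constraint-tracking failures, only `cautious_model` qualifies. The hard limit encodes a requirement that an expected-cost average alone would trade away.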

Key Takeaways

- ✓ ItinBench tries to measure planning as a multi-skill behavior rather than as a single benchmark score.

- ✓ That makes it more useful for agent builders comparing models for real task execution.

- ✓ The benchmark highlights cognitive dimensions that earlier planning tests often blur or skip.

- ✓ Scores should guide prompting and tool-use choices, not just leaderboard bragging rights.

- ✓ A good planning model needs structure, memory, tradeoff handling, and error recovery.