⚡ Quick Answer

Model spec midtraining is an intermediate training stage between pretraining and fine-tuning that aims to teach broader behavioral rules before narrow alignment tuning. Anthropic’s core bet is that models generalize alignment better when those rules are learned earlier in the training stack, not bolted on at the end.

"Model spec midtraining" sounds like a small technical phrase. Then you place it inside the training stack, and things get interesting quickly. Anthropic isn't merely naming a fresh alignment technique; it's suggesting a different point where alignment knowledge may actually take hold inside the model. That's a bigger shift than it sounds. And if the idea holds up, it could reshape how teams think about consistency, safety, and instruction-following in production LLMs.

What is model spec midtraining?

Model spec midtraining adds a training step between pretraining and fine-tuning so that alignment behavior carries over more broadly. The core idea isn't complicated: if you wait until post-training to teach behavioral rules, the model may reproduce those rules in familiar settings without absorbing them in a way that transfers to new ones. Anthropic's framing matters because it treats the model spec as something to teach before the final rounds of instruction tuning and preference optimization. In practice, this stage likely exposes the model to behavioral criteria and structured examples earlier, while its representations are still shifting in deeper ways. Similar threads have shown up in nearby research on curriculum design and intermediate adaptation, but Anthropic ties the concept directly to alignment transfer. We'd argue that's more than wording; it points to a claim about where generalization actually gets built. Claude, Anthropic's own model, is the natural concrete example.

Why does traditional alignment training fail to generalize?

Traditional alignment training often fails to generalize because late-stage tuning can teach surface behavior without changing the model's deeper representations enough. A model may learn that certain refusal styles or helpful tones score well during training, yet still act inconsistently when prompts turn ambiguous, adversarial, or simply sit far outside the tuning distribution. OpenAI, Anthropic, and academic labs have all documented reward hacking, over-refusal, and brittle instruction-following across post-training setups. So the issue isn't that fine-tuning never works. It's that fine-tuning can produce behavior that looks aligned inside narrow eval bands and then slips once you move beyond them. Consider an enterprise support bot. It may follow policy perfectly on templated customer tickets, then wobble when one request mixes legal, technical, and emotional context. That's a real deployment problem. Midtraining aims to shrink that gap by teaching some behavioral patterns earlier, where abstraction seems more likely to stick.
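One way to make the gap in the support-bot example concrete is to compare policy pass rates on templated cases against pass rates on mixed-context cases. The sketch below is illustrative only: the function names `pass_rate` and `generalization_gap` and the sample results are hypothetical and do not come from Anthropic or from any published eval.

```python
from statistics import mean


def pass_rate(results):
    """Return the fraction of cases judged policy-compliant.

    `results` is a list of booleans, one per evaluated prompt.
    """
    if not results:
        raise ValueError("results must contain at least one case")
    return mean(1.0 if ok else 0.0 for ok in results)


def generalization_gap(in_distribution, out_of_distribution):
    """Pass rate on familiar cases minus pass rate on unfamiliar cases.

    A value near 0 suggests behavior transfers; a large positive value
    suggests behavior that only holds inside the tuning distribution.
    """
    return pass_rate(in_distribution) - pass_rate(out_of_distribution)


# Hypothetical results, invented for illustration:
# templated tickets vs. requests mixing legal, technical, and emotional context.
templated = [True] * 10
mixed = [True, False, True, True, False, True, False, True, True, False]

gap = generalization_gap(templated, mixed)
print(f"generalization gap: {gap:.2f}")
```

Tracking this single number before and after a training change is a crude but readable signal. It says nothing about why the gap exists, only whether it shrank.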

How does model spec midtraining fit between pretraining and fine-tuning?

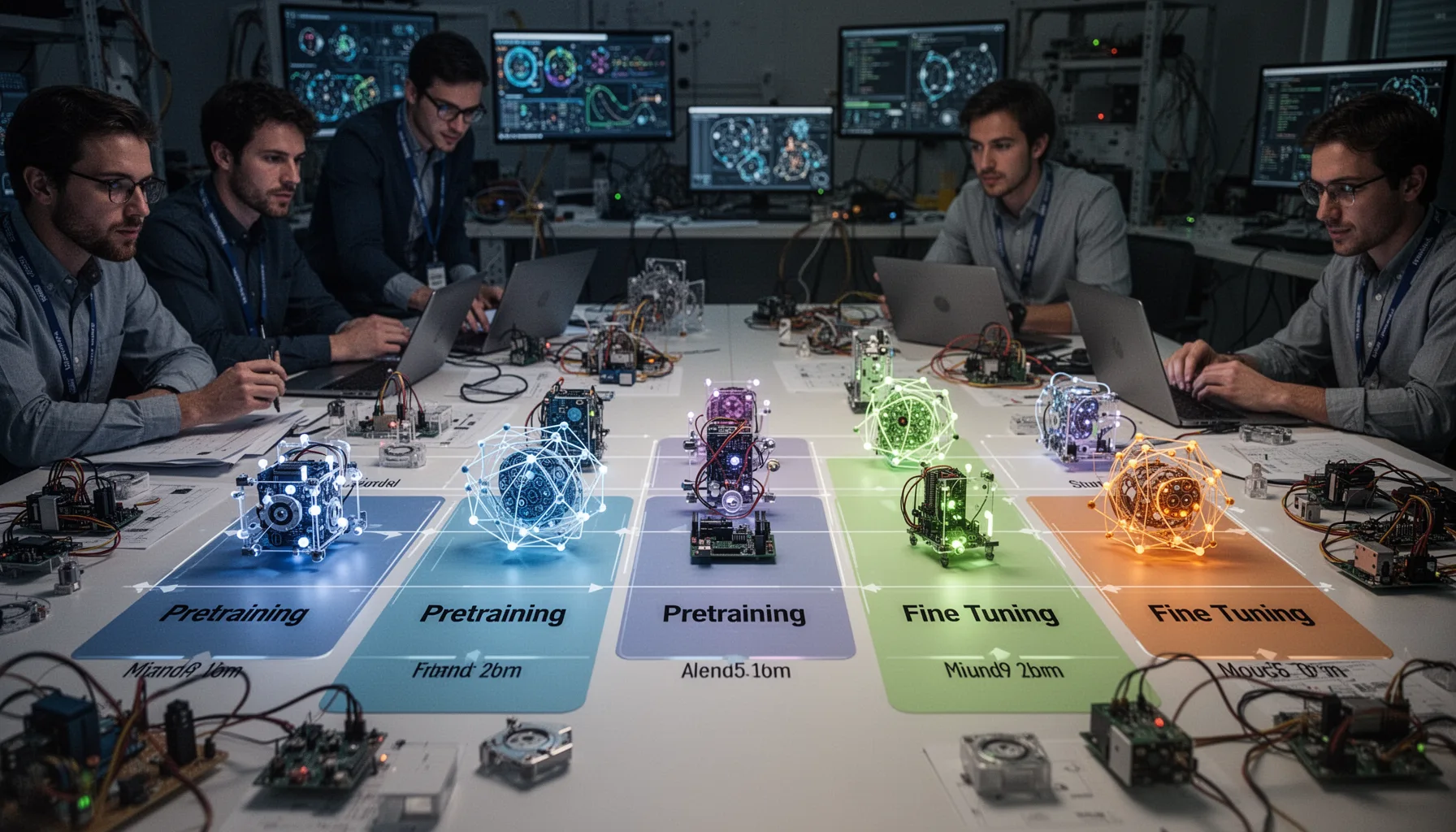

Model spec midtraining sits between pretraining and fine-tuning as an intermediate stage aimed at behavioral generalization, not raw world knowledge or final task adaptation. Pretraining teaches broad statistical structure from huge corpora. Fine-tuning and RLHF then shape task behavior, persona, and preference alignment. Midtraining, in Anthropic's account, seems to occupy the overlooked middle. It may let the model form internal concepts about acceptable behavior before the tighter incentives of post-training push it toward benchmark-friendly answers. DeepMind and other labs have explored intermediate adaptation in other forms, and machine learning research has long suggested that stage order can change which representations survive. Training stacks aren't just pipelines. They're opinionated sequences. Change the sequence, and you may alter how the model generalizes under pressure.

How could model spec midtraining affect safety and reliability in production models?

Model spec midtraining could improve production safety and reliability by making behavioral rules hold up more consistently across unfamiliar prompts and domains. If the method works, enterprises may see fewer cases where a model behaves well in certification tests but drifts during edge-case interactions. Consistency matters more than benchmark charm when you're deploying systems in healthcare triage, internal copilots, or regulated customer operations. NIST's AI Risk Management Framework emphasizes governance, measurement, and reliable behavior, and midtraining could support those goals if it cuts post-training brittleness. For example, a legal-assist model might hold firmer boundaries around unverifiable claims even when users ask indirectly or bundle several tasks into one prompt. But teams shouldn't mistake a better training stage for a complete safety answer. You still need evals, monitoring, red teaming, and policy controls around the model.

What should developers and enterprises watch next on model spec midtraining?

Developers and enterprises should watch for evidence that model spec midtraining improves out-of-distribution behavior, not just polished internal demos. The right questions are practical: does the model stay steadier across prompt wording, domain shifts, multilingual use, and long-horizon agent tasks? And can anyone measure those gains independently? We want benchmark design, ablation studies, and deployment reports that isolate the effect of midtraining from later RLHF or constitutional tuning. Anthropic's move probably points to a broader industry turn toward redesigning the training stack itself instead of endlessly tweaking the final alignment layer. For enterprise buyers, that means behavior claims may increasingly depend on upstream stages they can't directly see. So vendor evaluation should probe training methodology, not only public benchmark scores. Ask for a concrete case, such as how Claude handles the same policy prompt across several languages.
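The question of whether a model stays steady across prompt wording can be turned into a simple score. Group prompts that express the same request in different wordings or languages, then measure how often the model's behavior matches the most common behavior in each group. This is a minimal sketch under stated assumptions: the name `consistency_by_group`, the behavior labels, and the sample records are all hypothetical, and labeling model outputs (for example as "refuse" or "comply") is assumed to happen elsewhere.

```python
from collections import Counter, defaultdict


def consistency_by_group(records):
    """Score behavioral consistency within each group of equivalent prompts.

    `records` is an iterable of (group_id, behavior_label) pairs, where all
    prompts sharing a group_id are rewordings of the same request.
    Returns {group_id: share of responses matching the most common label}.
    """
    labels_by_group = defaultdict(list)
    for group_id, label in records:
        labels_by_group[group_id].append(label)

    scores = {}
    for group_id, labels in labels_by_group.items():
        # Count of the single most frequent behavior in this group.
        top_count = Counter(labels).most_common(1)[0][1]
        scores[group_id] = top_count / len(labels)
    return scores


# Hypothetical labeled outputs, invented for illustration.
records = [
    ("refund_policy", "refuse"),
    ("refund_policy", "refuse"),
    ("refund_policy", "comply"),  # one rewording flips the behavior
    ("privacy_request", "refuse"),
    ("privacy_request", "refuse"),
]

scores = consistency_by_group(records)
for group_id, score in scores.items():
    print(f"{group_id}: {score:.2f}")
```

A score of 1.0 means every rewording produced the same behavior. The metric measures agreement, not correctness, so it should be read alongside a pass-rate check against the actual policy.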

Key Takeaways

- ✓ Model spec midtraining sits between pretraining and fine-tuning in the model pipeline.

- ✓ Its goal is broader alignment generalization, not merely nicer benchmark behavior.

- ✓ Anthropic treats alignment as a representation-learning issue, not a late-stage patch.

- ✓ This could shape safety evals, enterprise trust, and claims about behavior consistency.

- ✓ Developers should read it as a training-stack shift, not just branding.