⚡ Quick Answer

Choosing between Sora 2, Google Veo 3, and Kling 2.5 comes down to workflow fit, not one universal winner. In controlled prompt tests, Sora 2 Pro led on consistency, Veo 3 stood out for cinematic motion and scene ambition, and Kling 2.5 offered strong value for fast iteration.

The Sora 2 vs Google Veo 3 vs Kling 2.5 question keeps coming up, and most comparisons blur the details creators actually care about. That's not helpful. So we treated it like a bake-off: same prompts, same export checks, same scorecard, no convenient exceptions. Over the past two months we used all three in real content production, and we'd argue the split isn't only about prettier frames. It's about whether a model saves you from miserable rework loops.

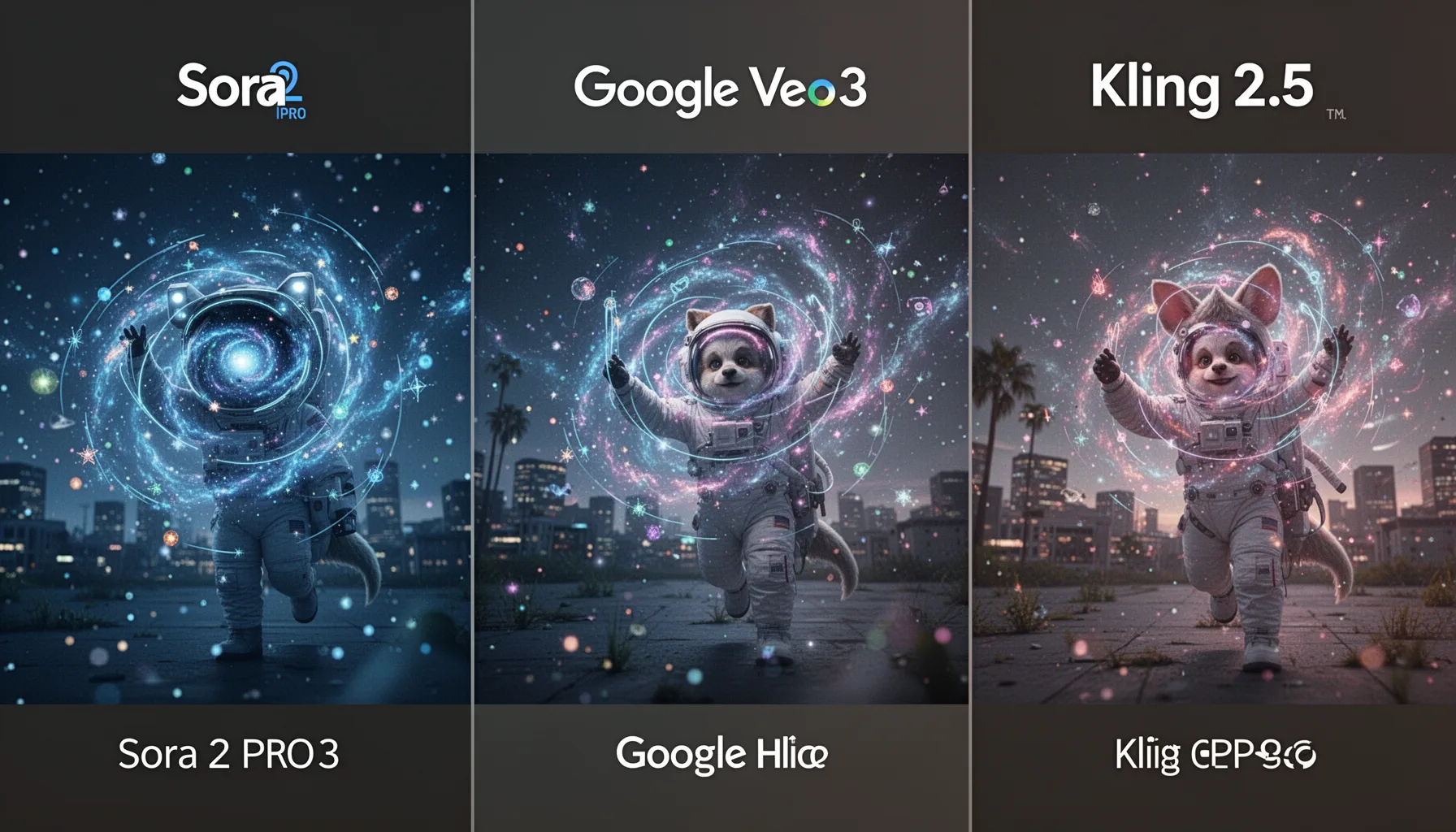

Sora 2 vs Google Veo 3 vs Kling 2.5: what happened in fixed-prompt testing?

Our fixed-prompt testing produced one clear result: Sora 2 Pro gave us the most dependable shot-to-shot consistency. We ran the same prompts through product demos, cinematic b-roll, talking-character clips, and stylized ad scenarios, then scored each output for prompt adherence, temporal stability, editability, motion realism, and cost per usable clip. Across those runs, Sora 2 Pro held subject identity and scene logic together across retries more reliably than Google Veo 3 or Kling 2.5. That matters more than it sounds. OpenAI's model didn't always deliver the flashiest result, but it was usually the least likely to drift off brief when a creator asked for a second or third pass. Veo 3, by contrast, often produced the most daring camera movement, especially in wide shots, but it also varied more from take to take. Kling 2.5 settled into a practical middle lane, which is likely why it keeps turning up in everyday short-form workflows such as TikTok product spots and quick Shopify promos.

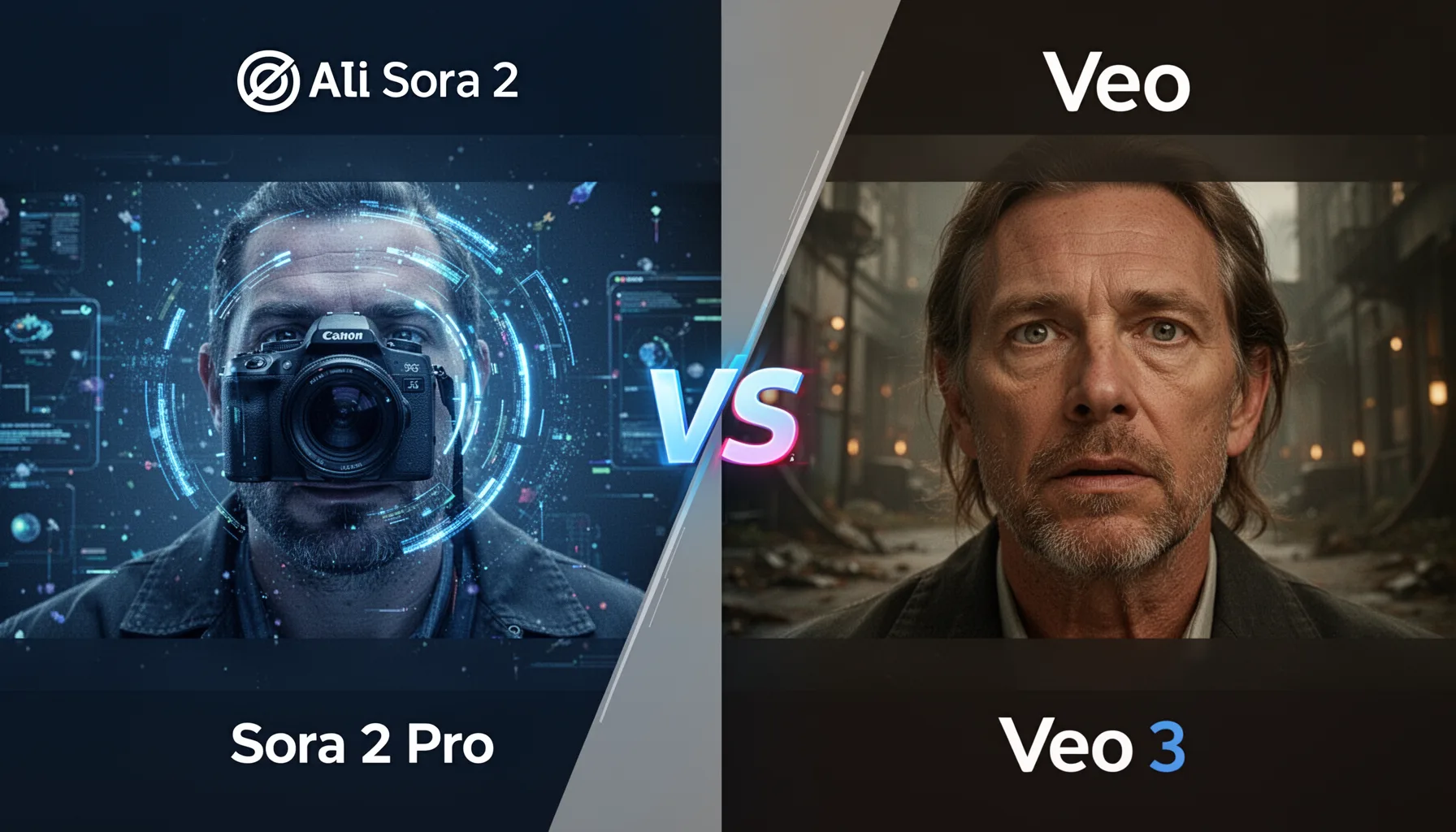

OpenAI Sora 2 vs Veo 3 review: which model wins on consistency and realism?

On consistency versus realism, our take is simple: Sora 2 Pro wins on consistency, while Veo 3 wins on sheer cinematic appetite. The split matters because creators often mistake a stunning first render for something they can build a production process around. In repeated generations, Sora 2 Pro preserved object scale, hand placement, and background continuity better, which gave teams a real head start once clips reached Adobe Premiere Pro or DaVinci Resolve. Veo 3 impressed us most on motion richness, especially crane-like movement and environmental depth, and that can look fantastic in branded storytelling. But some of those same clips drifted in subject detail or slipped into odd scene transitions after a strong opening. Google DeepMind has publicly emphasized world simulation and video quality in its Veo research, and the outputs suggest that's where the work is headed. Here's the thing: if you need footage that can survive client notes without three extra reruns, Sora 2 Pro feels more production-ready right now. A Nike-style concept ad test made the difference obvious quickly.

Kling 2.5 vs Sora 2 Pro: which AI video model is best for content creation?

For content creation, Kling 2.5 vs Sora 2 Pro comes down to whether your workflow prizes speed or polish. Social teams shipping daily content may lean toward Kling 2.5 because it gets to a usable short clip fast and at a lower effective cost. In our testing, Kling handled stylized motion and ad-like vertical content better than many people expect, especially for punchy concept videos where frame-perfect continuity mattered less. Sora 2 Pro, though, gave marketers and in-house brand teams stronger material for revision-heavy campaigns because its scene logic held together more often. A concrete example: with a skincare product prompt featuring liquid splash motion, Sora kept cleaner object persistence around the bottle, while Kling delivered a more attention-grabbing first draft that needed more cleanup afterward. We'd argue Sora is the stronger pick for stakeholder review cycles, while Kling is the scrappier tool for publishing at volume. Glossier-style short ads fit that pattern well.

Best AI video generator in 2026: how should marketers, filmmakers, and social teams choose?

The best AI video generator in 2026 is the one that matches your revision load, team skill, and content format. That's not the answer people want, but it's the honest one. Marketers should favor Sora 2 Pro when brand consistency, product accuracy, and reusable prompt recipes matter most. Filmmakers and experimental studios will likely get more out of Veo 3, because its scene ambition can unlock visuals that feel less templated, especially during concept development. Social creators, meanwhile, may get the best return from Kling 2.5, because cost and iteration speed usually beat perfection in short-form publishing. According to Adobe's 2024 digital trends research, brands increasingly prioritize content velocity alongside quality, and that matches what we're seeing in AI video pipelines. A mid-size Shopify brand would likely sort the tools the same way; that's the practical read, not the flashy one. If you're building a broader OpenAI workflow, read this alongside our main guide to the OpenAI, ChatGPT and generative AI product ecosystem, and related pieces on ChatGPT image generation, agents, and multimodal creation.

Step-by-Step Guide

Step 1: Define a fixed prompt suite

Start with 4 to 6 prompts that reflect real production work, not fantasy edge cases. Use one product ad prompt, one cinematic environment prompt, one human performance prompt, and one stylized social prompt. Keep wording identical across Sora 2, Veo 3, and Kling 2.5. And log any model-specific settings so your test stays fair.
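To keep wording identical across models, it can help to store the suite once and generate the full prompt-by-model test matrix from it. The sketch below assumes Python; the model labels, prompt texts, and the `build_test_matrix` helper are hypothetical placeholders for illustration, not official API identifiers.

```python
from dataclasses import dataclass, field

# Hypothetical model labels for logging; not official API identifiers.
MODELS = ["sora-2-pro", "veo-3", "kling-2.5"]


@dataclass(frozen=True)
class TestPrompt:
    category: str  # e.g. "product_ad" or "stylized_social"
    text: str      # the exact wording every model receives


@dataclass
class RunRecord:
    model: str
    prompt: TestPrompt
    settings: dict = field(default_factory=dict)  # model-specific settings, logged for fairness


def build_test_matrix(prompts, models, settings_by_model):
    """Pair every prompt with every model, reusing the same prompt object so wording can't drift."""
    return [
        RunRecord(model=m, prompt=p, settings=dict(settings_by_model.get(m, {})))
        for p in prompts
        for m in models
    ]


# Placeholder prompts: one per category the guide suggests.
SUITE = [
    TestPrompt("product_ad", "A glass skincare bottle on marble, liquid splash, slow push-in."),
    TestPrompt("cinematic_env", "Wide aerial shot of a foggy coastal town at dawn."),
    TestPrompt("human_performance", "A presenter speaks to camera and gestures toward a product."),
    TestPrompt("stylized_social", "Vertical, bold-color cutout animation of sneakers bouncing."),
]

matrix = build_test_matrix(SUITE, MODELS, {"veo-3": {"note": "default settings"}})

for run in matrix:
    print(run.model, run.prompt.category, run.settings)
```

Because each `RunRecord` points at the same frozen `TestPrompt`, a typo fixed in one place is fixed for every model, and the per-model `settings` dict is where any platform-specific choices get logged.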

Step 2: Lock your export settings

Choose the same aspect ratio, duration target, and output quality wherever the tools allow it. If one platform offers different motion presets or enhancement modes, document them before you generate anything. This sounds boring, but it's what keeps a comparison honest. Otherwise you're reviewing settings, not models.
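One way to document deviations before generating is to diff each platform's actual settings against a single locked target. This is a minimal Python sketch; the setting names and values (including `motion_preset`) are hypothetical stand-ins for whatever each tool actually exposes.

```python
# Target export settings shared by every model (placeholder values).
LOCKED_SETTINGS = {"aspect_ratio": "9:16", "duration_s": 8, "quality": "high"}


def settings_deviations(locked, actual):
    """Return {setting: (locked_value, actual_value)} for anything that differs from the lock.

    Settings that only one platform exposes (for example a motion preset) are
    reported with a locked value of None so they get documented too.
    """
    deviations = {}
    for key, target in locked.items():
        value = actual.get(key)
        if value != target:
            deviations[key] = (target, value)
    for key in sorted(actual.keys() - locked.keys()):
        deviations[key] = (None, actual[key])
    return deviations


# Hypothetical run where one platform capped duration and added its own preset.
actual_run = {"aspect_ratio": "9:16", "duration_s": 5, "quality": "high", "motion_preset": "cinematic"}
print(settings_deviations(LOCKED_SETTINGS, actual_run))
# {'duration_s': (8, 5), 'motion_preset': (None, 'cinematic')}
```

An empty result means the run matched the lock; anything else goes into your test log next to the clip.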

Step 3: Score prompt adherence first

Look at whether the model actually followed the brief before admiring the visuals. A gorgeous clip that ignores the product, misses the action, or changes the subject halfway through should lose points. We recommend scoring adherence on a 10-point scale, because adherence usually predicts how editable a clip will be later, and editability is what creators pay for.
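A 10-point adherence score can be made repeatable by starting at 10 and deducting a fixed penalty for each failure type named above. The guide doesn't specify deduction sizes, so the weights in this Python sketch are hypothetical and should be tuned to your own briefs.

```python
# Hypothetical penalty weights; the guide specifies a 10-point scale but not the deductions.
DEDUCTIONS = {
    "ignores_product": 4,
    "misses_action": 3,
    "subject_changes_midway": 3,
}


def adherence_score(failures, max_score=10):
    """Start from max_score and subtract one penalty per distinct brief failure, floored at 0."""
    unknown = set(failures) - set(DEDUCTIONS)
    if unknown:
        raise ValueError(f"unrecognized failure labels: {sorted(unknown)}")
    penalty = sum(DEDUCTIONS[f] for f in set(failures))
    return max(0, max_score - penalty)


print(adherence_score([]))                 # 10: followed the brief
print(adherence_score(["misses_action"]))  # 7
print(adherence_score(["ignores_product", "subject_changes_midway"]))  # 3
```

Fixed deductions keep two reviewers from scoring the same clip very differently, which matters more for a fair comparison than the exact weights.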

Step 4: Measure revision readiness

Test each model with a second-pass prompt that asks for a controlled change, like different lighting or slower camera movement. See whether the system preserves the original idea while making the edit. This is where Sora 2 Pro often earned its keep in our analysis. Good first outputs matter, but usable revisions matter more.
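Revision readiness has two parts: did the requested change land, and did everything else survive? The Python sketch below assumes a reviewer jots down key attributes of each clip as short text notes; the attribute names and the `revision_readiness` helper are hypothetical, and real comparisons still depend on human judgment of what counts as "the same".

```python
def revision_readiness(original, revised, requested_change):
    """Check a second-pass edit: did the requested attribute change, and how much else survived?

    original / revised: a reviewer's notes on each clip as {attribute: description}.
    requested_change: the attribute the second-pass prompt asked to change.
    Returns (change_applied, preservation_ratio) where the ratio covers every
    other attribute noted on the original clip.
    """
    change_applied = revised.get(requested_change) != original.get(requested_change)
    others = [k for k in original if k != requested_change]
    if not others:
        return change_applied, 1.0
    preserved = sum(1 for k in others if revised.get(k) == original[k])
    return change_applied, preserved / len(others)


# Hypothetical reviewer notes for a "switch to dusk lighting" revision.
first_pass = {"subject": "amber bottle", "lighting": "daylight", "camera": "slow push-in", "background": "marble"}
second_pass = {"subject": "amber bottle", "lighting": "dusk", "camera": "slow push-in", "background": "wood"}

applied, ratio = revision_readiness(first_pass, second_pass, "lighting")
print(applied, round(ratio, 2))  # True 0.67
```

Here the lighting change landed, but the background also drifted, so only two of the three untouched attributes were preserved.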

Step 5: Calculate cost per usable clip

Track generation credits, reruns, and the percentage of clips you'd actually publish or hand to a client. Then divide total spend by usable outputs, not total outputs. That number gives a much truer view of value. And it often changes the winner.
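The calculation is simply total spend divided by usable outputs. This Python sketch uses hypothetical per-clip costs in arbitrary currency units to show how a cheaper model with a low hit rate can end up costing more per clip you actually ship.

```python
def cost_per_usable_clip(clip_costs, usable_flags):
    """Total spend (reruns included) divided by clips you'd actually publish or hand over."""
    if len(clip_costs) != len(usable_flags):
        raise ValueError("need exactly one usable flag per generated clip")
    usable = sum(1 for ok in usable_flags if ok)
    if usable == 0:
        return float("inf")  # money spent, nothing shippable
    return sum(clip_costs) / usable


# Hypothetical numbers: a cheaper model with a low hit rate vs. a pricier, steadier one.
cheap = cost_per_usable_clip([1.0] * 10, [True] + [False] * 9)
steady = cost_per_usable_clip([2.0] * 10, [True] * 4 + [False] * 6)
print(cheap, steady)  # 10.0 5.0
```

Per generated clip, the first model looks twice as cheap (1.0 vs. 2.0); per usable clip, it costs twice as much (10.0 vs. 5.0).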

Step 6: Match the winner to the workflow

Pick your model by team context rather than internet hype. If you're an agency, consistency and client revisions may push you toward Sora 2 Pro. If you're exploring visual concepts, Veo 3 might earn the slot. If you're shipping short-form content daily, Kling 2.5 could be the smart operational choice.
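Matching a model to team context can be framed as a weighted scorecard over the five criteria used in our testing. In this Python sketch, the weight profiles are hypothetical, `cost_efficiency` is assumed to be cost per usable clip rescaled to 0-10, and the scores belong to anonymous placeholder models; they are illustrative inputs, not our measured results for Sora 2 Pro, Veo 3, or Kling 2.5.

```python
CRITERIA = ["prompt_adherence", "temporal_stability", "editability", "motion_realism", "cost_efficiency"]

# Hypothetical weight profiles (each sums to 1.0); tune them to your own team.
PROFILES = {
    "agency":  dict(zip(CRITERIA, [0.30, 0.30, 0.30, 0.05, 0.05])),
    "concept": dict(zip(CRITERIA, [0.10, 0.10, 0.10, 0.60, 0.10])),
    "social":  dict(zip(CRITERIA, [0.15, 0.10, 0.10, 0.15, 0.50])),
}

# Illustrative 0-10 scores for three anonymous models; these are NOT measured results.
SCORES = {
    "model_a": dict(zip(CRITERIA, [9, 9, 9, 6, 5])),    # steady, revision-friendly
    "model_b": dict(zip(CRITERIA, [6, 6, 6, 10, 5])),   # ambitious motion, less stable
    "model_c": dict(zip(CRITERIA, [7, 7, 7, 7, 10])),   # cheap, fast iteration
}


def pick_model(scores, weights):
    """Return (best_model, weighted_totals) using a simple weighted sum over CRITERIA."""
    totals = {m: sum(weights[c] * s[c] for c in CRITERIA) for m, s in scores.items()}
    best = max(totals, key=totals.get)
    return best, totals


for team, weights in PROFILES.items():
    print(team, pick_model(SCORES, weights)[0])
# agency model_a
# concept model_b
# social model_c
```

The same scores produce three different winners once the weights change, which is the point of this step: the scorecard stays fixed, and team context decides what it rewards.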


Key Takeaways

- ✓Sora 2 Pro delivered the steadiest visual consistency across repeated prompt runs.

- ✓Veo 3 produced the boldest cinematic motion, but results varied more between takes.

- ✓Kling 2.5 stayed quick and cost-efficient for social-first experimentation.

- ✓Creators should score editability and prompt adherence, not only visual wow.

- ✓The best AI video generator in 2026 depends on format, budget, and revision load.