⚡ Quick Answer

The paper describes a multimodal model that draws vector sketches sequentially, one part at a time, rather than producing a whole sketch in one pass. That shift matters because part-wise generation offers control, editability, and a form of procedural visual reasoning that standard image generators usually don't.

The real hook in this new arXiv paper isn't just that another model can draw. It's that the AI agent sketches one part at a time. The authors of arXiv:2603.19500v1 describe a system that builds vector sketches sequentially, part by part, with a multimodal language model agent trained through supervised fine-tuning and a multi-turn process-reward reinforcement learning setup. That's a bigger shift than it sounds. Once a model draws in parts, its behavior starts to resemble procedural visual reasoning rather than a standard text-to-image pipeline. And that could matter for design software, tutoring tools, CAD workflows, and human-AI collaboration.

Why an AI agent that sketches one part at a time matters beyond image generation

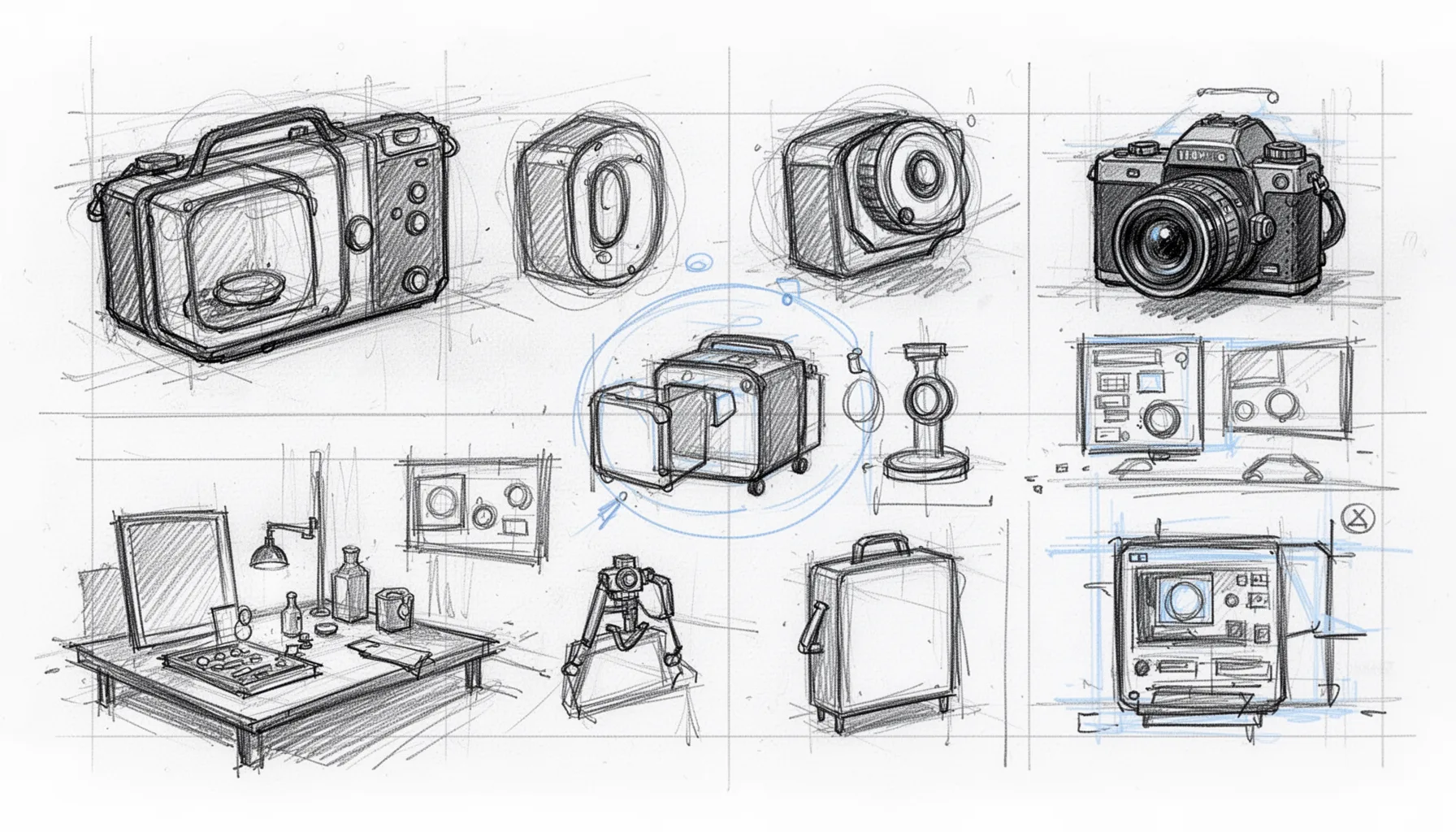

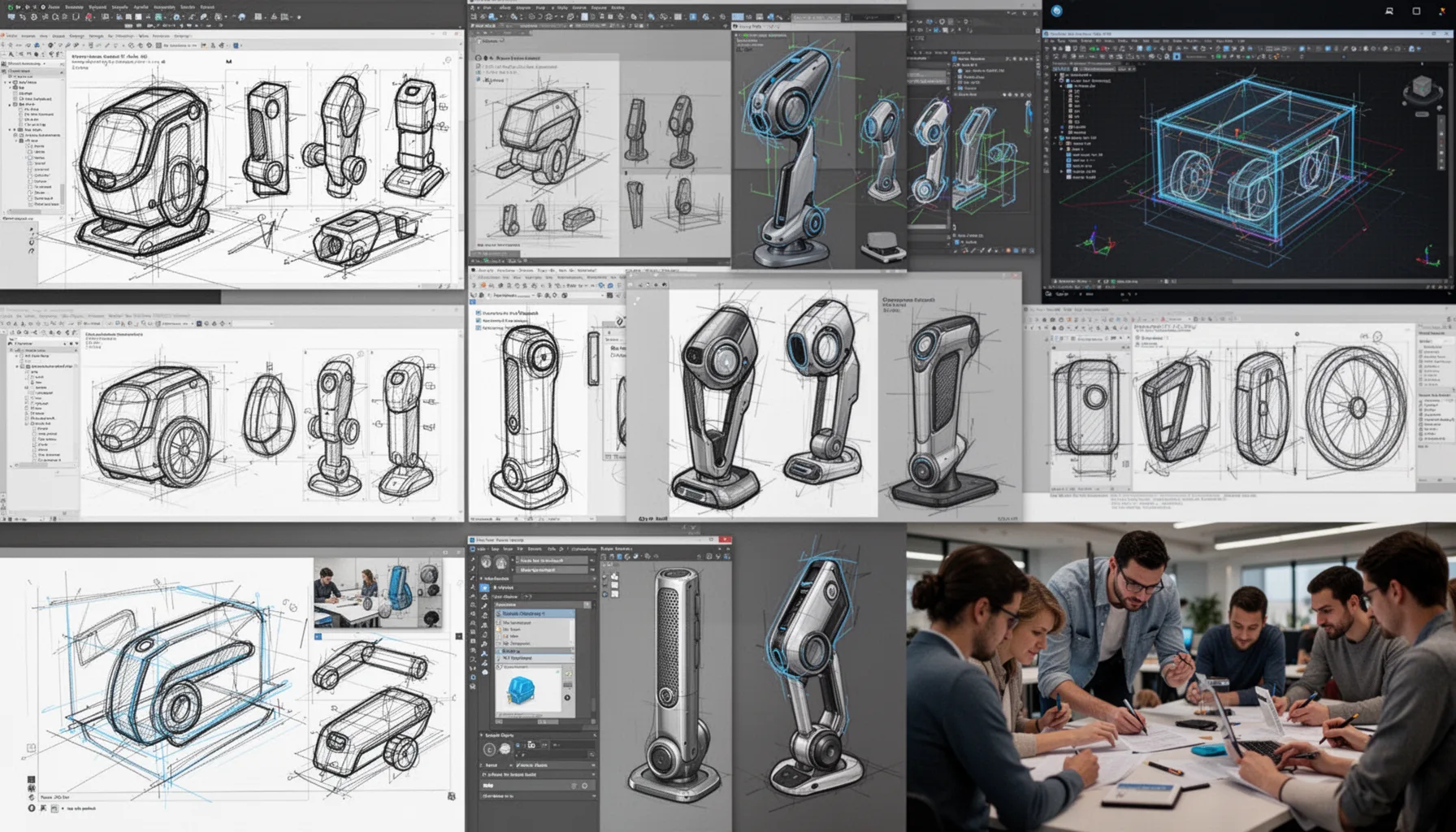

An agent that sketches one part at a time matters because drawing objects in parts gives people control that one-shot image systems usually don't provide. Most image generators, including diffusion-based tools, aim for a finished picture. Vectorization software often traces pixels afterward instead of reasoning about structure from the first stroke. We'd argue the paper's most consequential idea isn't raw artistic quality. It's procedural decomposition. A bicycle isn't one blob. It's wheels, a frame, handlebars, and a seat placed in relation to each other. Adobe Illustrator users already build this way by hand, and Figma or CAD users think in components rather than pixel mush. According to the abstract, the method trains a multimodal language-model agent with supervised fine-tuning followed by multi-turn process-reward reinforcement learning, which suggests a direct training focus on intermediate choices. That makes the work feel more relevant to assistive design tools than many flashy image demos. A sketch model that can add a "left wing" or revise a "front leg" on command fits real human workflows far better than a model that redraws the whole scene every single time.

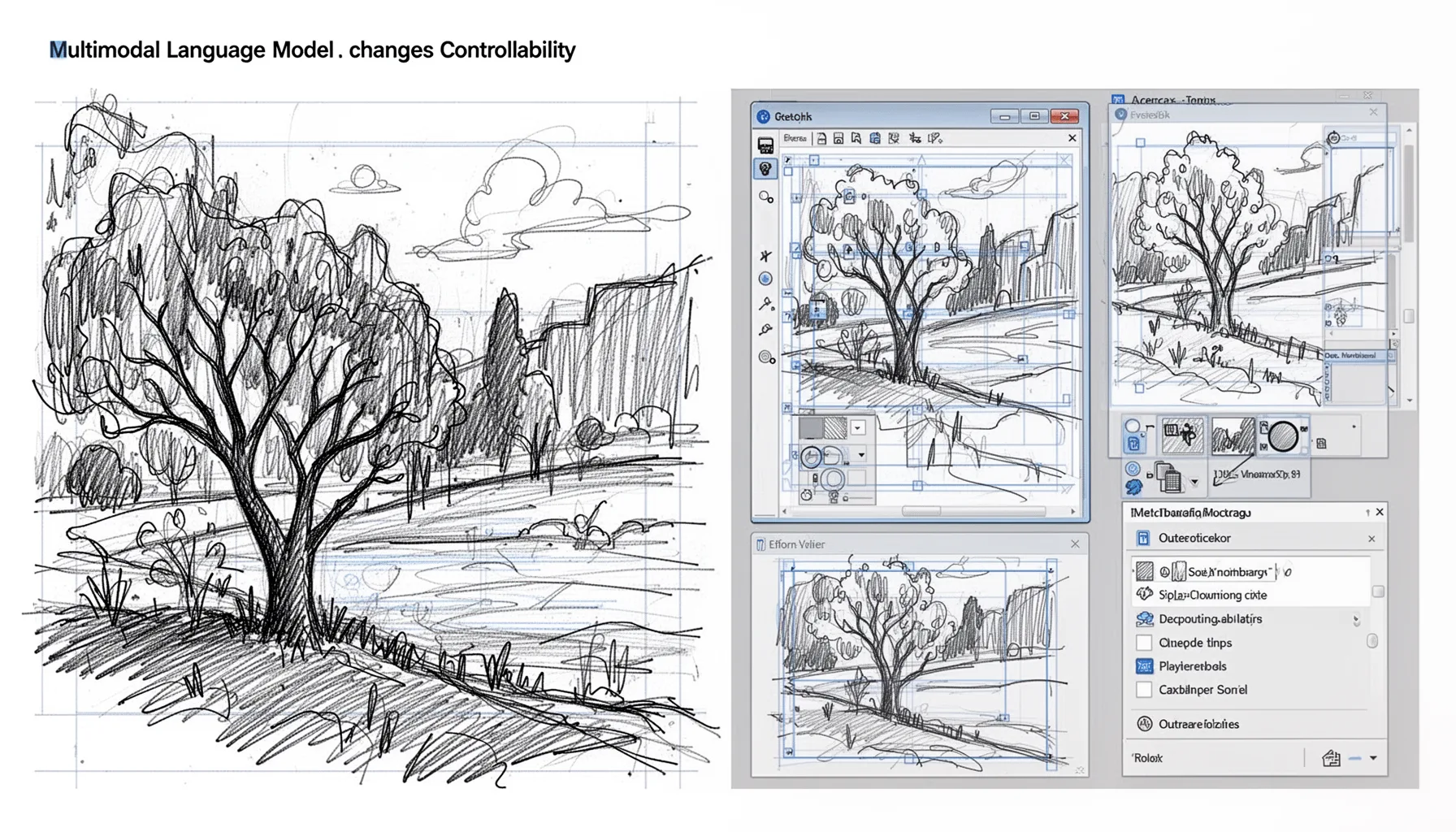

How vector sketch generation with a multimodal language model changes controllability

Vector sketch generation with a multimodal language model changes controllability because every drawing action becomes addressable, editable, and easier to line up with user intent. Vector primitives carry structure. Lines, curves, strokes, groups, and layers can all be modified after generation, which is why SVG output remains so useful in illustration and product design. Pixels don't offer that cleanly. The paper's part-based setup suggests the agent can plan a sequence like "draw head, then torso, then limbs." That gives users a natural place for mid-course correction. Think about an education app teaching a child to draw a fox. The system can say, "Let's add the ears next," instead of dropping a complete image with no visible reasoning at all. That's a friendlier pattern. We see the same appeal in CAD-adjacent tooling, where someone may want to isolate one component rather than rerender an entire assembly drawing. And compared with end-to-end vectorization pipelines, which often carry over noise from raster inputs, a multimodal agent drawing vector sketches starts from semantic parts instead of mere contour extraction.
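To make "addressable and editable" concrete, here is a minimal sketch of a part-wise drawing stored as SVG groups, using only Python's standard library. It is an illustration, not the paper's output format: the helper names `tag`, `add_part`, and `redraw_part`, the part ids, and the path coordinates are all assumptions made up for this example.

```python
import xml.etree.ElementTree as ET

SVG_NS = "http://www.w3.org/2000/svg"
ET.register_namespace("", SVG_NS)


def tag(name):
    """Return the namespaced tag name that ElementTree expects."""
    return f"{{{SVG_NS}}}{name}"


def add_part(svg, part_id, paths):
    """Hypothetical helper: append one semantic part as its own <g> group."""
    group = ET.SubElement(svg, tag("g"), {"id": part_id})
    for d in paths:
        ET.SubElement(group, tag("path"), {"d": d, "fill": "none", "stroke": "black"})
    return group


def redraw_part(svg, part_id, paths):
    """Hypothetical helper: replace the strokes of one part, leave the rest alone."""
    for group in svg.findall(tag("g")):
        if group.get("id") == part_id:
            for old in list(group):
                group.remove(old)
            for d in paths:
                ET.SubElement(group, tag("path"), {"d": d, "fill": "none", "stroke": "black"})
            return group
    raise KeyError(part_id)


# Build a two-part fox sketch, then revise only the ears.
sketch = ET.Element(tag("svg"), {"viewBox": "0 0 100 100"})
add_part(sketch, "head", ["M 30 60 L 50 85 L 70 60 Z"])
add_part(sketch, "ears", ["M 30 60 L 35 40 L 45 60"])

head_before = ET.tostring(sketch.find(tag("g")), encoding="unicode")
redraw_part(sketch, "ears", ["M 30 60 L 33 35 L 45 60", "M 55 60 L 67 35 L 70 60"])
head_after = ET.tostring(sketch.find(tag("g")), encoding="unicode")

print(ET.tostring(sketch, encoding="unicode"))
```

Because each part lives in its own group, the "ears" revision never touches the "head" strokes. That is the property a raster output cannot offer without re-segmenting the image.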

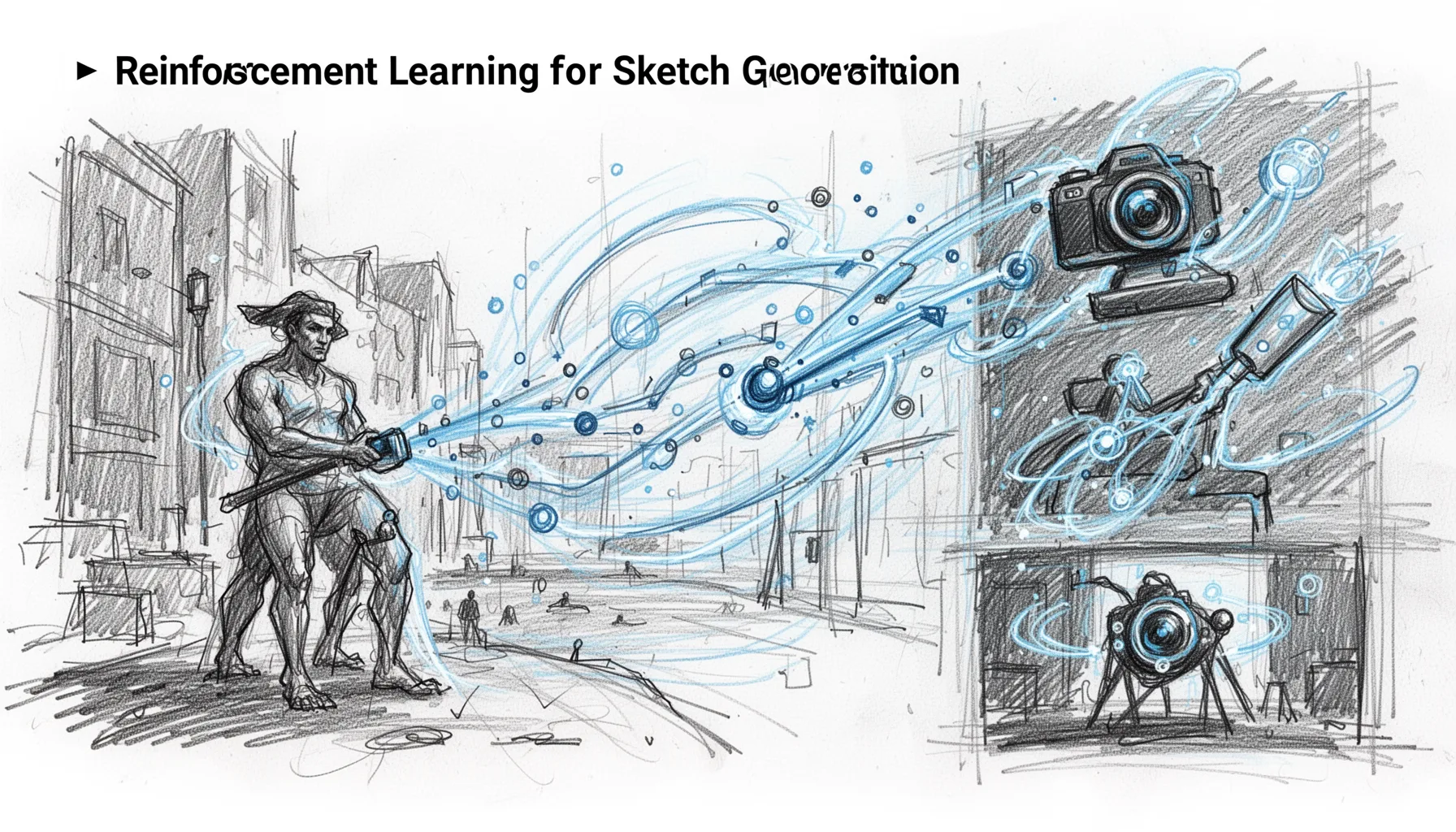

What reinforcement learning for sketch generation adds, and where it still breaks

Reinforcement learning for sketch generation adds a way to reward useful intermediate drawing behavior, though it probably won't cure every structural mistake. The abstract specifically mentions a novel multi-turn process-reward reinforcement learning stage. That implies the training signal cares about the path through the drawing task, not just the finished sketch. That's smart. In agent work, process rewards often improve decomposition and cut down brittle one-step guesses, and we've seen similar logic in reasoning research from OpenAI, DeepMind, and academic teams working on chain-of-thought verification. For a sketch agent, that could mean rewarding the right part order, sensible placement, or adherence to text constraints across turns. A failure case isn't hard to picture: drawing a cat with four legs, then attaching two to the wrong side of the torso, or giving a horse wings because the model drifted from an earlier instruction. RL may reduce some of that drift. But it'll still likely stumble on global proportion, occluded parts, and rare object classes where data runs thin, much as older sketch datasets such as Google's Quick, Draw! tend to overrepresent simplified, stereotyped forms.
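The difference between a process reward and an outcome-only reward is easier to see in a toy example. The sketch below is not the paper's reward, which the abstract does not specify. The scoring rule (+1 for a requested part drawn for the first time, -1 for anything else), the function names, and the horse episode are all assumptions chosen for illustration.

```python
def step_rewards(turns, requested):
    """Toy per-turn reward: +1 for a new requested part, -1 otherwise (assumed rule)."""
    seen = set()
    rewards = []
    for part in turns:
        if part in requested and part not in seen:
            rewards.append(1.0)
        else:
            rewards.append(-1.0)  # duplicate part, or one the prompt never asked for
        seen.add(part)
    return rewards


def returns_to_go(rewards, gamma=1.0):
    """Discounted sum of the rewards from each turn to the end of the episode."""
    out = []
    running = 0.0
    for r in reversed(rewards):
        running = r + gamma * running
        out.append(running)
    return list(reversed(out))


def outcome_reward(turns, requested):
    """Outcome-only baseline: 1.0 if the final part set matches exactly, else 0.0."""
    return 1.0 if set(turns) == set(requested) else 0.0


# A horse prompt where the agent drifts and adds wings on the third turn.
requested = {"body", "legs", "tail"}
episode = ["body", "legs", "wings", "tail"]

per_turn = step_rewards(episode, requested)
print(per_turn)                             # [1.0, 1.0, -1.0, 1.0]
print(returns_to_go(per_turn))              # [2.0, 1.0, 0.0, 1.0]
print(outcome_reward(episode, requested))   # 0.0
```

The outcome-only score says the episode failed without saying where. The per-turn signal singles out the third turn, which is the kind of credit assignment a multi-turn process reward is meant to provide.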

How part-based sketch generation AI could affect design, education, CAD, and collaboration

Part-based sketch generation could affect real products because editable, sequential sketches match how people already create, teach, and revise drawings. In design software, a part-wise agent could generate a product concept as separate vector groups. Then a designer could delete only the handle of a mug or change the wheelbase of a scooter concept. That's practical. In education, the same setup supports step-by-step instruction, similar to how Procreate tutorials or Khan Academy-style lessons break drawing into stages. For CAD and technical illustration, part sequencing could support diagram generation where labels and component boundaries matter more than photorealism. Autodesk and Dassault Systèmes users already live inside structured object hierarchies, so a sketching agent that understands parts fits that mental model better than a raster generator ever will. And human-AI collaboration improves when the system exposes its reasoning through actions instead of only outputs. When users can say "keep the frame, redraw the fork," trust goes up because the output becomes editable, not mysterious. That doesn't make it production-ready overnight. But it does make the research far more relevant than many academic drawing papers. We'd argue that's the real commercial angle.

Step-by-Step Guide

- 1

Read the paper as an agent paper first

Start by treating the work as procedural reasoning research, not only as sketch synthesis. The sequence of actions matters as much as the final image. That lens makes the reinforcement learning stage and part decomposition much easier to understand. And it highlights why this could matter for future interactive tools.

- 2

Map parts to real user controls

List the controllable elements a product team would expose in an interface: head, handle, wheel, wing, label, or connector. Then ask whether the model can generate, revise, or remove each one independently. If it can't, the system isn't yet delivering the promise of part-wise interaction. That's the practical test.

- 3

Compare against one-shot generators

Put the method beside a text-to-image system and a raster-to-vector pipeline. Measure how easily a user can make a local correction without restarting the whole drawing. This comparison matters because many demos look good until editing begins. And editing is where part-based systems should win.
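One way to put a number on "local correction" is to check how many non-target parts survive an edit unchanged. The metric below is a hypothetical one invented for this article, not something taken from the paper. It assumes each sketch can be represented as a dictionary from part name to a list of stroke strings.

```python
def edit_locality(before, after, target):
    """Fraction of non-target parts left untouched by an edit (1.0 = perfectly local).

    `before` and `after` map part names to lists of stroke strings. This
    representation and the metric itself are assumptions for illustration.
    """
    others = (set(before) | set(after)) - {target}
    if not others:
        return 1.0
    unchanged = sum(1 for part in others if before.get(part) == after.get(part))
    return unchanged / len(others)


before = {
    "frame": ["M 10 50 L 50 20 L 90 50"],
    "fork": ["M 90 50 L 95 80"],
    "wheels": ["M 10 80 A 15 15 0 1 0 11 80", "M 95 80 A 15 15 0 1 0 96 80"],
}

# Part-wise edit: only the fork strokes change.
part_wise = dict(before, fork=["M 90 50 L 100 80"])

# One-shot regeneration: every part comes back slightly different.
regenerated = {
    "frame": ["M 12 50 L 50 22 L 88 50"],
    "fork": ["M 88 50 L 100 80"],
    "wheels": ["M 12 80 A 15 15 0 1 0 13 80", "M 100 80 A 15 15 0 1 0 101 80"],
}

print(edit_locality(before, part_wise, "fork"))    # 1.0
print(edit_locality(before, regenerated, "fork"))  # 0.0
```

Exact string equality is a deliberately strict comparison. A real evaluation would probably want a geometric tolerance, since a regenerated frame that moved by half a pixel is not the same failure as one that changed shape.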

- 4

Inspect failure cases by part order

Review examples where the model chooses the wrong sequence, misses a component, or drifts after several turns. Sequence errors often reveal more than final-output scores do. A dog drawn without ears tells you something, but a dog that gains a second tail halfway through tells you much more. That's where the agent framing becomes concrete.
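A sequence audit like this can be automated if each turn is logged with the part it drew. The sketch below assumes such a log exists as a plain list of part names. The function name `audit_sequence` and the dog example are hypothetical.

```python
def audit_sequence(turns, expected):
    """Report missing, duplicated, and unexpected parts in a logged drawing sequence.

    `turns` is the list of part names in the order they were drawn (an assumed
    log format). Duplicates and unexpected parts carry the turn index where
    they occurred, so drift can be located in time.
    """
    first_seen = {}
    duplicates = []
    unexpected = []
    for index, part in enumerate(turns):
        if part not in expected:
            unexpected.append((index, part))
        elif part in first_seen:
            duplicates.append((index, part))
        else:
            first_seen[part] = index
    missing = sorted(set(expected) - set(first_seen))
    return {"missing": missing, "duplicates": duplicates, "unexpected": unexpected}


expected_dog = ["head", "ears", "body", "legs", "tail"]
logged_turns = ["head", "body", "legs", "tail", "tail"]

report = audit_sequence(logged_turns, expected_dog)
print(report)
# {'missing': ['ears'], 'duplicates': [(4, 'tail')], 'unexpected': []}
```

The missing ears and the second tail show up as separate findings, and only the second one comes with a turn index. That index is what separates a sequence-level failure from a final-output score.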

- 5

Test collaborative drawing workflows

Run small user tests where people co-draw with the model instead of just prompting it once. Ask users to interrupt, revise, and redirect the sketch midway. If the system stays coherent, it has real collaborative value. If it collapses after edits, it still behaves more like a generator than an agent.

- 6

Translate results into product requirements

Turn the paper's claims into software needs such as layer separation, SVG export, revision memory, and part-level undo. Research value rises when teams can connect it to interface decisions and evaluation metrics. This is especially useful for design, edtech, and CAD builders. And it keeps the analysis grounded.
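Two of those requirements, revision memory and part-level undo, reduce to a small data structure. The class below is a minimal sketch under the assumption that each part is a list of path strings. `PartSketch` and its methods are hypothetical names, not an API from the paper or from any design tool.

```python
class PartSketch:
    """Hypothetical container that keeps a revision history per part."""

    def __init__(self):
        # part name -> list of revisions, each revision a list of path strings
        self._history = {}

    def set_part(self, name, paths):
        """Add a part, or record a new revision of an existing one."""
        self._history.setdefault(name, []).append(list(paths))

    def undo(self, name):
        """Roll one part back to its previous revision without touching the others."""
        revisions = self._history[name]
        if len(revisions) < 2:
            raise ValueError(f"no earlier revision of {name!r} to restore")
        revisions.pop()

    def current(self):
        """Return the latest revision of every part, in creation order."""
        return {name: revisions[-1] for name, revisions in self._history.items()}


bike = PartSketch()
bike.set_part("frame", ["M 10 50 L 50 20 L 90 50"])
bike.set_part("fork", ["M 90 50 L 95 80"])
bike.set_part("fork", ["M 90 50 L 105 80"])  # the user asks for a redrawn fork

bike.undo("fork")  # the user changes their mind about the fork only
print(bike.current())
```

A global undo stack would also have rolled back any frame edits made after the fork revision. Keeping history per part is what makes "keep the frame, redraw the fork" cheap to support.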

Key Takeaways

- ✓ Part-wise sketching gives users finer control than one-shot image generation or auto-vectorization workflows.

- ✓ The paper pairs supervised fine-tuning with process-reward reinforcement learning across multiple drawing turns.

- ✓ That makes the model feel more like a planning agent than a plain sketch generator.

- ✓ For design tools and education, editable vector parts are more useful than flat pixels.

- ✓ The method will likely still struggle with global proportion, occluded parts, and rare object classes where data runs thin.