⚡ Quick Answer

Claude Code recursive self writing describes a workflow where AI tools generate prompts, propose patches, run tests, and iterate on results within bounded loops. It does not mean Claude independently rewrites itself without human controls, because repositories, CI gates, permissions, and rollback policy still decide what ships.

Claude Code recursive self writing is one of those phrases that outruns the engineering underneath it. It sounds enormous. And yes, in one narrow lane, something real is happening: coding agents can propose code, run tests, inspect failures, and sketch the next patch with very little human typing. But that headline skips the consequential part. What we're actually seeing in 2026 isn't software waking up and authoring itself from scratch. It's a recursive toolchain wrapped in human rules, CI systems, repository permissions, and deployment policy.

What does Claude Code recursive self writing actually mean?

Claude Code recursive self writing usually refers to repeated coding loops in which model output feeds the next development step. That distinction isn't trivial. Too many posts mash together code generation, autonomous task execution, and true self-modifying systems, even though software engineers treat those as different risk categories with different controls. In practice, the loop tends to look familiar: a developer or supervisor agent frames the task, Claude Code suggests edits, the tool runs tests or linters, failure output comes back as fresh context, and the model drafts another patch. Anthropic's coding tools, along with agent setups from GitHub Copilot Workspace, Cognition, and Sourcegraph, all point in this direction, but none of them removes the need for repository-level access policy. We'd argue the phrase “AI writing itself” is catchy but still gets the story wrong. Take a Python service: Claude Code updates unit-tested business logic after a failing pytest run, yet a GitHub branch protection rule still blocks the merge until human review or required checks clear.
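To make that loop concrete, here is a minimal Python sketch of a bounded propose-test-retry cycle. It assumes two hypothetical callables, `propose_patch` (standing in for a model call) and `run_tests` (standing in for a sandboxed pytest run); neither is a Claude Code API. The design choices that matter are the hard `max_iterations` budget and the fact that the function only returns a candidate patch, so merging stays with branch protection and reviewers.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class CheckResult:
    passed: bool
    output: str  # raw test-runner output, fed back to the model on failure


@dataclass
class LoopOutcome:
    patch: Optional[str]       # last candidate patch (never merged here)
    passed: bool               # True only if that patch passed the checks
    iterations: int            # propose/test cycles actually used
    history: List[str] = field(default_factory=list)  # failure output per attempt


def run_bounded_loop(
    task: str,
    propose_patch: Callable[[str, List[str]], str],
    run_tests: Callable[[str], CheckResult],
    max_iterations: int = 3,
) -> LoopOutcome:
    """Propose a patch, test it, and retry with failure context until a budget runs out.

    `propose_patch` and `run_tests` are hypothetical stand-ins for a model call
    and a sandboxed test run. The function only returns a candidate; merging is
    left to branch protection and human review.
    """
    if max_iterations < 1:
        raise ValueError("max_iterations must be at least 1")
    history: List[str] = []
    patch: Optional[str] = None
    for attempt in range(1, max_iterations + 1):
        patch = propose_patch(task, history)   # model drafts from task + prior failures
        result = run_tests(patch)              # tests or linters judge the draft
        if result.passed:
            return LoopOutcome(patch, True, attempt, history)
        history.append(result.output)          # failure output becomes fresh context
    return LoopOutcome(patch, False, max_iterations, history)
```

With a fake proposer that only succeeds on its second attempt, `outcome.passed` is `True`, `outcome.iterations` is `2`, and the first attempt's failure text sits in `outcome.history`.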

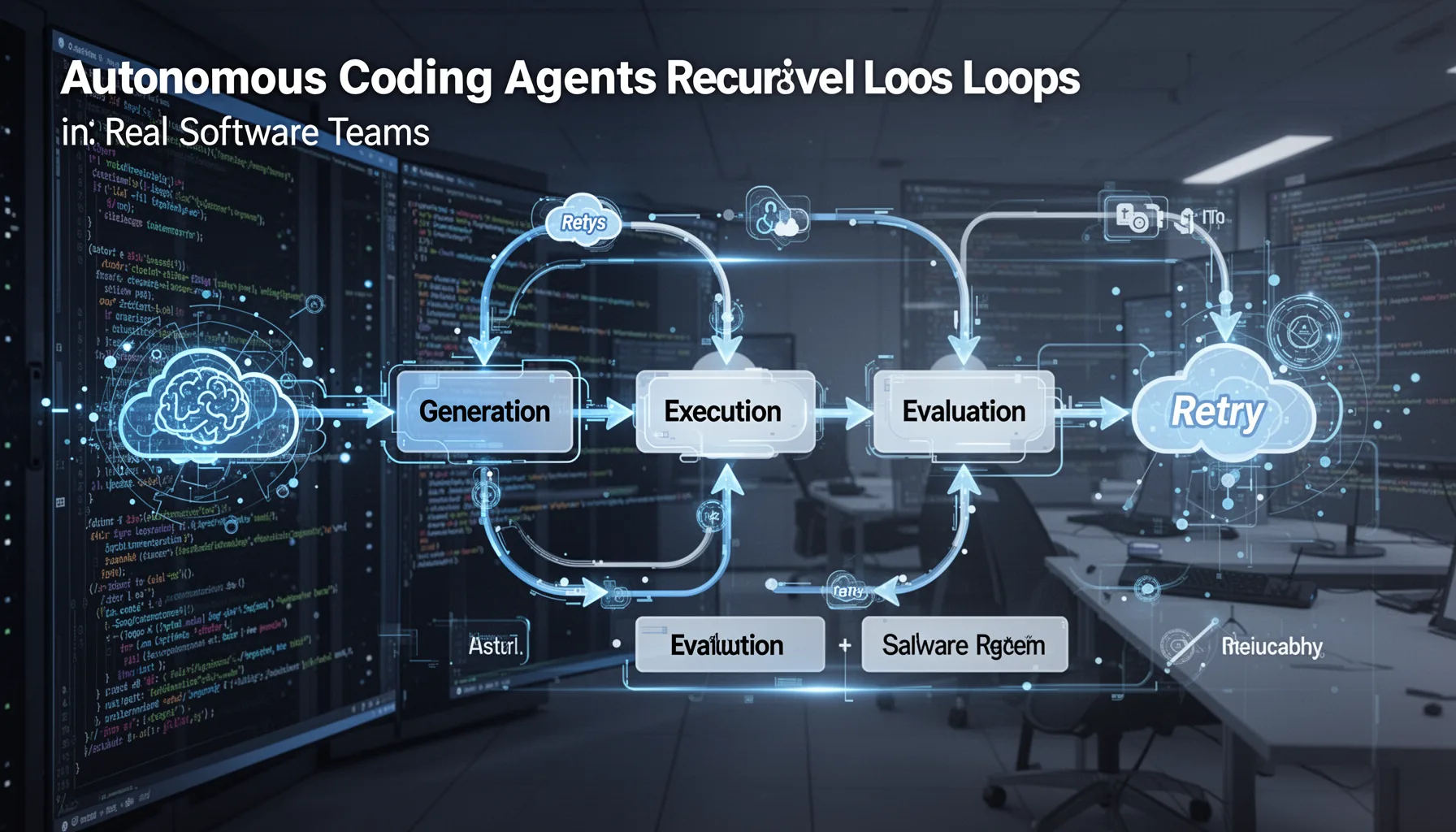

How autonomous coding agents run recursive loops in real software teams

Autonomous coding agents run recursive loops by chaining generation, execution, evaluation, and retry inside a controlled software delivery pipeline. The recursion is procedural, not mystical. A modern engineering stack already has most of the machinery: issue trackers, test harnesses, CI runners, staging environments, observability dashboards. Agents now thread those pieces together faster than many teams expected. According to GitHub's 2024 developer research, a large share of developers already relied on AI coding assistance weekly, but assisted completion is a very different thing from unattended repository mutation. Claude Code gets more useful when the environment is instrumented well: clear task scopes, deterministic tests, typed interfaces, and readable logs give the model better feedback on each loop. That's why mature teams get a real leg up over chaotic ones, and we'd argue it's a bigger shift than it sounds. At companies like Block or Shopify, where internal developer platforms enforce standard CI and deployment controls, agentic coding can probably speed up maintenance work far more safely than in a startup repo with weak tests and broad admin access.
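Readable logs matter because the failure text is what the model sees on the next pass. The minimal sketch below assumes pytest-style output, where failing tests appear as `FAILED ...` or `ERROR ...` summary lines and assertion details start with `E `; it trims a raw log down to those lines and caps the length. The function name and the 20-line budget are illustrative choices, not part of any tool.

```python
from typing import List

# Assumes pytest-style output: "FAILED"/"ERROR" summary lines and "E " detail lines.
FAILURE_PREFIXES = ("FAILED", "ERROR", "E ")


def summarize_failures(raw_output: str, max_lines: int = 20) -> str:
    """Reduce a raw test log to the lines the model most needs on its next attempt."""
    keep: List[str] = [
        line.rstrip()
        for line in raw_output.splitlines()
        if line.startswith(FAILURE_PREFIXES)  # str.startswith accepts a tuple of prefixes
    ]
    if not keep:
        return "No failure lines found; attach the full log instead."
    if len(keep) > max_lines:
        omitted = len(keep) - max_lines
        keep = keep[:max_lines] + [f"... ({omitted} more line(s) omitted)"]
    return "\n".join(keep)
```

Keeping the summary short and deterministic is the point: noisy context on each loop tends to produce noisy patches.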

Has AI started writing itself? Separating software development hype from substance

The claim that AI has started writing its own software has a kernel of truth, but most of the viral phrasing stretches past the evidence. Software can now generate support code for the same pipelines that evaluate later model-generated code, and that recursive setup is new enough to deserve close attention. Yet the practical result still turns on whether teams define approved actions, review thresholds, and rollback triggers before they give agents room to operate. A self-improving vibe can appear when Claude Code drafts a test, relies on that test to justify a patch, and then proposes a prompt template for similar work later. But we shouldn't confuse looped assistance with open-ended self-authorship. One example: an internal platform team might let Claude Code update YAML CI configs and helper scripts after repeated build failures, but SRE approval remains the gate before any production deployment policy changes land. Our view is pretty plain: there's real acceleration here, though a lot of the loudest commentary feels like automation theater dressed up as inevitability.

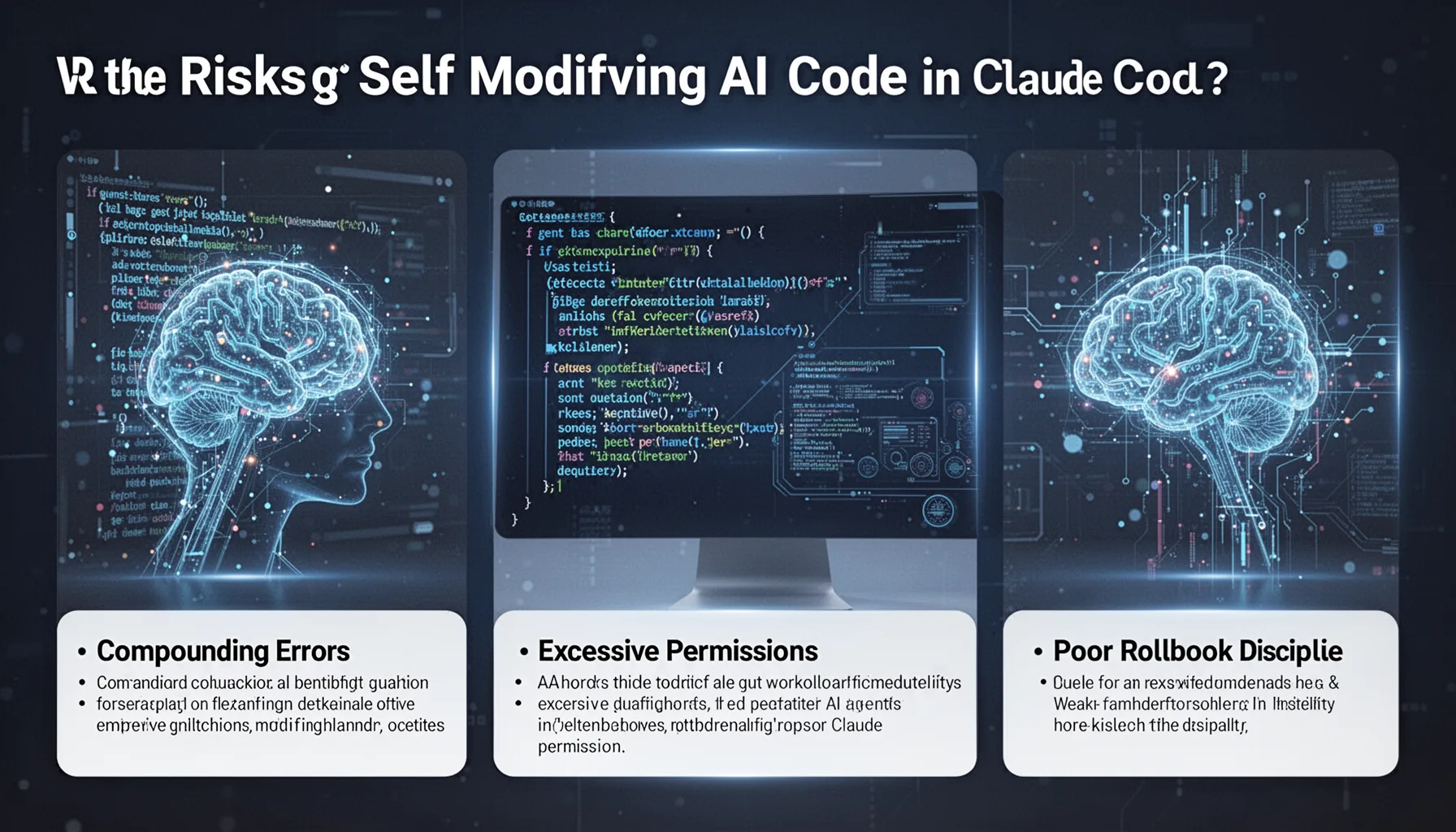

What are the risks of self-modifying AI code in Claude Code workflows?

The risks of self-modifying AI code in Claude Code workflows come from compounding errors, excessive permissions, weak observability, and poor rollback discipline. That's the serious part. If an agent can edit code, update tests, and shape its own evaluation environment, then a weakly governed loop can reward passing checks over preserving actual system intent. We've seen a version of this in ordinary software already, where teams overfit to metrics and miss production regressions. Agents can compress that mistake into minutes. Standards and controls make the difference: SOC 2 change-management practices, least-privilege IAM, signed commits, protected branches, and deployment approvals all shrink the blast radius. OpenAI, Anthropic, and Google DeepMind have all framed agent safety partly as a tooling problem, not just a model problem, and we think that's the right call. Consider a fintech app where Claude Code updates fraud-detection thresholds and related tests: if the test fixture is flawed, the loop may happily validate a bad patch unless independent monitoring catches a spike in false negatives after redeployment.
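One concrete guard against a loop grading its own work is to flag any patch that edits both the code and the checks that judge it, as in the fraud-detection example. The sketch below assumes hypothetical path conventions (`tests/`, `conftest.py`, CI workflow files) and uses `fnmatch.fnmatchcase` from the Python standard library. A flagged patch would go to an independent reviewer instead of being trusted on a green test run.

```python
from fnmatch import fnmatchcase
from typing import Iterable

# Hypothetical conventions for "the checks that judge the code".
# fnmatch's "*" also matches "/", so "tests/*" covers nested test folders.
CHECK_PATTERNS = (
    "tests/*", "*/tests/*", "conftest.py", "*/conftest.py",
    ".github/workflows/*", "pytest.ini", "tox.ini",
)


def is_check_file(path: str) -> bool:
    return any(fnmatchcase(path, pattern) for pattern in CHECK_PATTERNS)


def grades_its_own_work(changed_paths: Iterable[str]) -> bool:
    """True when a single patch edits both code and the checks that judge it."""
    paths = list(changed_paths)
    touches_checks = any(is_check_file(p) for p in paths)
    touches_code = any(not is_check_file(p) for p in paths)
    return touches_checks and touches_code
```

A patch that changes `fraud/thresholds.py` and `fraud/tests/test_thresholds.py` together returns `True`; a patch touching only one side returns `False`.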

What comes next for recursive AI programming with Claude Code? Autonomous coding trends for 2026

The future of recursive AI programming with Claude Code points toward narrower autonomy, stronger policy engines, and much better audit trails. That's where the market seems to be leaning. The products that win probably won't be the ones promising total self-writing software, because enterprises buy control, logs, and rollback confidence before they buy spectacle. We expect coding agents to become standard for maintenance work like dependency updates, regression fixes, flaky test triage, and documentation refreshes, especially when tasks sit inside measurable acceptance criteria. Anthropic, GitHub, JetBrains, and Atlassian all have reasons to push deeper into this model, and standards bodies such as NIST will likely keep shaping how teams document AI-assisted software changes. We'd argue that's the real story: Claude Code recursive self writing will matter most not as a science-fiction milestone, but as a disciplined engineering loop that turns software delivery into a tighter conversation between models, tests, and humans.

Step-by-Step Guide

Step 1: Map the loop boundaries

Start by writing down where the agent can act and where it must stop. Define whether Claude Code can only edit a branch, whether it can run tests, and whether it can open pull requests. If the boundary is fuzzy, the recursion gets fuzzy too. Teams that do this early usually avoid the worst policy mistakes.
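Writing the boundary down can be as literal as a small policy object. In this sketch the action names (`edit_branch`, `run_tests`, `open_pull_request`, `merge`, `deploy`, `change_permissions`) are hypothetical labels, not Claude Code commands: allowed actions proceed, a short list escalates to a human, and anything not written down is denied by default.

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass(frozen=True)
class LoopBoundary:
    """Hypothetical written-down limits for one agent loop."""
    # Actions the agent may take on its own inside the loop.
    allowed: FrozenSet[str] = frozenset({"edit_branch", "run_tests", "open_pull_request"})
    # Actions that always stop the loop and hand control to a human.
    escalate: FrozenSet[str] = frozenset({"merge", "deploy", "change_permissions"})

    def check(self, action: str) -> str:
        if action in self.escalate:
            return "escalate"
        if action in self.allowed:
            return "allow"
        return "deny"  # anything not written down is out of bounds by default
```

Deny-by-default is the design choice worth keeping even if the action names change: a new tool capability should require an explicit policy update, not slip into the loop silently.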

Step 2: Constrain repository permissions

Give the agent the least privilege it needs for the task. Restrict write access to specific repos, folders, or branch patterns, and require protected branches for anything tied to production systems. GitHub branch rules, signed commits, and CODEOWNERS are boring, but they’re the difference between speed and chaos.
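Real enforcement belongs in the hosting platform (branch rules, CODEOWNERS, token scopes), but an agent harness can also pre-check a push before attempting it. This sketch assumes a hypothetical `agent/*` branch convention and an illustrative folder allowlist; it mirrors least privilege on the client side and does not replace server-side rules.

```python
from fnmatch import fnmatchcase
from typing import Sequence

# Hypothetical scope for one agent task. Real enforcement still lives in
# server-side branch rules, CODEOWNERS, and token permissions.
PROTECTED_BRANCHES = ("main", "release/*")
AGENT_BRANCHES = ("agent/*",)
ALLOWED_PATHS = ("services/report-export/*", "docs/*")


def _match_any(value: str, patterns: Sequence[str]) -> bool:
    return any(fnmatchcase(value, p) for p in patterns)


def push_allowed(branch: str, changed_paths: Sequence[str]) -> bool:
    """Client-side pre-check before the harness attempts a push."""
    if _match_any(branch, PROTECTED_BRANCHES):
        return False  # never push directly to protected branches
    if not _match_any(branch, AGENT_BRANCHES):
        return False  # agent work must stay in its own branch namespace
    # Every changed file must sit inside the task's folder allowlist.
    return bool(changed_paths) and all(_match_any(p, ALLOWED_PATHS) for p in changed_paths)
```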

Step 3: Instrument test and evaluation feedback

Feed the loop with high-quality signals like unit tests, linting, type checks, integration results, and clear logs. If your test output is noisy or flaky, the agent will optimize against bad evidence. We’d argue that observability is more valuable than a bigger context window once coding loops become semi-autonomous.
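A cheap way to keep flaky evidence out of the loop is to rerun a test on unchanged code and see whether the verdict holds. In this sketch `run_test` is a hypothetical zero-argument callable that returns `True` on a pass; in practice it might wrap a single pytest node ID. A `flaky` result suggests quarantining the test rather than feeding its output back to the agent.

```python
from typing import Callable


def classify_stability(run_test: Callable[[], bool], runs: int = 5) -> str:
    """Rerun one test on unchanged code; report 'pass', 'fail', or 'flaky'.

    `run_test` is a hypothetical callable that returns True when the test passes.
    """
    if runs < 1:
        raise ValueError("runs must be at least 1")
    verdicts = {run_test() for _ in range(runs)}
    if verdicts == {True}:
        return "pass"
    if verdicts == {False}:
        return "fail"
    return "flaky"  # mixed verdicts: noisy evidence the agent should not optimize against
```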

Step 4: Insert human review gates

Require people to approve risky classes of changes such as security logic, billing code, IAM policy, or deployment config. Not every diff needs equal scrutiny, and that’s fine. But someone should still own the judgment calls where business intent matters more than passing tests.
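Risk-based review can start as a simple path-to-category map. The patterns below are hypothetical and would need to match your own repository layout; the point is that a diff touching security, billing, IAM, or deployment paths picks up a named review requirement, while everything else follows the normal process.

```python
from fnmatch import fnmatchcase
from typing import Dict, Iterable, List

# Hypothetical mapping from path patterns to the risky change classes.
RISK_CLASSES: Dict[str, List[str]] = {
    "security": ["*/auth/*", "*/crypto/*"],
    "billing": ["*/billing/*"],
    "iam": ["infra/iam/*"],
    "deploy_config": ["deploy/*", ".github/workflows/*"],
}


def review_classes(changed_paths: Iterable[str]) -> List[str]:
    """Return the sorted risk classes a diff touches; empty means routine review."""
    hits = set()
    for path in changed_paths:
        for risk, patterns in RISK_CLASSES.items():
            if any(fnmatchcase(path, p) for p in patterns):
                hits.add(risk)
    return sorted(hits)
```

A diff touching `services/billing/invoice.py` and `infra/iam/roles.json` would return `["billing", "iam"]`, which a harness could translate into required reviewers.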

Step 5: Track rollback and drift

Set explicit rollback triggers before you let recursive loops touch live services. Monitor deployment health, error rates, latency, and user-impact metrics so you can reverse quickly if an apparently valid patch degrades production behavior. A clean rollback path keeps experimentation safe enough to be useful.
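Rollback triggers are easier to honor when they are numbers agreed in advance. This sketch compares a post-deploy health snapshot with a baseline; the two metrics and both thresholds are illustrative assumptions, and a real setup would pull them from your monitoring stack and add user-impact signals too.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class HealthSnapshot:
    error_rate: float       # fraction of failed requests, e.g. 0.002
    p95_latency_ms: float   # 95th-percentile latency in milliseconds


@dataclass
class RollbackPolicy:
    # Hypothetical thresholds, agreed before the agent touches a live service.
    max_error_rate_increase: float = 0.005  # absolute increase over baseline
    max_latency_ratio: float = 1.25         # p95 may grow by at most 25%

    def reasons(self, baseline: HealthSnapshot, current: HealthSnapshot) -> List[str]:
        """Return the triggers that fired; an empty list means no rollback."""
        fired = []
        if current.error_rate - baseline.error_rate > self.max_error_rate_increase:
            fired.append("error_rate")
        if current.p95_latency_ms > baseline.p95_latency_ms * self.max_latency_ratio:
            fired.append("latency")
        return fired
```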

Step 6: Document agent behavior

Keep a searchable record of prompts, tool actions, test results, approvals, and final outcomes. This creates auditability for compliance and gives teams real data on where automation actually saves time. It also helps you separate meaningful gains from flashy demos when reporting to leadership.
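A searchable record does not need special infrastructure to start. This sketch appends one JSON object per agent run to a JSON Lines file; the field names are hypothetical and simply follow the list above (prompts, tool actions, test results, approvals, outcome).

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass
class AgentRunRecord:
    """One audit entry per agent loop; field names are illustrative."""
    task: str
    prompts: List[str]
    tool_actions: List[str]
    test_summary: str
    approved_by: Optional[str]  # None when no human approval was required
    outcome: str                # e.g. "merged", "rejected", "rolled_back"
    recorded_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def to_json_line(record: AgentRunRecord) -> str:
    """Serialize a record as a single JSON line (no embedded newlines)."""
    return json.dumps(asdict(record), sort_keys=True)


def append_record(path: str, record: AgentRunRecord) -> None:
    """Append one line per run so the log stays greppable and easy to load later."""
    with open(path, "a", encoding="utf-8") as f:
        f.write(to_json_line(record) + "\n")
```

One line per run keeps the log easy to grep during an incident and easy to load into a notebook when you report time savings to leadership.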

Key Takeaways

- ✓ Claude Code recursive self writing is mostly bounded automation, not fully independent self-modification

- ✓ The real loop is prompt, patch, test, review, deploy, then monitor and repeat

- ✓ Human gates still matter through CI checks, permissions, code review, and rollback controls

- ✓ Autonomous coding agents deliver their clearest gains on narrow engineering tasks, not the dramatic self-writing scenarios headlines suggest

- ✓ The biggest risks come from policy gaps, not magical self-aware code loops