⚡ Quick Answer

The vision language model physical reasoning benchmark tests whether VLMs understand structural, causal, and procedural constraints rather than only generating convincing images. It matters because a model that looks realistic can still fail badly in robotics, digital twins, and scientific workflows.

"Vision language model physical reasoning benchmark" is the phrase to watch in this new paper. Not image quality. The arXiv paper asks a blunt question the multimodal market has mostly sidestepped: can VLMs construct the real world, or do they merely imitate it convincingly? That's a tougher bar than many leaderboards admit. A model can draw a believable bridge, machine part, or lab setup while quietly breaking the physics that would make it usable.

What does the vision language model physical reasoning benchmark actually measure?

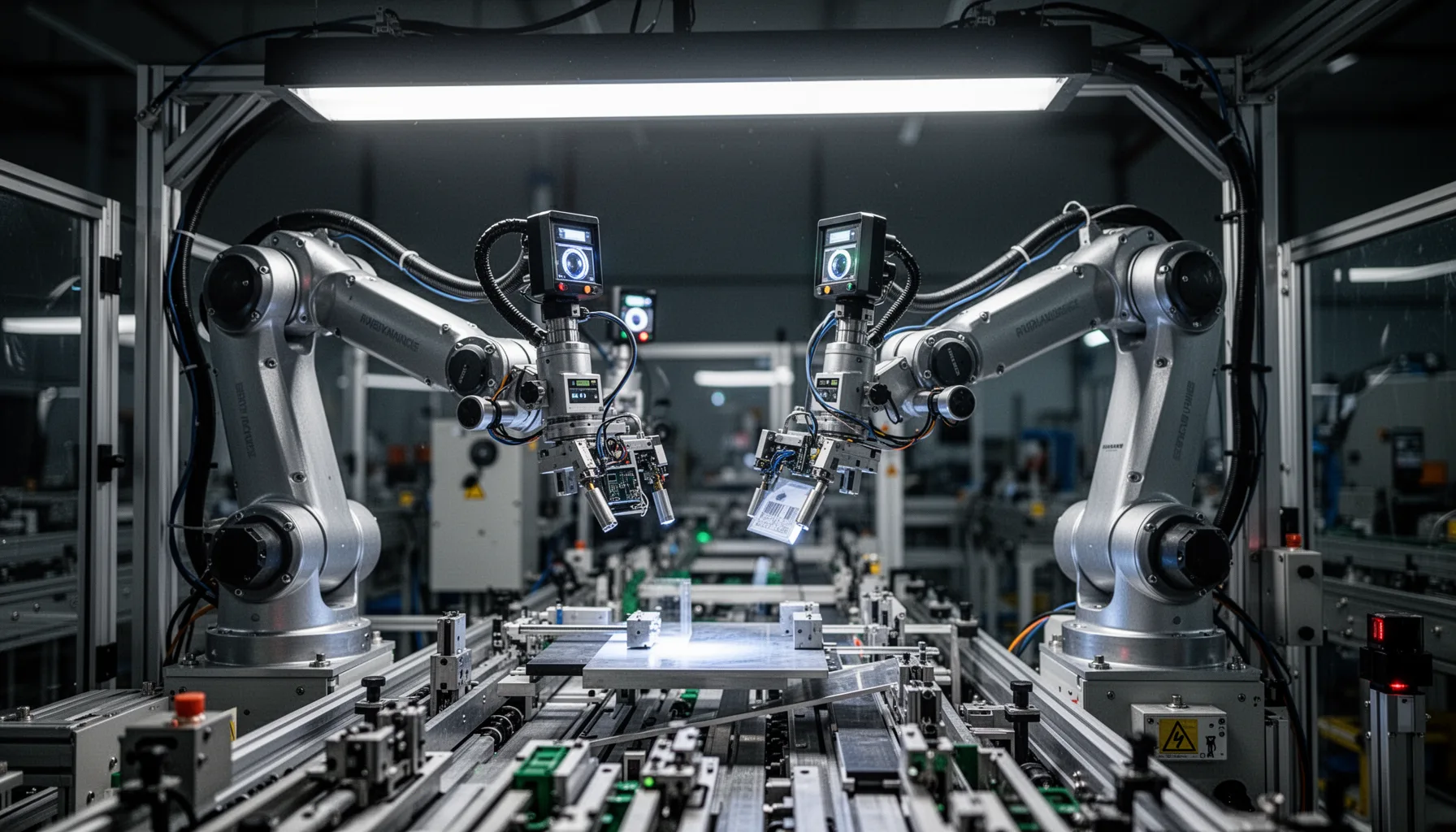

The vision language model physical reasoning benchmark checks whether a model respects real-world structural, causal, and procedural constraints during generation and reasoning. That's the shift. Most multimodal evaluations still reward perceptual realism, caption alignment, or broad reasoning fluency, yet those metrics don't ask whether an output could exist, function, or be assembled in the physical world. The paper's framing matters because physical reasoning in VLMs isn't a cosmetic add-on; it's a different standard of competence altogether. Take a generated factory layout of the kind produced in Siemens-style planning software. It can look polished while placing equipment where maintenance is impossible or breaking process-flow dependencies. Not a minor miss. In manufacturing or scientific visualization, that means the model can impress a reviewer while quietly undercutting the workflow it's supposed to support. We'd argue this benchmark matters because it stops confusing visual plausibility with world understanding, a mix-up that has shown up in far too much multimodal coverage.

How far are VLMs from real-world understanding in practice?

How far are VLMs from real-world understanding? Probably farther than recent demos make it seem. The strongest current VLMs from firms like OpenAI, Google DeepMind, and Anthropic can produce strikingly coherent multimodal outputs, but coherence alone doesn't prove grounded reasoning about load, support, sequence, containment, or causality. In robotics, the gap appears when a model describes a task accurately yet misses grasp constraints or object stability. In digital twins, it shows up when the scene looks right while the underlying system relationships are impossible. NVIDIA's Omniverse work has sparked serious interest in simulation-backed AI, and that's a bigger shift than it sounds. Benchmarks like this arrive at the right moment. They reveal the difference between scene narration and scene understanding. And our view is simple: if a model can't preserve physical feasibility when it generates, teams shouldn't trust it in embodied or industrial settings, no matter how slick the demo looks.

Why the vision language model physical reasoning benchmark matters for robotics and manufacturing

The vision language model physical reasoning benchmark matters for robotics and manufacturing because these sectors pay for feasibility, not vibes. Simple enough. An industrial robot cell, warehouse path plan, or assembly instruction set has to obey geometry, sequencing, collision limits, and tool constraints. A VLM that only produces visually plausible plans can introduce hidden failure points that people catch only after time and money are already gone. Boston Dynamics, ABB, and Siemens all work in environments where procedural and physical correctness beats stylistic realism every single time. Worth noting. That's why this benchmark has direct deployment value. It can give teams a real leg up when they test whether models understand order of operations, support relationships, and state changes before those models influence layout planning or instruction generation. And compared with generic multimodal benchmarks, this one speaks in terms real operators actually care about: can the output work, not merely can it impress?

How to use a real-world reasoning benchmark AI teams can trust

A real-world reasoning benchmark that AI teams can trust should sit inside model evaluation before deployment, procurement, and governance reviews. That's the practical takeaway. If you're assessing vision language models for a robotics stack, a design assistant, or a scientific visualization tool, test them on failure classes tied to your domain: support violations, impossible assembly orders, causal discontinuities, and unsafe transitions. Then compare outputs against simulation, rule engines, or expert judgment instead of leaning on human aesthetic approval alone. For example, a pharmaceutical lab visualization assistant might generate a convincing procedure diagram that quietly swaps contamination-sensitive steps. A company like Merck would care. A domain rubric should catch that immediately. This is also where the broader topic of agents and multi-agent systems becomes relevant. The benchmark can act as a control layer for any system where multimodal agents feed planning, simulation, or embodied action. We wouldn't ship a VLM into a physical workflow without this check in place.
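
As a rough illustration of the domain rubric idea above, the sketch below checks a generated step sequence against "must come before" rules, which is one way to catch swapped contamination-sensitive steps. It is a minimal sketch, not the paper's method: the step names, the `PRECEDENCE_RULES` list, and the `find_order_violations` helper are all hypothetical, and it assumes the model's output has already been parsed into an ordered list of step labels.

```python
from typing import Dict, List, Tuple

# Hypothetical rubric: each pair (a, b) means step `a` must occur before step `b`.
# In practice these rules would come from an SOP or a domain expert.
PRECEDENCE_RULES: List[Tuple[str, str]] = [
    ("sterilize_workspace", "open_sample"),
    ("open_sample", "transfer_sample"),
    ("transfer_sample", "seal_container"),
]


def find_order_violations(
    steps: List[str], rules: List[Tuple[str, str]]
) -> List[str]:
    """Return readable messages for missing or out-of-order steps."""
    # Record the first position at which each step appears.
    position: Dict[str, int] = {}
    for index, step in enumerate(steps):
        position.setdefault(step, index)

    violations: List[str] = []

    def report(message: str) -> None:
        # Avoid repeating the same message when a step appears in several rules.
        if message not in violations:
            violations.append(message)

    for before, after in rules:
        if before not in position:
            report(f"missing step: {before}")
        elif after not in position:
            report(f"missing step: {after}")
        elif position[before] > position[after]:
            report(f"{before} must come before {after}")
    return violations


# A plausible-looking procedure that opens the sample before sterilizing.
generated = [
    "open_sample",
    "sterilize_workspace",
    "transfer_sample",
    "seal_container",
]
print(find_order_violations(generated, PRECEDENCE_RULES))
# -> ['sterilize_workspace must come before open_sample']
```

The check ignores how the procedure looks and asks only whether the order is valid, which is the point of scoring against rules rather than aesthetic approval.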

Step-by-Step Guide

1. Define physical success criteria

Start by naming what counts as physically correct in your setting. That could mean structural support, collision avoidance, procedural order, containment, or material compatibility. Make those criteria explicit before you compare models. Otherwise teams drift back to judging outputs by polish.

2. Build domain-specific test cases

Create prompts and scenarios based on real tasks from robotics, manufacturing, design, or science. Synthetic tests help, but field-shaped examples reveal more. Use actual assembly diagrams, workspace layouts, or step sequences where failure has a known signature. That's how you catch useful errors.

3. Score structural and causal validity

Evaluate whether generated outputs preserve support, connectivity, sequence logic, and cause-effect consistency. Don't stop at semantic matching. A model can mention the right objects while arranging them in impossible ways. Your scoring needs to punish that sharply.

4. Compare against simulation or rules

Run outputs through physics engines, CAD checks, workflow validators, or expert-authored rulesets. This creates an external source of truth. For embodied systems, pair model scoring with simulation replay. For industrial use, connect checks to standard operating procedures and safety constraints.

5. Separate realism from feasibility

Review visual quality and physical correctness as different dimensions. This sounds obvious, but many teams still blur them. A beautiful but impossible output should fail. Once that norm is set, model selection gets clearer fast.

6. Feed results into deployment gates

Use benchmark performance to decide where a VLM can and cannot operate. A model that passes visualization support may still fail planning support. Set access levels accordingly. That protects teams from broad deployment based on narrow strengths.
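
The last two steps can be sketched in a few lines: keep realism and feasibility as separate scores, then gate each use on feasibility alone. This is a minimal sketch under stated assumptions. The `CaseResult` record, the `GATES` thresholds, and the use names are hypothetical placeholders, and the violations are assumed to come from an external check such as a simulator, CAD validator, or ruleset.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class CaseResult:
    case_id: str
    realism: float  # 0..1 visual-quality rating, tracked but never used for gating
    violations: List[str] = field(default_factory=list)  # from external checks

    @property
    def feasible(self) -> bool:
        # A case passes only if the external checks found no physical violations.
        return not self.violations


def feasibility_rate(results: List[CaseResult]) -> float:
    """Fraction of test cases with no physical violations."""
    if not results:
        return 0.0
    return sum(r.feasible for r in results) / len(results)


def mean_realism(results: List[CaseResult]) -> float:
    """Average realism rating, reported separately from feasibility."""
    if not results:
        return 0.0
    return sum(r.realism for r in results) / len(results)


# Hypothetical minimum feasibility rate required for each kind of use.
GATES: Dict[str, float] = {
    "visualization_support": 0.80,
    "planning_support": 0.95,
}


def allowed_uses(results: List[CaseResult], gates: Dict[str, float]) -> List[str]:
    """Uses whose feasibility threshold the model meets; realism is ignored."""
    rate = feasibility_rate(results)
    return sorted(use for use, minimum in gates.items() if rate >= minimum)


# Ten cases: nine feasible, one polished but physically impossible.
results = [CaseResult(f"case-{i}", realism=0.9) for i in range(9)]
results.append(CaseResult("case-9", realism=0.99, violations=["unsupported beam"]))

print(allowed_uses(results, GATES))
# -> ['visualization_support']
```

Here the most realistic case is the one that fails, and the model clears the visualization gate but not the planning gate, which mirrors the narrow-strengths point in the final step.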

Key Takeaways

- ✓ The vision language model physical reasoning benchmark asks whether VLMs understand reality, not just appearance

- ✓ Physically plausible output matters more than pretty images in robotics and manufacturing use cases

- ✓ A realistic-looking generation can still break causal, structural, or procedural rules of the world

- ✓ This benchmark is useful because it turns multimodal hype into measurable deployment risk

- ✓ Teams should test VLMs on feasibility, constraint satisfaction, and sequence correctness before rollout