⚡ Quick Answer

Claude architecture patterns work best when teams choose the simplest architecture that reliably meets accuracy and workflow needs. Start with a simple LLM call, move to RAG when fresh proprietary knowledge becomes essential, and adopt agents only when the task truly needs multi-step tool use and stateful decisions.

Claude architecture patterns look tidy on vendor slides. Real production work doesn't. Teams often leap from one prompt to retrieval, then sprint toward agents, before they've even proved the user problem calls for that much machinery. We'd argue the wiser route starts slower: pick the lightest setup that hits your accuracy target, then add components only when the data points there. That's a bigger shift than it sounds.

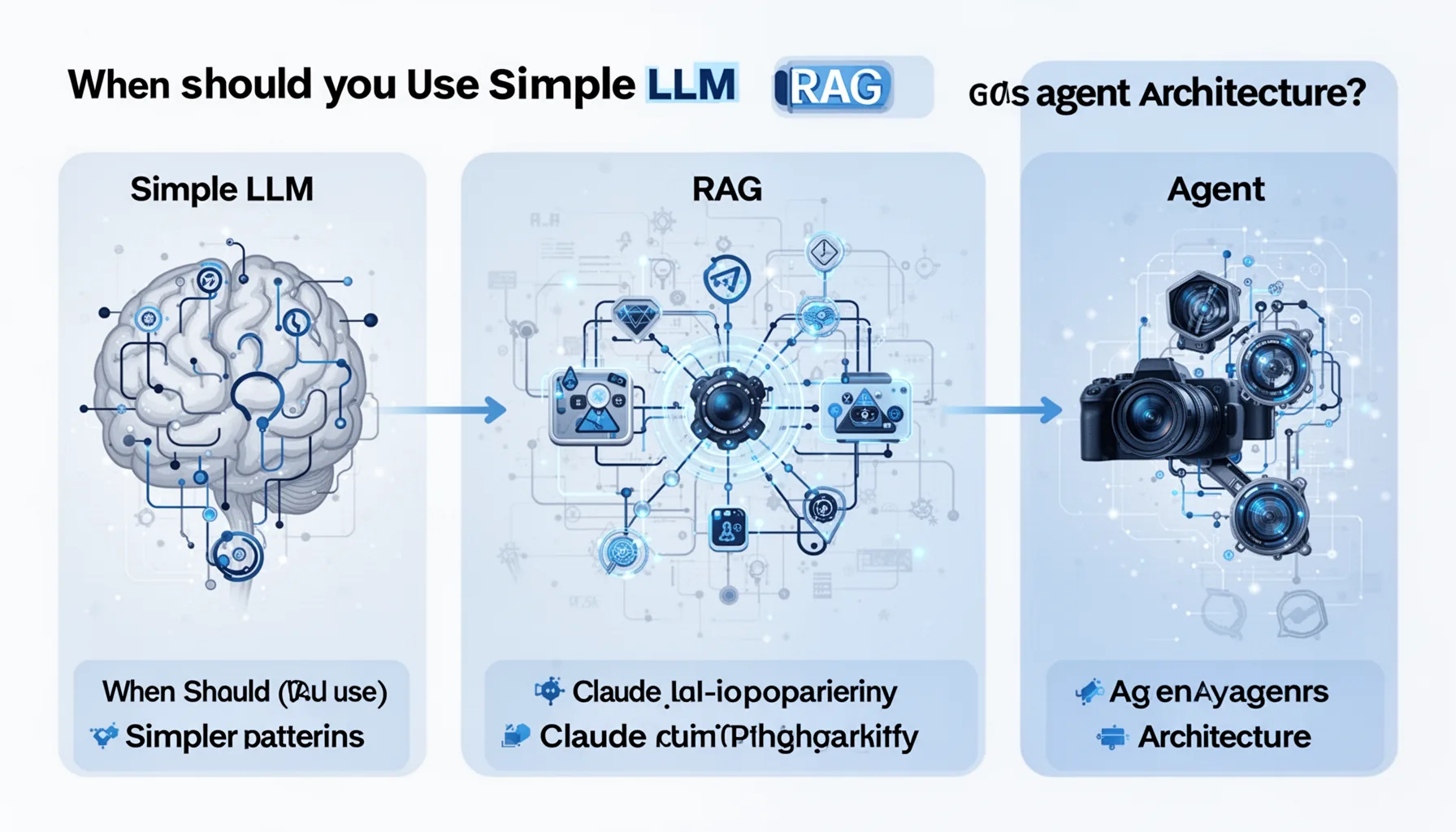

Claude architecture patterns: when should you use simple LLM vs RAG vs agent architecture?

Start with a simple LLM. Shift to RAG when the model needs outside knowledge. Move to agents only when the job truly demands multi-step action and judgment. That's the cleanest rule we can give, and honestly, most teams should pin it beside the monitor. Anthropic's guidance for Claude has consistently stressed clear task scope, solid tool definitions, and disciplined evaluation, not agent theater for its own sake. A plain LLM call usually works best for rewriting, summarization, classification, and a lot of coding help where the needed context fits in the prompt. RAG earns its place when answers rely on company docs, support rules, or frequently changing facts that need citations. Agent workflows fit when the system must pick tools, order actions, inspect outputs, and recover from mistakes across steps. We'd argue the industry's biggest architecture blunder isn't building too little. It's piling on retrieval and orchestration before the base task has earned them. That's exactly where teams like Notion have had to get more disciplined.
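
To make the rule concrete, here's a minimal Python sketch of the decision written as a function. The `TaskProfile` fields and the `choose_architecture` name are our own hypothetical labels, not anything from Anthropic's SDK, and in practice the flags would come from eval results rather than gut feel.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskProfile:
    # Hypothetical task descriptors for illustration; not part of any Claude API.
    context_fits_in_prompt: bool        # all needed context can be pasted into the prompt
    needs_private_or_fresh_docs: bool   # answers depend on company docs or fast-changing facts
    needs_citations: bool               # answers must point back to a source
    needs_tool_selection: bool          # the system must pick among tools
    needs_multi_step_state: bool        # order actions, inspect outputs, recover across steps


def choose_architecture(task: TaskProfile) -> str:
    """Return the lightest pattern that covers the task's stated needs."""
    # Agents only when the job needs tool choice AND state carried across steps.
    if task.needs_tool_selection and task.needs_multi_step_state:
        return "agent"
    # RAG when answers depend on knowledge the prompt alone can't carry.
    if (task.needs_private_or_fresh_docs or task.needs_citations
            or not task.context_fits_in_prompt):
        return "rag"
    # Otherwise a single, well-scoped prompt is the baseline.
    return "simple_llm"


summarize_diff = TaskProfile(
    context_fits_in_prompt=True, needs_private_or_fresh_docs=False,
    needs_citations=False, needs_tool_selection=False, needs_multi_step_state=False,
)
print(choose_architecture(summarize_diff))  # simple_llm
```

Note the ordering: the function checks for the heaviest requirement first but only returns "agent" when both tool choice and multi-step state are present, so a task that merely calls one tool still falls through to a lighter pattern.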

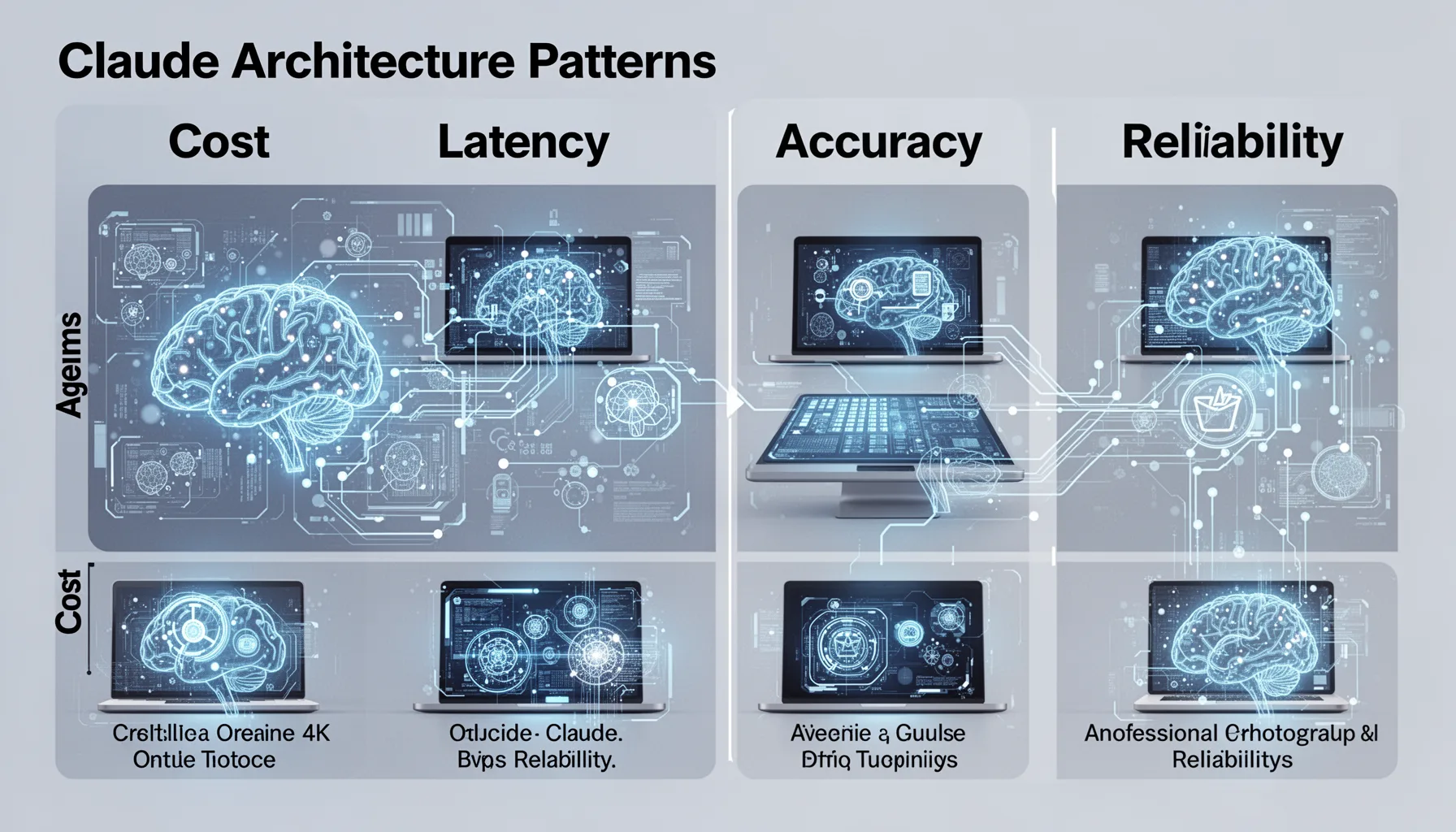

How Claude architecture patterns change cost, latency, accuracy, and reliability

Claude architecture patterns differ sharply on cost, latency, accuracy, and reliability, and those gaps should steer design choices far more than trend-chasing. Here's the thing: every extra subsystem creates another place where the user experience can crack. Simple LLM calls are usually the cheapest and quickest because they skip vector search, rerankers, tool routers, and orchestration loops. RAG often lifts factual accuracy on domain-heavy questions, but only when chunking, retrieval quality, and context assembly are handled well; weak retrieval can make a strong model look oddly clueless. Agent systems can handle richer work, like triaging a support case, checking policy, opening a ticket, and drafting a reply, yet every tool call adds latency and expands the error surface. OpenAI, Anthropic, and LangChain all published 2024 guidance that points to evaluation, tracing, and tool reliability as core operational concerns in agent stacks. Our take is blunt: if your SLA matters and your workflow isn't genuinely multi-step, agent architecture often acts like a tax dressed up as sophistication. Zapier-style automations make the point: many useful workflows are fixed trigger-and-action sequences that never needed a model to plan each step.
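
The error-surface point is easy to check with arithmetic. If each step in a chain succeeds independently with probability p, the whole n-step chain succeeds with probability p^n, and stages that run one after another add their latencies. The sketch below assumes independent, equally reliable steps and an illustrative 98% per-step success rate; neither is a measured figure for Claude or any tool.

```python
from typing import List


def chain_success_rate(step_success: float, steps: int) -> float:
    """Chance an n-step chain completes with no failed step.

    Assumes every step succeeds independently with the same probability,
    which real tool chains only approximate.
    """
    return step_success ** steps


def chain_latency_s(stage_latencies_s: List[float]) -> float:
    """End-to-end latency when stages run one after another (no parallelism)."""
    return sum(stage_latencies_s)


# Illustrative per-step success rate, not a benchmark.
for steps in (1, 3, 5):
    print(steps, f"{chain_success_rate(0.98, steps):.3f}")
# 1 0.980
# 3 0.941
# 5 0.904
```

Even at 98% per step, a five-step agent run finishes cleanly only about 90% of the time, before counting the extra latency each step adds.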

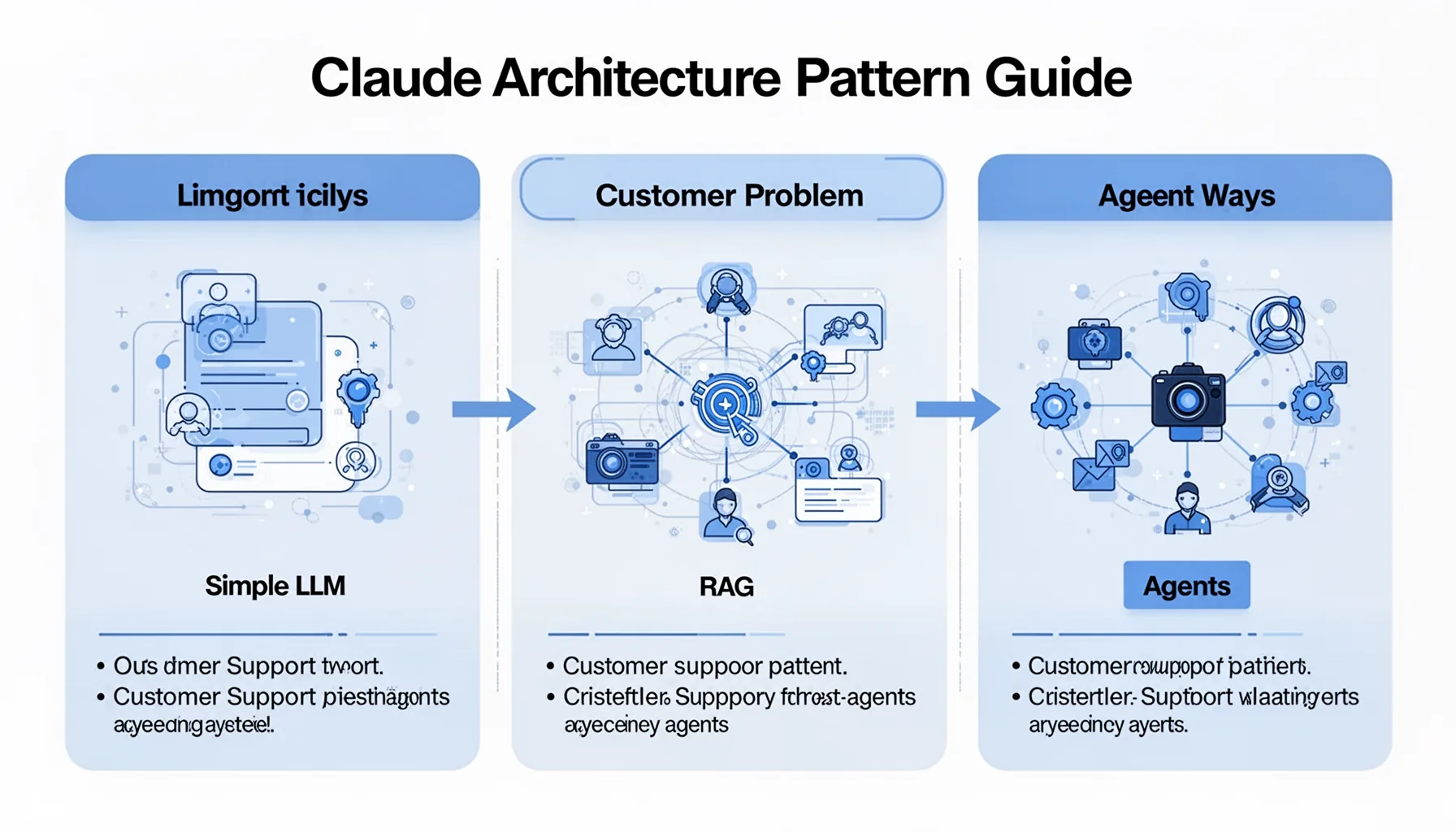

Claude architecture pattern guide using one business problem solved three ways

One customer-support policy assistant makes the tradeoffs between a simple LLM, RAG, and agents pretty easy to see. Imagine a SaaS company like Intercom building an internal helper for support reps who handle refund exceptions. In the simple LLM version, the rep pastes in case details and asks Claude for a suggested response. Fast. Cheap. But it can miss the latest policy if the prompt doesn't include it. In the RAG version, the system pulls current refund rules, escalation criteria, and product-specific edge cases from a document index, which usually improves consistency and auditability. In the agent version, Claude doesn't just read policy. It also checks the CRM, reviews account tenure, opens the billing tool, and drafts the reply with reason codes attached. That sounds slick, and sometimes it is, but it also creates far more failure points across permissions, tool errors, and state handling. We think this example points to the central truth of Claude architecture patterns: the best design is the one that meets the business need with the fewest moving parts. Salesforce teams run into this exact tradeoff all the time.
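
Here's a rough Python sketch of the three versions side by side. Everything in it is hypothetical: `fake_claude` stands in for a real Claude call (for example through Anthropic's Messages API), the `POLICY_DOCS`, `CRM`, and `BILLING` dictionaries stand in for a document index, a CRM, and a billing tool, and the $500 approval rule is invented for illustration. The point is the shape, not the implementation.

```python
from typing import Dict, List

# Hypothetical stand-ins for a document index, a CRM, and a billing tool.
POLICY_DOCS: Dict[str, str] = {
    "refund-window": "Refunds are allowed within 30 days of purchase.",
    "escalation": "Refunds over $500 require team-lead approval.",
}
CRM = {"acct-42": {"tenure_months": 26, "plan": "pro"}}
BILLING = {"acct-42": {"last_charge_usd": 640}}


def fake_claude(prompt: str) -> str:
    """Placeholder for a real Claude call; reports how much context it was given."""
    return f"[draft based on {len(prompt)} chars of context]"


def simple_llm(case_notes: str) -> str:
    # Version 1: policy is only in context if the rep pasted it into the case notes.
    return fake_claude(f"Suggest a reply for this refund case:\n{case_notes}")


def retrieve(query: str, k: int = 2) -> List[str]:
    # Naive keyword-overlap ranking; real systems use vector or hybrid search plus reranking.
    words = set(query.lower().split())
    ranked = sorted(POLICY_DOCS.items(),
                    key=lambda item: len(words & set(item[1].lower().split())),
                    reverse=True)
    return [f"[{doc_id}] {text}" for doc_id, text in ranked[:k]]


def rag(case_notes: str) -> str:
    # Version 2: current policy text is pulled in and labeled with its source id.
    context = "\n".join(retrieve(case_notes))
    return fake_claude(f"Policy:\n{context}\n\nCase:\n{case_notes}")


def agent(case_notes: str, account_id: str) -> dict:
    # Version 3: several tool calls in sequence, each one a possible failure point.
    trace: List[str] = []
    account = CRM.get(account_id)
    trace.append("crm_lookup")
    if account is None:
        return {"status": "error", "reason": "unknown account", "trace": trace}
    charge = BILLING[account_id]["last_charge_usd"]
    trace.append("billing_lookup")
    policy = retrieve(case_notes)
    trace.append("policy_lookup")
    # Invented rule mirroring the escalation doc above.
    reason_code = "NEEDS_APPROVAL" if charge > 500 else "AUTO_OK"
    draft = fake_claude(
        f"{policy}\nTenure: {account['tenure_months']} months\n{case_notes}")
    trace.append("draft_reply")
    return {"status": "ok", "reason_code": reason_code, "draft": draft, "trace": trace}


print(agent("Customer wants a refund after 40 days", "acct-42")["reason_code"])  # NEEDS_APPROVAL
```

Only the agent version can fail with "unknown account" or a missing billing record: a small-scale picture of the extra failure surface described above.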

When to use RAG vs agents: thresholds, anti-patterns, and migration triggers

RAG makes sense when knowledge freshness drives answer quality, and agents make sense when the system must take or coordinate actions across tools. The threshold isn't philosophical. It's operational. If a task needs fewer than two outside lookups and no autonomous sequencing, a well-built RAG flow usually beats an agent on latency, cost, and maintainability. One anti-pattern keeps showing up: agent-first design for internal knowledge search, where a plain retrieval stack would answer faster and with fewer weird failures. Another looks different: teams keep stuffing bigger and bigger prompts into a simple LLM flow when retrieval would cut token waste and improve grounding. Good migration triggers are usually easy to spot: rising hallucination rates on proprietary questions point to RAG, while user demand for execution, approvals, and cross-system workflows points to agents. GitHub Copilot users have learned similar lessons the hard way.
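
The threshold and the two migration triggers can be written down as explicit checks. This is a sketch under our own assumptions: the function names are hypothetical, "rising" simply means the latest hallucination rate in the window exceeds the earliest one, and the 20% action-request share is an arbitrary placeholder you'd replace with your own number.

```python
from typing import List, Optional


def rag_or_agent(outside_lookups: int, needs_autonomous_sequencing: bool) -> str:
    """Rule of thumb: under two lookups and no sequencing favors RAG."""
    if outside_lookups < 2 and not needs_autonomous_sequencing:
        return "rag"
    # Crossing the threshold doesn't prove an agent is needed; it means evaluate one.
    return "evaluate_agent"


def migration_signal(current: str, proprietary_halluc_rates: List[float],
                     action_request_share: float,
                     action_threshold: float = 0.2) -> Optional[str]:
    """Map the two migration triggers onto simple checks.

    proprietary_halluc_rates: hallucination rate on proprietary questions per
    review window, oldest first. action_request_share: fraction of requests that
    ask the system to execute, approve, or act across systems. The default
    threshold is a placeholder, not a recommendation.
    """
    rising = (len(proprietary_halluc_rates) >= 2
              and proprietary_halluc_rates[-1] > proprietary_halluc_rates[0])
    if current == "simple_llm" and rising:
        return "move_to_rag"
    if current in ("simple_llm", "rag") and action_request_share >= action_threshold:
        return "consider_agent"
    return None


print(rag_or_agent(1, False))                                       # rag
print(migration_signal("simple_llm", [0.04, 0.07, 0.11], 0.05))     # move_to_rag
```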

Step-by-Step Guide

Step 1: Start with the narrowest useful task

Pick one business problem with a measurable success condition, such as drafting a support answer or summarizing a code diff. Avoid vague goals like 'build an AI assistant for the whole company.' Tight scope reveals quickly whether a simple Claude call already clears the bar.
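
One way to keep the scope honest is to write the success condition down as code before touching architecture. This sketch assumes a hypothetical `TaskSpec` with a per-output pass/fail rule and a target pass rate; the 60-word limit and 90% target are illustrative, not recommendations.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class TaskSpec:
    name: str
    success_check: Callable[[str], bool]  # pass/fail rule for a single output
    target_pass_rate: float               # share of eval outputs that must pass


def clears_bar(spec: TaskSpec, outputs: List[str]) -> bool:
    """True when enough outputs pass the task's measurable success condition."""
    if not outputs:
        raise ValueError("need at least one output to measure")
    passed = sum(spec.success_check(out) for out in outputs)
    return passed / len(outputs) >= spec.target_pass_rate


# Hypothetical condition: a diff summary is non-empty and at most 60 words.
diff_summary = TaskSpec(
    name="summarize a code diff",
    success_check=lambda out: 0 < len(out.split()) <= 60,
    target_pass_rate=0.9,
)
print(clears_bar(diff_summary, ["Renames foo to bar in utils.py.", "", "Adds retry logic."]))  # False
```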

Step 2: Measure baseline simple LLM performance

Run the task with a plain prompt before adding retrieval or tools. Track accuracy, latency, cost per task, and failure types on a fixed eval set. You need this baseline, or every future upgrade will feel better without proving it is better.
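
A baseline harness doesn't need to be elaborate. The sketch below assumes each architecture is wrapped as a function returning `(answer, cost_usd)`, grades by exact match (a simplification), and counts exceptions as failures by type. `toy_classifier` and its cost figure are made up so the example runs offline; in a real setup it would wrap your plain Claude prompt.

```python
import time
from collections import Counter
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass(frozen=True)
class EvalCase:
    prompt: str
    expected: str


# Assumed interface: the task function returns (answer_text, cost_in_usd).
TaskFn = Callable[[str], Tuple[str, float]]


def run_eval(task_fn: TaskFn, cases: List[EvalCase]) -> dict:
    """Score one architecture on a fixed eval set: accuracy, latency, cost, failure types."""
    correct, total_cost, latencies = 0, 0.0, []
    failures: Counter = Counter()
    for case in cases:
        start = time.perf_counter()
        try:
            answer, cost = task_fn(case.prompt)
        except Exception as exc:  # crashes and timeouts are recorded by exception type
            failures[type(exc).__name__] += 1
            latencies.append(time.perf_counter() - start)
            continue
        latencies.append(time.perf_counter() - start)
        total_cost += cost
        # Exact-match grading is a simplification; swap in a rubric or model grader as needed.
        if answer.strip().lower() == case.expected.strip().lower():
            correct += 1
        else:
            failures["wrong_answer"] += 1
    n = len(cases)
    return {
        "accuracy": correct / n,
        "avg_latency_s": sum(latencies) / n,
        "cost_per_task_usd": total_cost / n,
        "failure_types": dict(failures),
    }


def toy_classifier(prompt: str) -> Tuple[str, float]:
    # Stand-in for a plain model call; the cost figure is invented.
    if "timeout" in prompt:
        raise TimeoutError("simulated")
    return ("positive" if "great" in prompt else "negative"), 0.001


cases = [EvalCase("great product", "positive"), EvalCase("awful support", "negative"),
         EvalCase("great idea, broken app", "negative"), EvalCase("timeout please", "negative")]
report = run_eval(toy_classifier, cases)
print(report["accuracy"], report["failure_types"])  # 0.5 {'wrong_answer': 1, 'TimeoutError': 1}
```

Keeping the same `cases` list for every later RAG or agent variant is what makes the before-and-after numbers comparable.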

Step 3: Add retrieval only when knowledge gaps appear

Introduce RAG when failures stem from missing or stale proprietary information. Build the retrieval layer carefully: document cleaning, chunk size, metadata, reranking, and citation formatting all matter. Bad retrieval can lower quality even when the model itself is strong.
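
As an illustration of the cleaning, chunking, metadata, and citation steps, here's a word-window chunker with overlap. The window sizes, metadata keys, and `[doc#index]` citation format are assumptions; production systems often chunk by tokens or by document structure instead, and reranking is not shown.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Chunk:
    doc_id: str
    index: int
    text: str
    metadata: Dict[str, str] = field(default_factory=dict)


def clean(text: str) -> str:
    """Collapse whitespace and stray line breaks left by HTML or PDF extraction."""
    return " ".join(text.split())


def chunk_document(doc_id: str, text: str, metadata: Dict[str, str],
                   chunk_words: int = 120, overlap_words: int = 20) -> List[Chunk]:
    """Split a cleaned document into overlapping word windows that carry metadata."""
    if not 0 <= overlap_words < chunk_words:
        raise ValueError("overlap must be non-negative and smaller than chunk size")
    words = clean(text).split()
    step = chunk_words - overlap_words
    chunks: List[Chunk] = []
    for start in range(0, len(words), step):
        chunks.append(Chunk(doc_id, len(chunks),
                            " ".join(words[start:start + chunk_words]), dict(metadata)))
        if start + chunk_words >= len(words):
            break  # this window already reached the end of the document
    return chunks


def citation(chunk: Chunk) -> str:
    """Stable citation label the model can be asked to quote back."""
    return f"[{chunk.doc_id}#{chunk.index}]"


policy = "Refunds are allowed within 30 days.\n\nRefunds over $500 need team-lead approval."
for c in chunk_document("refund-policy", policy, {"version": "2024-06"},
                        chunk_words=8, overlap_words=2):
    print(citation(c), c.text)
# [refund-policy#0] Refunds are allowed within 30 days. Refunds over
# [refund-policy#1] Refunds over $500 need team-lead approval.
```

The overlap keeps a rule that straddles a boundary readable in at least one chunk, and copying the metadata onto every chunk lets citations and filters work after retrieval.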

Step 4: Introduce agents for real action chains

Move to an agent pattern only when the workflow needs tool choice, sequencing, retries, or state over multiple steps. For example, if Claude must read a ticket, fetch account status, consult policy, and draft a response, orchestration starts to make sense. If it just needs one lookup, don't overbuild it.
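
The ticket example maps onto a small orchestration loop: a planner chooses the next tool, the loop runs it with retries, and results accumulate in shared state. In this sketch `scripted_planner` is a hard-coded stand-in for Claude's tool choice, and the tools built by `make_demo_tools` are hypothetical, with `fetch_account` failing once to exercise the retry path.

```python
from typing import Any, Callable, Dict, Optional, Tuple


class ToolError(Exception):
    """Raised by a tool when a call fails (timeout, bad response, and so on)."""


Step = Optional[Tuple[str, Dict[str, Any]]]


def run_agent(plan_next_step: Callable[[Dict[str, Any]], Step],
              tools: Dict[str, Callable[..., Any]], state: Dict[str, Any],
              max_steps: int = 8, max_retries: int = 2) -> Dict[str, Any]:
    """Minimal agent loop: plan a step, run the tool with retries, keep results in state."""
    for _ in range(max_steps):
        step = plan_next_step(state)
        if step is None:                       # planner decided the task is complete
            state["status"] = "done"
            return state
        name, args = step
        for attempt in range(1, max_retries + 2):
            try:
                state[name] = tools[name](**args)
                break                          # success: stop retrying this tool
            except ToolError as exc:
                state.setdefault("errors", []).append(f"{name} attempt {attempt}: {exc}")
        else:                                  # every attempt failed: stop rather than loop
            state["status"] = f"gave up on {name}"
            return state
    state["status"] = "step budget exhausted"
    return state


def scripted_planner(state: Dict[str, Any]) -> Step:
    """Stand-in for Claude choosing the next tool based on what it has seen so far."""
    if "read_ticket" not in state:
        return "read_ticket", {"ticket_id": state["ticket_id"]}
    if "fetch_account" not in state:
        return "fetch_account", {"account_id": state["read_ticket"]["account_id"]}
    if "consult_policy" not in state:
        return "consult_policy", {"topic": state["read_ticket"]["topic"]}
    if "draft_reply" not in state:
        return "draft_reply", {"account": state["fetch_account"],
                               "policy": state["consult_policy"]}
    return None


def make_demo_tools() -> Dict[str, Callable[..., Any]]:
    """Hypothetical tools; fetch_account fails on its first call to exercise retries."""
    calls = {"fetch_account": 0}

    def read_ticket(ticket_id: str) -> Dict[str, str]:
        return {"ticket_id": ticket_id, "account_id": "acct-42", "topic": "refund"}

    def fetch_account(account_id: str) -> Dict[str, Any]:
        calls["fetch_account"] += 1
        if calls["fetch_account"] == 1:
            raise ToolError("CRM timeout")
        return {"account_id": account_id, "tenure_months": 26}

    def consult_policy(topic: str) -> str:
        return f"{topic}: allowed within 30 days"

    def draft_reply(account: Dict[str, Any], policy: str) -> str:
        return f"Draft for {account['account_id']} citing '{policy}'"

    return {"read_ticket": read_ticket, "fetch_account": fetch_account,
            "consult_policy": consult_policy, "draft_reply": draft_reply}


final = run_agent(scripted_planner, make_demo_tools(), {"ticket_id": "T-1"})
print(final["status"], final["errors"])  # done ['fetch_account attempt 1: CRM timeout']
```

The step budget and retry cap are the parts that matter most here: without them, a confused planner or a dead tool turns into an open-ended loop.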

Step 5: Set migration triggers and kill switches

Define numerical triggers before launch, such as hallucination rate above a threshold, average latency above target, or tool failure rate beyond tolerance. Also define rollback paths. Teams that skip kill switches often trap themselves inside expensive architectures they can't simplify under pressure.
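
Triggers and a kill switch can be as simple as a threshold check plus a router that falls back to a lighter architecture. The threshold values and the `KillSwitchRouter` name are placeholders for illustration; a real system would feed this from monitoring and would likely require a human to reset it.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Triggers:
    # Placeholder thresholds; pick real values from your baseline before launch.
    max_hallucination_rate: float = 0.05
    max_avg_latency_s: float = 4.0
    max_tool_failure_rate: float = 0.10


def breached(metrics: Dict[str, float], t: Triggers) -> List[str]:
    """Names of every metric currently past its launch threshold.

    A metric missing from `metrics` is treated as healthy (0.0).
    """
    limits = {
        "hallucination_rate": t.max_hallucination_rate,
        "avg_latency_s": t.max_avg_latency_s,
        "tool_failure_rate": t.max_tool_failure_rate,
    }
    return [name for name, limit in limits.items() if metrics.get(name, 0.0) > limit]


class KillSwitchRouter:
    """Serves the primary architecture until any trigger fires, then pins
    traffic to the simpler fallback until someone resets it by hand."""

    def __init__(self, primary: str, fallback: str, triggers: Triggers):
        self.primary, self.fallback, self.triggers = primary, fallback, triggers
        self.active = primary

    def observe(self, metrics: Dict[str, float]) -> List[str]:
        reasons = breached(metrics, self.triggers)
        if reasons:
            self.active = self.fallback  # roll back to the lighter architecture
        return reasons

    def reset(self) -> None:
        self.active = self.primary


router = KillSwitchRouter(primary="agent", fallback="rag", triggers=Triggers())
print(router.observe({"hallucination_rate": 0.02, "avg_latency_s": 6.5,
                      "tool_failure_rate": 0.15}), router.active)
# ['avg_latency_s', 'tool_failure_rate'] rag
```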

Step 6: Evaluate continuously with production-like tests

Use offline evals, shadow traffic, and human review to compare simple LLM, RAG, and agent versions on the same task. Test for reliability, not just best-case output quality. What wins in a polished demo can lose badly when real users send incomplete, messy, contradictory inputs.
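
Here's a sketch of comparing versions on the same cases, clean and deliberately messy, plus a shadow-mode wrapper that serves one version while silently scoring another. The perturbations in `messy_variants` and the two toy bots are hypothetical stand-ins for real user inputs and for your simple LLM, RAG, and agent implementations.

```python
from typing import Callable, Dict, List, Tuple

Variant = Callable[[str], str]


def messy_variants(text: str) -> List[str]:
    """Hypothetical perturbations standing in for incomplete or sloppy user input."""
    words = text.split()
    return [
        text,                                         # clean original
        text.upper(),                                 # odd casing
        " ".join(words[: max(1, len(words) // 2)]),   # truncated, incomplete request
        "  " + "   ".join(words) + "  ",              # stray whitespace
    ]


def compare(variants: Dict[str, Variant],
            cases: List[Tuple[str, str]]) -> Dict[str, Dict[str, float]]:
    """Pass rate per variant on the clean cases and on messy versions of the same cases."""
    report = {}
    for name, fn in variants.items():
        clean_pass = sum(fn(q) == want for q, want in cases)
        messy = [(m, want) for q, want in cases for m in messy_variants(q)]
        messy_pass = sum(fn(m) == want for m, want in messy)
        report[name] = {"clean": clean_pass / len(cases), "messy": messy_pass / len(messy)}
    return report


def shadow(primary: Variant, candidate: Variant, user_input: str, log: List[bool]) -> str:
    """Serve the primary answer; run the candidate silently and record agreement."""
    answer = primary(user_input)
    try:
        log.append(candidate(user_input) == answer)
    except Exception:
        log.append(False)  # a crashing candidate counts as disagreement
    return answer


def exact_match_bot(q: str) -> str:
    return "refund" if q == "can I get a refund" else "unknown"


def keyword_bot(q: str) -> str:
    return "refund" if "refund" in q.lower() else "unknown"


print(compare({"exact": exact_match_bot, "keyword": keyword_bot},
              [("can I get a refund", "refund")]))
# {'exact': {'clean': 1.0, 'messy': 0.25}, 'keyword': {'clean': 1.0, 'messy': 0.75}}
```

Both toy bots look perfect on the clean case; only the messy inputs separate them, which is the demo-versus-production gap this step is about.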

Key Takeaways

- ✓ Start with a simple LLM flow unless retrieval or tool orchestration is plainly required.

- ✓ RAG earns its keep when answers rely on current, proprietary, or traceable company knowledge.

- ✓ Agents fit multi-step tasks, but they also raise latency, cost, and failure modes.

- ✓ Migration triggers matter more than hype because overengineering can hurt reliability and budgets fast.

- ✓ One business problem solved three ways usually reveals tradeoffs better than abstract architecture diagrams.