⚡ Quick Answer

Workflow optimization for LLM agents is the discipline of improving how agent systems plan, route, execute, verify, and adapt work across tools, models, and memory. The newest research argues that dynamic runtime graphs for AI agents outperform static templates when tasks, tools, and constraints change during execution.

Workflow optimization for LLM agents has jumped from a niche research thread to a core systems question for enterprise AI. And it's happening fast. A new survey on dynamic runtime graphs and executable workflows arrives at the right time, because plenty of teams still build agents as fixed prompt chains even as production tasks shift under their feet. We think that gap explains why so many demos look sharp, then fold under ordinary operational pressure. Static templates are easy to sketch. Real agent work isn't. It's messy, stateful, and full of surprises.

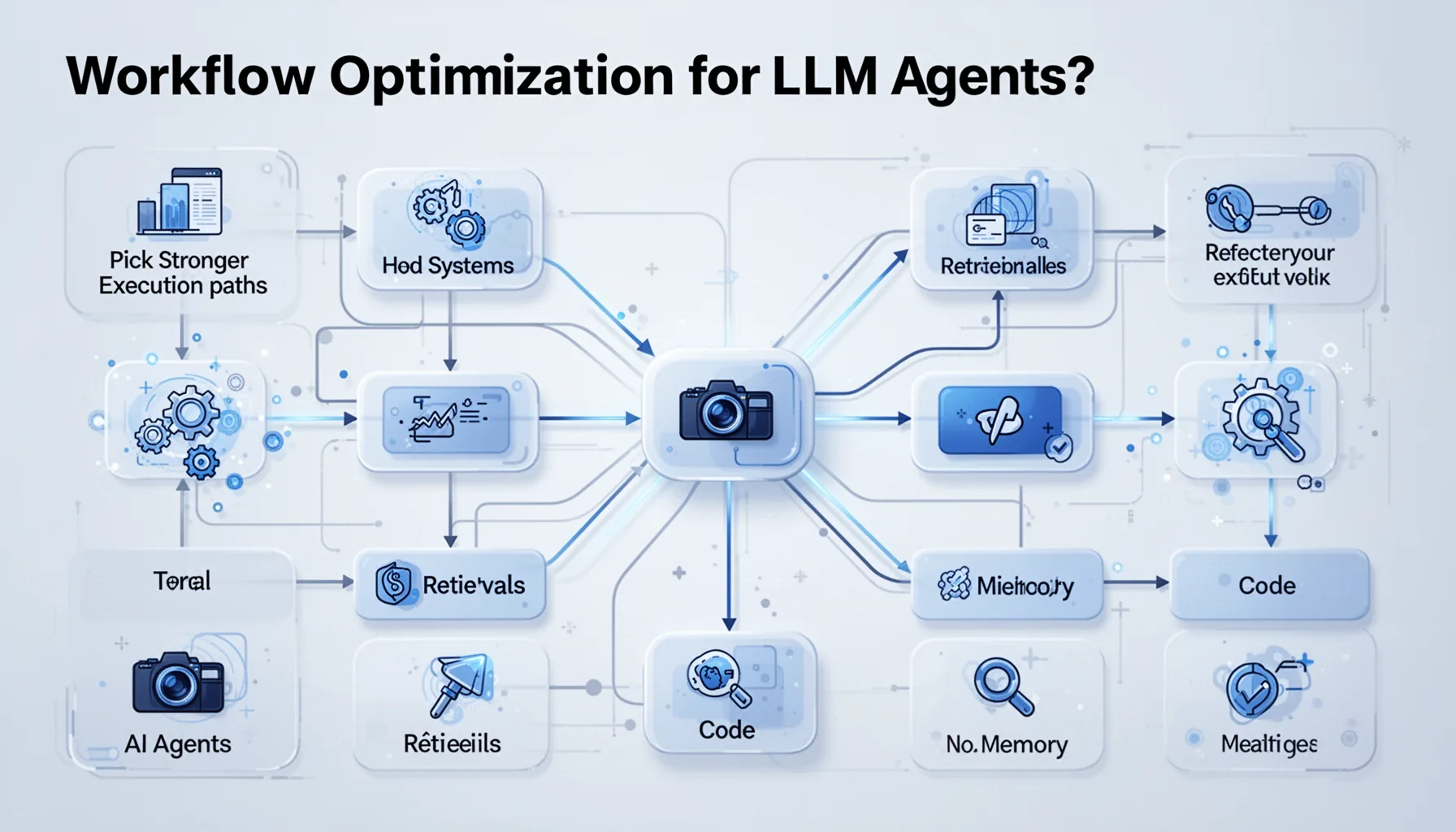

What is workflow optimization for LLM agents?

Workflow optimization for LLM agents means designing agent systems that pick stronger execution paths across LLM calls, retrieval, tools, code, memory, and verification. In practice, that makes orchestration a first-class engineering problem, not just a thin wrapper around prompts. The arXiv survey described in topic 367 frames modern agents as executable workflows rather than isolated model calls, and we think that's the right lens. According to LangChain's 2024 agent engineering guidance and Microsoft AutoGen design patterns, multi-step agents usually need planning, tool routing, and self-check stages to hold quality across longer tasks. That's not academic hair-splitting. If a support agent queries a CRM, drafts a response, checks policy, and updates a ticket, each transition can fail or branch, so the workflow itself becomes the product. We can already see that in enterprise copilots built on OpenAI tool calling, Anthropic's tool-use patterns, and orchestration layers like Temporal. For deeper subtopics, readers should pair this pillar with the supporting pieces on topics 358, 369, 370, and 372.

Why dynamic runtime graphs for AI agents beat static templates

Dynamic runtime graphs for AI agents beat static templates because they let systems change execution paths while work is underway. Static chains still have a place for narrow tasks, but they crack when an agent has to recover from weak retrieval, unavailable tools, or conflicting evidence. The survey's distinction between static templates and dynamic agent graphs echoes what engineers already know from distributed systems: fixed flows are predictable, adaptive flows are resilient. IBM researchers and Stanford's HAI community both pointed out in 2024 discussions that agent performance often depends less on model size than on decomposition, verification, and routing quality. A runtime graph can branch to a verifier node, skip an expensive tool call, or re-plan after a failed API request without restarting the whole job. Consider a software agent working with GitHub, a unit test runner, and a documentation retriever; frameworks such as OpenHands and AutoGen now model those steps as conditional graphs instead of strict sequences. We'd argue this is the architectural shift worth watching, because dynamic graphs push agents closer to workflow engines and farther from brittle prompt scripts.
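To make the re-planning idea concrete, here is a minimal sketch of a runtime graph in plain Python. It is not taken from the survey or from any framework: the node names, the `State` fields, and the stand-in retriever that fails on its first attempt are all assumptions chosen for illustration. The point it shows is that each node returns the name of the next node, so the path is decided while the job runs.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional


@dataclass
class State:
    """Mutable state passed between nodes (fields are illustrative)."""
    query: str
    evidence: List[str] = field(default_factory=list)
    answer: Optional[str] = None
    attempts: int = 0
    trace: List[str] = field(default_factory=list)


# A node works on the state and returns the next node's name, or None to stop.
Node = Callable[[State], Optional[str]]


def retrieve(state: State) -> Optional[str]:
    state.attempts += 1
    # Stand-in for a real retriever: the first attempt finds nothing.
    if state.attempts > 1:
        state.evidence.append(f"doc about {state.query}")
    return "draft" if state.evidence else "replan"


def replan(state: State) -> Optional[str]:
    # Rephrase and retry instead of restarting the whole job.
    state.query += " (rephrased)"
    return "retrieve" if state.attempts < 3 else None


def draft(state: State) -> Optional[str]:
    state.answer = f"Answer based on {len(state.evidence)} document(s)"
    return "verify"


def verify(state: State) -> Optional[str]:
    # Accept the draft only when it is backed by evidence.
    return None if state.evidence else "replan"


def run(nodes: Dict[str, Node], start: str, state: State, max_steps: int = 20) -> State:
    """Walk the graph, letting each node choose the next hop at runtime."""
    current: Optional[str] = start
    for _ in range(max_steps):  # hard cap so a bad graph cannot loop forever
        if current is None:
            break
        state.trace.append(current)
        current = nodes[current](state)
    return state


NODES: Dict[str, Node] = {
    "retrieve": retrieve,
    "replan": replan,
    "draft": draft,
    "verify": verify,
}

result = run(NODES, "retrieve", State(query="refund policy"))
print(result.trace)
```

A static chain would have gone straight from `retrieve` to `draft` with no evidence. Here the trace shows a detour through `replan` and a second retrieval before drafting, and the `max_steps` cap keeps that flexibility bounded.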

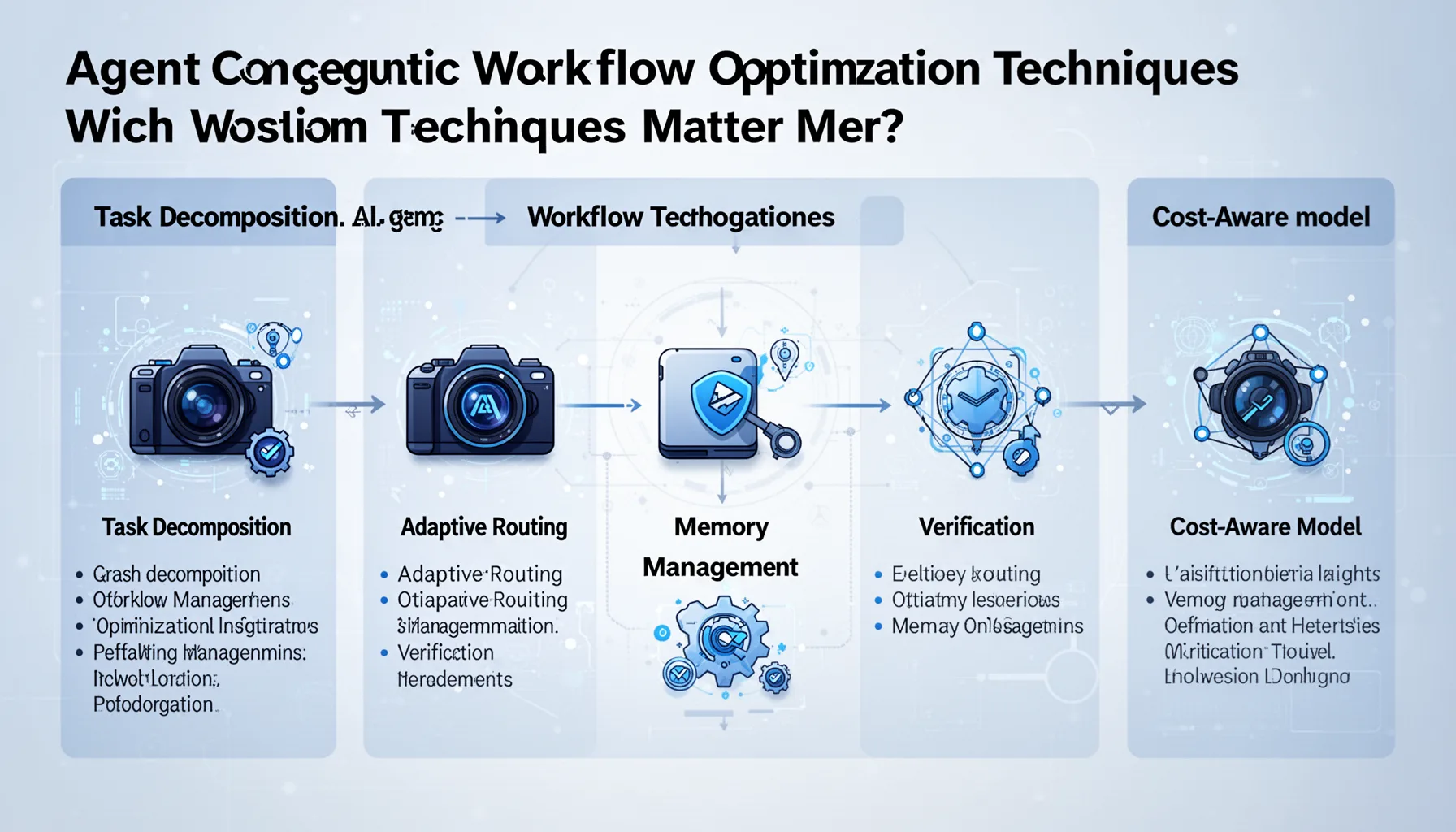

Which agentic workflow optimization techniques matter most?

The most consequential agentic workflow optimization techniques are task decomposition, adaptive routing, memory management, verification, and cost-aware model selection. Those five keep surfacing because agent failures usually come from coordination problems, not just weak reasoning. The survey maps workflows that interleave retrieval, code execution, and memory updates, which lines up with patterns in Devin-style coding agents, CrewAI pipelines, and enterprise RAG stacks. According to a 2024 Stanford report on foundation model systems, retrieval and tool-use stages can materially shift benchmark outcomes even when the base model stays the same. So the argument over prompts versus models misses the point. Teams should tune branching logic, observation loops, retry policies, and evaluator nodes with the same seriousness they bring to database tuning. A concrete example is Shopify's public work on AI-assisted commerce tooling, where model calls solve only part of the job while business rules, context assembly, and validation handle the rest. In our analysis, the best orchestration stacks treat the runtime graph as a measurable system, with latency, accuracy, and policy metrics at every node.

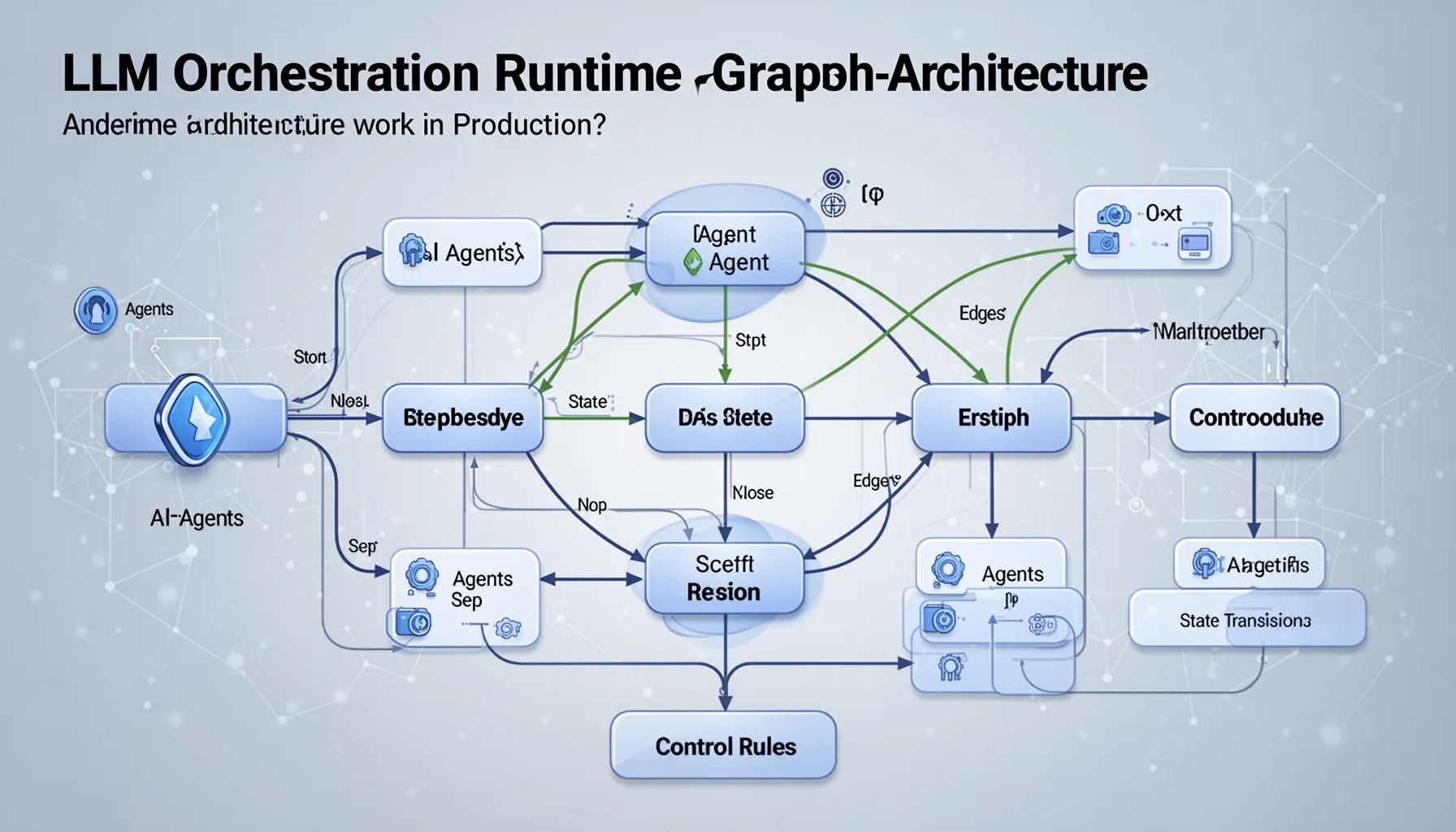

How does LLM orchestration runtime graph architecture work in production?

LLM orchestration runtime graph architecture works by representing agent execution as nodes, edges, state transitions, and control rules. That sounds abstract, but it's close to how mature workflow platforms like Temporal, Prefect, and Apache Airflow have long handled retries, branching, and observability for non-AI workloads. The difference is that LLM agents bring probabilistic outputs, external tools, and changing context windows, so the graph has to track uncertainty along with state. A production design usually includes a planner, a context builder, tool executors, a memory layer, a verifier, and a policy gate such as the one discussed in supporting topic 372. Microsoft reported in 2024 Azure AI orchestration materials that enterprise teams increasingly want audit logs, deterministic checkpoints, and human approval nodes for sensitive agent actions. That's a useful signal. If your runtime graph can't explain why the agent picked a branch, reused memory, or invoked a tool, governance turns into an afterthought instead of a control surface. We think production agent architecture now looks less like a chatbot stack and more like an event-driven application with a language model tucked inside it.
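One way to make branch choices explainable is to record a decision entry every time the graph picks an edge. The sketch below is an assumption-laden illustration, not any platform's API: `AuditLog`, `Decision`, `route_after_verifier`, and the 0.8 confidence threshold are all hypothetical names and values.

```python
import json
from dataclasses import asdict, dataclass
from typing import Any, Dict, List


@dataclass
class Decision:
    """One routing decision, stored with the inputs that produced it."""
    step: int
    node: str
    chosen_edge: str
    reason: str
    inputs: Dict[str, Any]


class AuditLog:
    def __init__(self) -> None:
        self._records: List[Decision] = []

    def record(self, node: str, chosen_edge: str, reason: str, **inputs: Any) -> None:
        step = len(self._records) + 1
        self._records.append(Decision(step, node, chosen_edge, reason, inputs))

    def explain(self, node: str) -> List[str]:
        """Human-readable answer to 'why did this node branch that way?'"""
        return [
            f"step {d.step}: {d.node} -> {d.chosen_edge} because {d.reason}"
            for d in self._records
            if d.node == node
        ]

    def to_jsonl(self) -> str:
        """Serialize records as JSON lines for an external log store."""
        return "\n".join(json.dumps(asdict(d), sort_keys=True) for d in self._records)


def route_after_verifier(confidence: float, log: AuditLog, threshold: float = 0.8) -> str:
    """Pick the next node after verification and log the reason for the choice."""
    if confidence >= threshold:
        edge = "policy_gate"
        reason = f"confidence {confidence:.2f} >= {threshold:.2f}"
    else:
        edge = "planner"
        reason = f"confidence {confidence:.2f} < {threshold:.2f}"
    log.record("verifier", edge, reason, confidence=confidence, threshold=threshold)
    return edge
```

Writing the reason at the moment the edge is chosen, rather than reconstructing it later from model output, is what lets the log serve as a control surface during a governance review.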

How static templates vs dynamic agent graphs shape governance and validation

Static templates versus dynamic agent graphs shape governance and validation by changing where control, auditability, and risk actually sit. Static workflows are easier to certify because every path is known in advance, while dynamic graphs need policy-aware branching, runtime checks, and tighter telemetry. That's why this topic sits squarely inside the cluster on AI Agent Governance, Validation, and Workflows. NIST's AI Risk Management Framework and OWASP guidance for LLM applications both stress monitoring, traceability, and controls around tool use, especially when systems can take actions outside the model. A concrete example is a finance operations agent that can fetch invoices, call a payment API, and update an ERP record; with a dynamic graph, each edge needs permissions and each high-risk node probably needs approval logic. Supporting coverage on topics 369 and 370 can dig into validation and governance details, while topic 372 covers the enforcement layer directly. Our view is blunt. Optimization without control becomes a reliability problem first, then a security problem right after.
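The finance example can be sketched as a small edge-level authorization check. Everything here is hypothetical: the node names, the permission strings such as `payments:write`, and the `authorize` helper are assumptions for illustration, not part of NIST or OWASP guidance.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, Optional, Tuple


@dataclass(frozen=True)
class Edge:
    """A transition the agent may take, with the control attached to it."""
    source: str
    target: str
    required_permission: str
    needs_approval: bool = False


# Only transitions listed here exist; anything else is denied by default.
EDGES: Dict[Tuple[str, str], Edge] = {
    ("planner", "fetch_invoice"): Edge("planner", "fetch_invoice", "invoices:read"),
    ("fetch_invoice", "call_payment_api"): Edge(
        "fetch_invoice", "call_payment_api", "payments:write", needs_approval=True
    ),
    ("call_payment_api", "update_erp"): Edge("call_payment_api", "update_erp", "erp:write"),
}


def authorize(
    source: str,
    target: str,
    granted: FrozenSet[str],
    approved_by: Optional[str] = None,
) -> str:
    """Return 'allow', 'hold: ...', or 'deny: ...' for a requested transition."""
    edge = EDGES.get((source, target))
    if edge is None:
        return "deny: edge not in graph"
    if edge.required_permission not in granted:
        return f"deny: missing {edge.required_permission}"
    if edge.needs_approval and approved_by is None:
        return "hold: awaiting human approval"
    return "allow"
```

The agent can still choose its path at runtime, but only among edges that exist, that its credentials cover, and, for the payment call, that a named person has approved.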

Step-by-Step Guide

1. Map the workflow as a graph

Start by drawing your agent as nodes and edges, not as a single prompt or a linear chain. Include planning, retrieval, tool calls, memory writes, verification, and human checkpoints. This simple move exposes hidden failure points early. It also gives teams a shared artifact for governance reviews.
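As a rough illustration of how a graph map exposes failure points, the sketch below writes a workflow as an adjacency map and flags risky nodes that are not followed directly by a checkpoint. The node names and the "direct successor" rule are assumptions; a real review might use a stricter or looser rule.

```python
from typing import Dict, List, Set

# Hypothetical agent workflow: node name -> possible next nodes.
GRAPH: Dict[str, List[str]] = {
    "plan": ["retrieve"],
    "retrieve": ["call_tool", "plan"],
    "call_tool": ["write_memory"],
    "write_memory": ["verify"],
    "verify": ["human_review", "plan"],
    "human_review": [],
}

RISKY: Set[str] = {"call_tool", "write_memory"}       # nodes with side effects
CHECKPOINTS: Set[str] = {"verify", "human_review"}    # nodes that can catch errors


def unguarded_risky_nodes(
    graph: Dict[str, List[str]], risky: Set[str], checkpoints: Set[str]
) -> List[str]:
    """Return risky nodes with no checkpoint among their direct successors."""
    return sorted(
        node
        for node in risky
        if not any(nxt in checkpoints for nxt in graph.get(node, []))
    )


def dangling_edges(graph: Dict[str, List[str]]) -> List[str]:
    """Return edges that point at nodes missing from the map."""
    return sorted(
        f"{src}->{dst}" for src, nexts in graph.items() for dst in nexts if dst not in graph
    )
```

On this map, `call_tool` is flagged because its output flows into a memory write before anything checks it, which is the kind of gap a linear prompt chain tends to hide.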

2. Instrument every execution path

Capture latency, cost, tool success rates, branch frequency, and final outcome quality for each node. Use traces from tools like LangSmith, OpenTelemetry, or custom event logs. You can't optimize what you can't see. And agents are especially good at hiding expensive detours.
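For teams starting with custom event logs, a decorator is one simple way to collect per-node numbers. This sketch uses only the standard library. The `instrumented` decorator, the `METRICS` table, and the flat per-call cost are assumptions for illustration, and it does not use the LangSmith or OpenTelemetry APIs.

```python
import time
from collections import defaultdict
from functools import wraps
from typing import Any, Callable, Dict

# Per-node counters; a real system would export these to a tracing backend.
METRICS: Dict[str, Dict[str, float]] = defaultdict(
    lambda: {"calls": 0, "failures": 0, "seconds": 0.0, "cost_usd": 0.0}
)


def instrumented(node: str, cost_usd: float = 0.0) -> Callable:
    """Wrap a node function so every call updates METRICS[node]."""

    def decorator(fn: Callable[..., Any]) -> Callable[..., Any]:
        @wraps(fn)
        def wrapper(*args: Any, **kwargs: Any) -> Any:
            stats = METRICS[node]
            stats["calls"] += 1
            stats["cost_usd"] += cost_usd  # assumed flat cost per call
            start = time.perf_counter()
            try:
                return fn(*args, **kwargs)
            except Exception:
                stats["failures"] += 1
                raise  # record the failure, then let the caller handle it
            finally:
                stats["seconds"] += time.perf_counter() - start

        return wrapper

    return decorator


@instrumented("crm_lookup", cost_usd=0.002)
def crm_lookup(customer_id: str) -> dict:
    """Hypothetical tool call used to demonstrate the decorator."""
    if not customer_id:
        raise ValueError("customer_id is required")
    return {"id": customer_id, "tier": "gold"}


def success_rate(node: str) -> float:
    """Fraction of calls to `node` that did not raise."""
    stats = METRICS[node]
    return 1.0 - stats["failures"] / stats["calls"] if stats["calls"] else 0.0
```

Branch frequency and outcome quality would need extra fields, but the same pattern applies: measure at the node boundary so detours show up in the numbers.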

3. Add adaptive routing rules

Route tasks to different models, tools, or sub-agents based on complexity, confidence, and policy class. Keep simple work on cheap models and reserve stronger reasoning loops for hard cases. This saves money without blindly cutting quality. It also reduces avoidable tool chatter.
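A routing rule like this can start as a few explicit thresholds. The sketch below is a hypothetical example: the `Task` fields, the score ranges, the threshold values, and the destination names are assumptions, and real systems would calibrate them against measured outcomes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    text: str
    complexity: float   # assumed score in [0, 1], e.g. from a cheap classifier
    confidence: float   # assumed score in [0, 1] from a prior attempt or estimate
    policy_class: str   # "public", "internal", or "restricted"


def route(task: Task) -> str:
    """Choose a destination by policy class first, then difficulty."""
    if task.policy_class == "restricted":
        return "human_review"      # policy outranks cost and speed
    if task.complexity < 0.3 and task.confidence >= 0.8:
        return "small_model"       # cheap path for easy, well-understood work
    if task.complexity < 0.7:
        return "large_model"       # stronger single-call reasoning
    return "planner_subagent"      # multi-step loop reserved for hard cases
```

Checking the policy class before anything else is a deliberate ordering: a cheap, confident task on restricted data should still not take the cheap path.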

4. Insert verification and rollback gates

Place evaluator nodes after risky actions such as code execution, database writes, or outbound API requests. Add rollback or retry logic when checks fail. Think of this as transaction safety for agent systems. It won't solve every error, but it sharply limits blast radius.
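The transaction analogy can be sketched with an in-memory store: snapshot, act, check, and restore on failure. The names `run_with_rollback` and `no_empty_fields` are hypothetical, and the snapshot approach is an assumption that only works for state the agent fully controls. External side effects such as sent emails or payments need compensating actions instead.

```python
import copy
from typing import Callable, Dict

Store = Dict[str, str]


def run_with_rollback(
    store: Store,
    action: Callable[[Store], None],
    check: Callable[[Store], bool],
    max_retries: int = 1,
) -> bool:
    """Apply `action`; keep the result only if `check` passes, else restore."""
    for _ in range(max_retries + 1):
        snapshot = copy.deepcopy(store)
        try:
            action(store)
            if check(store):
                return True
        except Exception:
            pass  # a crashing action is treated like a failed check
        # Roll back in place so other references to `store` see the old state.
        store.clear()
        store.update(snapshot)
    return False


def no_empty_fields(store: Store) -> bool:
    """Example evaluator: reject writes that blank out a field."""
    return all(value.strip() for value in store.values())
```

Retries make sense here because an agent action is usually not deterministic; a second attempt may produce a write that passes the check.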

5. Constrain tools with policy controls

Wrap sensitive tools behind explicit permission checks and scoped credentials. Open-source policy proxies and internal gateways can stop agents from making unsafe calls even when prompts drift. This is where supporting topic 372 fits neatly. Runtime freedom should never mean unchecked access.

6. Review graph performance continuously

Treat the workflow as a living system and revisit branch logic as tasks, tools, and models change. Compare static templates against dynamic runtime paths on real workloads, not toy demos. The survey's broad lesson is practical: orchestration quality compounds over time. Teams that tune their graphs usually outpace teams that only tune prompts.

Key Takeaways

- ✓ Workflow optimization for LLM agents is shifting from fixed chains to adaptive execution graphs.

- ✓ Dynamic runtime graphs let agents re-plan around failures, tool latency, and new evidence.

- ✓ The best LLM agent systems combine routing, verification, memory, and policy enforcement.

- ✓ Enterprises should treat orchestration as architecture, not prompt engineering with extra steps.

- ✓ This survey is a strong pillar resource for agent governance, validation, and workflow design.